A/B testing is common practice and it can be a powerful optimization strategy when it’s used properly. We’ve written on it extensively. Plus, the Internet is full of “How We Increased Conversions by 1,000% with 1 Simple Change” style articles.

Unfortunately, there are experimentation flaws associated with A/B testing as well. Understanding those flaws and their implications is key to designing better, smarter A/B test variations.

Where A/B Tests Are Going Wrong

Most flaws fall within one of these three categories…

- The variation doesn’t address actual causes. Through qualitative user research, buyer intelligence and quantitative conversion research, you’ve found some issues with your site. For example, you know too few visitors are submitting your lead gen form. But the variation you create assumes the wrong cause of the issue. For example, you assume it’s too many required fields when really it’s a technical error.

- The variation is based on a hunch. A/B testing will tell you which variation is better, but if those variations are based exclusively on internal opinion and guesses, you’re missing out. The best possible variation might not be present yet.

- The implementation is poor. Each variation is merely an implementation of a hypothesis. Who says it’s the hypothesis that’s wrong and not just that particular implementation? Often, you’ll need to try multiple implementations to get it right.

Failure to identify and mitigate these flaws will result in simply throwing A/B test ideas at the wall and hoping something eventually sticks.

Stop Throwing Ideas at the Wall

Of course, throwing ideas at the wall is never a smart strategy, but that’s especially true for A/B test ideas. Here’s why…

- Traffic is a scarce resource. The sample size you need to run a meaningful A/B test will surprise you. You don’t have traffic to waste on flawed A/B test ideas.

- User abandonment does happen. If you’re constantly running flawed variations, your visitors are being served a less-than-optimal experience. While you’re waiting around hoping you get lucky, your visitors are getting more and more annoyed. Eventually, they will call it quits and leave.

- Time is a scarce resource. Your annual test capacity will surprise you. You can only run so many tests per year. You don’t have time to waste on flawed A/B test ideas, especially if you rely on designers and developers to assist you.

The best way to identify and mitigate these flaws is via UX research. UX research will help you better understand the causes of conversion issues and prioritize hypotheses. The result? Smarter A/B tests, less wasted traffic, less wasted time, more revenue.

UX Research Methods That Can Make You a Smarter Optimizer

First, it’s important to understand the definition of UX, which Sean Ellis of GrowthHackers.com explains well…

Sean Ellis, GrowthHackers.com:

“When we talk about user experience (UX), we are referring to the totality of visitors’ experience with your site—more than just how it looks, UX includes how easy your site is to use, how fast it is, and how little friction there is when visitors try to complete whatever action it is they’re there to complete.” (via Qualaroo)

Researching how visitors experience your site, what their motivations are and what problems arise will help inform your optimization efforts. It’s all about understanding the expectation-reality gap, what causes it and what might help close it.

While there are many different UX research methods out there, there are a few that are particularly helpful for optimizers.

1. Investigating Intent and Points of Friction

As mentioned above, the expectation-reality gap is where your research will be focused. To understand the gap, you need to research visitor intent (expectation) and identify the various points of friction (reality).

Start by researching visitor intent and combining that with business intent. Often, as Sean explains, optimizers focus on goals and requirements when designing variations. In reality, variations should be designed based on intent…

Sean Ellis, GrowthHackers.com:

“Projects usually begin with design briefs, branding standards, high-level project goals, as well as feature and functionality requirements. While certainly important, these documents amount to little more than the technical specifications, leaving exactly how the website will fulfill the multiple user objectives (UX) wide open.

By contrast, if you begin by looking at the objectives of the user and the business, you can sketch out the various flows that need to be designed in order to achieve both parties’ goals. The user might be looking to find a fact, order a product, learn a skill, download a document, and so on. Business objectives could be anything from getting a lead, a like, a subscriber, a buyer, and so on. Ideally, you’ll design your flow in a way that meets both user and business objectives.” (via Qualaroo)

After you fully understand your visitors’ intentions and their objectives, and how they relate to your own, you can move on to friction. That is, exploring the answers to questions like…

- Which doubts and hesitations did you have before joining?

- Which questions did you have, but couldn’t find answers to?

- What made you not complete the purchase today?

- Is there anything holding you back from completing a purchase?

- What made you almost not buy from us?

- Were you able to complete your tasks on this website today?

How can you conduct this type of research? Primarily through customer and on-site surveys, which you can read about extensively in How To Use On-Site Surveys to Increase Conversions and Survey Design 101: Choosing Survey Response Scales.

You can also review live chat logs and talk with your customer support reps. Finally, you can try customer interviews. Depending on your objectives, you might want to interview new customers, customers who are leaving, repeat buyers, etc.

2. Getting to the Root of the Issues

To start, get a pulse on your usability with a benchmark usability study. If you’ve never conducted a benchmark usability study before, Nadyne Richmond, senior manager of UX at Genentech, shares her process…

- Choose tasks that are core to the site / application.

- Use metrics such as time on task, success rate / failure rate, number of user errors, number of system errors, satisfaction rating.

- Have the user simply perform the tasks, not think out loud. Ask them to read the tasks out loud and verbally confirm when they are finished.

- Ensure participants are part of your target audience. If you use personas, recruit an even mix.

- Since this is primarily a quantitative study, you’ll need a larger number of participants. Calculate the sample size ahead of time based on the number of tasks, the number of personas, etc.

Why is this important? Well, as Scott Berkun notes, a surprising number of visitors will fail to complete tasks that optimizers consider “core”…

Scott Berkun, Author:

“Most benchmark studies center on the basic usability study measurements:

- Success/Failure within a time threshold

- Time on task

- # of errors before completion

For most web or software interfaces, for most people, a surprisingly large percentage of people will fail to complete some of the core tasks. This is often a shock to managers and engineers, who tend to assume that most people can eventually do everything the product or website offers.” (via ScottBerkun.com)

It’s important to understand how the variations you’re creating to improve UX and conversions are actually impacting UX, which is why it’s advisable to spend the time on the benchmark usability study. Generally, as UX improves, your conversion rates will too.

Remember that for every core task, you should set a goal. Here’s an example from Scott…

Scott Berkun, Author:

“Typical goals should look something like this:

Task: Complete a purchase of 3 (or more) items from the shopping cart page.

Goal: 75% of all participants will be able to complete this task within 3 minutes

Fancier goals can include different sub-goals for novice, intermediate or advanced users (as defined by the usability engineer) or detailed goals for errors or mistakes. If it’s the first time you or your organization are doing this, I recommend keeping it as simple as possible.” (via ScottBerkun.com)

Initially, your goals will be relatively arbitrary unless you benchmark your competitors’ usability as well. As time goes on, you simply set realistic improvement goals.
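If you want to check a goal like Scott's against your own study data, here's a minimal Python sketch. It counts participants who completed the task within the time limit, then adds a Wilson score interval, because benchmark studies usually have small samples and a single percentage can look more precise than it is. The data format, the function names and the 20 sample participants are hypothetical. The interval is my addition, not part of Scott's process.

```python
import math
from statistics import NormalDist


def goal_hits(results, time_limit_s):
    """Count participants who completed the task within the time limit.

    `results` is a list of (completed, seconds_on_task) tuples.
    """
    hits = sum(1 for completed, seconds in results
               if completed and seconds <= time_limit_s)
    return hits, len(results)


def wilson_interval(successes, n, confidence=0.95):
    """Wilson score interval for a proportion (behaves well for small n)."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half, center + half


# Hypothetical study: 20 participants, goal is 75% completing within 3 minutes.
results = (
    [(True, 95.0)] * 8 + [(True, 160.0)] * 6   # completed in time
    + [(True, 240.0)] * 3                      # completed, but too slow
    + [(False, 300.0)] * 3                     # gave up
)
hits, n = goal_hits(results, time_limit_s=180)
low, high = wilson_interval(hits, n)
print(f"{hits}/{n} met the goal ({hits / n:.0%}), 95% interval {low:.0%} to {high:.0%}")
```

If the goal percentage falls inside the interval, the study can't tell you whether you've met it yet, which is a good argument for calculating your sample size ahead of time.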

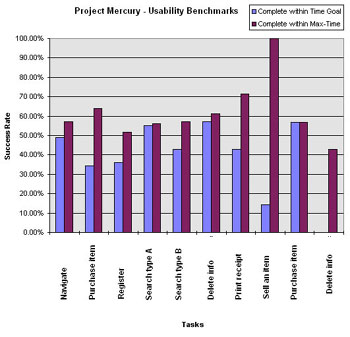

When reporting the results of your benchmark usability study, start with the goals and how many people were able to achieve those goals. Here’s a visual example from Scott…

Once you have a benchmark, you can start exploring specific UX issues, their causes and your resulting hypotheses. The best way to do that is through user testing, which can be either moderated or unmoderated.

Jerry Cao of UXPin summed up the difference perfectly…

- Moderated user testing is recommended during early stages of development, for advanced or complicated sites / products, and for products with strict security concerns.

- Otherwise, unmoderated user testing saves time by allowing you to test hundreds of participants, allows for more natural product usage (due to environment), and saves money by cutting equipment and moderator costs.

Unmoderated user testing is growing in popularity thanks to tools like UserTesting, but be aware that leading questions that pollute data are still a problem, even without a moderator present…

Jessica DuVerneay, The Understanding Group:

“Leading language is using a specific word or term to directly tell users to complete a straightforward task in a way that minimizes thinking, problem solving, or natural behaviors.

Consider this example: If a test needs to establish that a user can follow a company on Twitter, which of these would be better to ask?

- “From the home page, please follow Company X on Twitter.” (Most Leading)

- “What social media options are available for Company X? Please use the one that is most relevant to you.” (Less Leading)

- “If you were interested in staying in touch with Company X, how would you do that? Please show at least two options.” (Least Leading)

The answer is – it depends. Option A is perfect if you are testing the findability of a newly designed Twitter icon from the homepage. On the other hand, option C would be more appropriate for testing preferred user flows or desired functionality and content.” (via UserTesting.com)

You also have heat maps, click maps and scroll maps at your disposal to better understand issues and ensure you’re not designing variations for fictional causes.

3. Testing Your Findability

As Linn Vizard of Bridgeable explains, clear information architecture (IA) is key to good navigation, which is key to conversions. After all, if visitors can’t navigate your site to find what they’re looking for, they probably won’t convert.

Linn Vizard, Bridgeable:

“Good navigation relies on having very clear information architecture (IA) in place. IA is the structure or way that the site is organized. Part of great IA is about sorting content types into buckets which match the user’s mental models of how the site should work. Think about the types of things users might expect to see when landing on your site. Search analytics can help to identify what words they are using.” (via Adobe Dreamweaver Team Blog)

When optimizing your navigation, focus on keeping it simple by reducing options where possible, using clear labels, creating enough contrast between the site and the navigation, and using clear “you are here” indicators.

As Linn explains, you’ll want to focus on optimizing for those tried and true principles instead of going for a radical change…

Linn Vizard, Bridgeable:

“Above all, navigation is the primary means that people will use to wayfind on your site. It is crucial that it works well. Users need to be able to quickly locate themselves on the site, and figure out how to get to where they want. Spending time navigating by guesswork or trial and error will frustrate people, who will often resort to using Google to get to what they need. Bearing in mind navigation best practices will improve the chances of creating a good experience for your users. Navigation is not usually the place to innovate – stick with what works!” (via Adobe Dreamweaver Team Blog)

Prototypes matter and visitors are used to navigating sites a certain way. Hell, even icons and hamburger menus can still be risky.

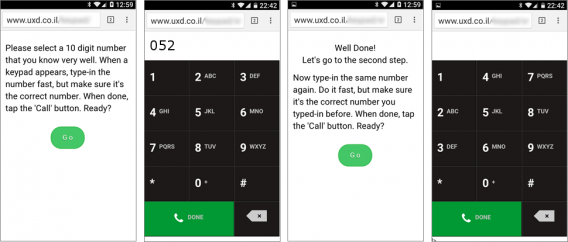

Shay Ben-Barak of User eXperience Design conducted an experiment to see just how important prototypes are to visitor performance…

On one side, you have the standard dial pad (read left to right). On the other, the numbers are rearranged (read top to bottom). How much harder do you think it was for people to dial a 10-digit telephone number they know by heart on the rearranged dial pad?

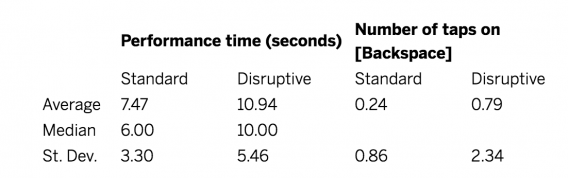

Well, here are the results…

As Shay explains, patterns and prototypes are difficult to change…

Shay Ben-Barak, User eXperience Design:

“From the above results, we can conclude that a non-standard keypad – that is not very different from the standard one in layout and in logic – can lead to an increase of 30%-50% in performance time, and can significantly increase the likelihood of mistakes – both noticed and corrected and unnoticed.

When users are accustomed to using a pattern, even a minor change in that pattern can be very expensive in performance terms. In fact, the minor change is confusing in two ways: (1) since the difference is minor, it can go unnoticed, hence , and (2) since the user is already well-trained with the standard pattern, their knowledge masks the new pattern and inhibit learning.” (via UX Magazine)

So, before you start A/B testing anything related to IA, be sure that findability is truly an issue and not another UX factor.

Using Tree Tests to Measure Findability

Tree tests make it possible to measure findability on your site. Jerry explains how they can be used to measure the effectiveness of your IA…

Jerry Cao, UXPin:

“In a nutshell, a tree test tasks participants with finding different information on a clickable sitemap (or “tree”). Using a usability testing tool like Treejack, you then record task success (clicking to the correct destination) and task directness (certainty of users that they found what was needed). This shows you the effectiveness and clarity of your information architecture.” (via The Next Web)

Jeff Sauro of MeasuringU does a great job of explaining why you would want to run a tree test as an optimizer, so I won’t reiterate…

Jeff Sauro, MeasuringU:

“Tree tests are ideally run to:

- Set a baseline “findability” measure before changing the navigation. This will reveal what items, groups or labels could use improvement (and possibly a new card sort).

- Validate a change: Once you’ve made a change, or if you are considering a change in your IA, run the tree test again with the same (or largely the same) items you used in the baseline study. This helps tell you quantitatively if you’ve improved findability, kept it the same, or just introduced new problems.” (via MeasuringU)

So, run a tree test to find out if your current links, labels and hierarchies are performing well before you start experimenting.

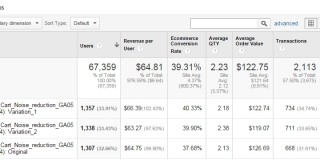

When you have a new variation that addresses IA issues, run the tree test again to see if the variation improves findability. If not, don’t bother running the A/B test. Go back to the drawing board and come up with a new hypothesis.
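Here's a minimal Python sketch of that before-and-after comparison. It summarizes task success and directness for each tree, then runs a two-proportion z-test on the success rates so you can judge whether the difference is more than noise. The record format (`success` and `direct` flags per attempt), the function names and the sample counts are hypothetical, and the z-test is my addition. It doesn't reflect Treejack's export format or Jeff's exact method.

```python
import math
from statistics import NormalDist


def tree_test_summary(attempts):
    """Share of attempts that ended at the correct destination (success)
    and share that were recorded as direct (directness)."""
    n = len(attempts)
    successes = sum(1 for a in attempts if a["success"])
    direct = sum(1 for a in attempts if a["direct"])
    return {"n": n, "successes": successes,
            "success_rate": successes / n, "directness": direct / n}


def two_proportion_p_value(x1, n1, x2, n2):
    """Two-sided p-value for the difference between two success rates."""
    p_pool = (x1 + x2) / (n1 + n2)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n1 + 1 / n2))
    if se == 0:  # both groups all-success or all-failure: no difference to test
        return 1.0
    z = (x2 / n2 - x1 / n1) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Hypothetical results for one task: 60 attempts on each tree.
baseline = ([{"success": True, "direct": True}] * 20
            + [{"success": True, "direct": False}] * 10
            + [{"success": False, "direct": False}] * 30)
variation = ([{"success": True, "direct": True}] * 36
             + [{"success": True, "direct": False}] * 9
             + [{"success": False, "direct": False}] * 15)

before = tree_test_summary(baseline)
after = tree_test_summary(variation)
p = two_proportion_p_value(before["successes"], before["n"],
                           after["successes"], after["n"])
print(f"Success: {before['success_rate']:.0%} -> {after['success_rate']:.0%}")
print(f"Directness: {before['directness']:.0%} -> {after['directness']:.0%}")
print(f"p-value for the change in success rate: {p:.4f}")
```

A small p-value alongside a higher success rate suggests the new IA is worth an A/B test. A flat or worse result is your cue to go back to the drawing board.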

Jeff wrote a detailed article on how to conduct tree tests, which you can read here.

4. Conducting Quality Assurance

Conducting proper quality assurance on all variations is vital. Failure to do so can seriously pollute your data, leaving you with false conclusions about what works and what doesn’t.

For example, you might assume your hypothesis was incorrect, when really it would’ve increased conversions had there not been a technical error.

Take the time to conduct cross-browser, cross-device testing and speed analysis on your site now. Then, do the same for every variation you test.

We’ve written about this extensively in the “Technical Analysis” section of our Conversion Optimization Guide. I definitely suggest taking the 5 minutes to read through it.

Conclusion

When optimizers throw ideas at the wall and hope something sticks, they end up with flawed variations…

- Variations that don’t address actual causes of lower conversions.

- Variations that are created based on a guess.

- Variations that are implemented poorly.

The result is lost time, traffic and money. To mitigate this issue, you can…

- Use customer surveys and on-site surveys to identify intent and points of friction.

- Conduct a benchmark usability study to understand how your variations impact UX (and thus, conversions).

- Use moderated or unmoderated user testing to explore specific UX issues and their true causes.

- Master the tried and true navigation principles, since navigation and IA are closely related. Then, conduct tree tests to measure the effectiveness of your IA (and how your variations impact IA).

- Conduct quality assurance, including cross-browser testing, cross-device testing and speed analysis.

If you’re interested in UX research, check out CXL Institute and the original UX studies we post every week.
