A key part of optimizing your site for Google is making it easier for crawlers to reach. That said, the “3-click distance” rule of thumb is rather arbitrary, and there’s no one-size-fits-all site architecture.

However, reducing crawl depth in the right cases can greatly improve SEO performance. For us, it led to a 23% increase in organic traffic.

How distance from the homepage can hurt crawling

By Pablo García, Content Lead at CXL.

Your site architecture can seriously impact how search engines crawl each page. If several of those pages aren’t directly linked to and from the homepage, it’s tougher for search engines to find them.

This can become a serious technical SEO problem when thousands of URLs are hard for crawlers to reach.

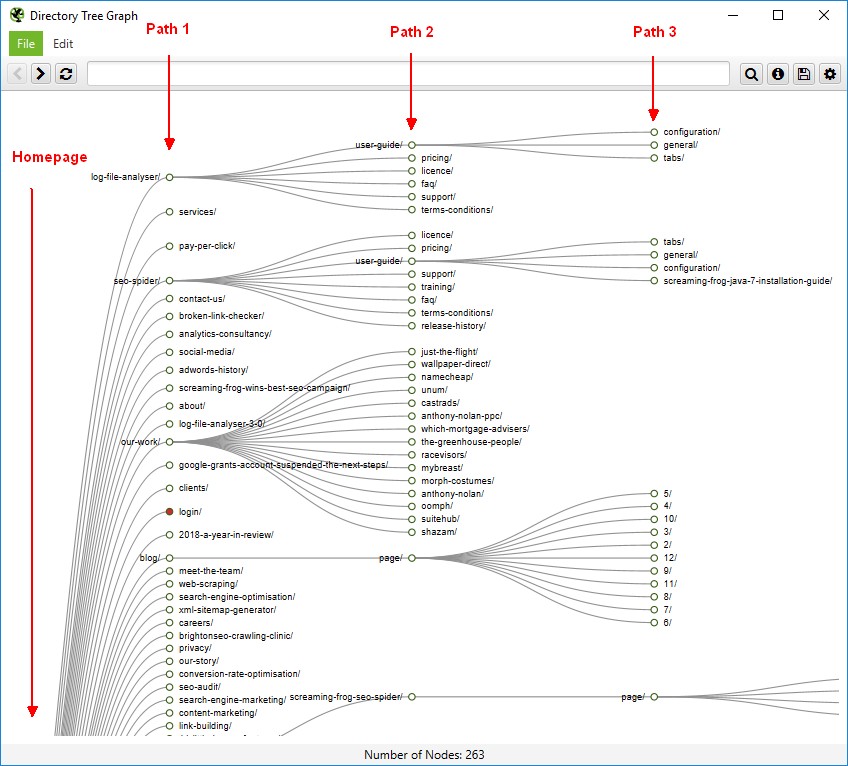

That reach can be measured with crawl depth: the distance from the root of a website’s architecture (normally the homepage) to a specific page, counted as the number of clicks required to get from the root to that page.

Because of this, when conducting a technical SEO audit, you’ll rarely have trouble getting the homepage indexed or crawled again after you edit some on-page or off-page elements. The homepage has the shallowest possible crawl depth, as opposed to pages that are many more clicks away and lack internal links.
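To make the definition concrete, here is a minimal sketch of how crawl depth could be computed with a breadth-first search over an internal-link graph. The `site` dictionary and its URLs are hypothetical, and a real audit would build the graph from a crawl export rather than by hand.

```python
from collections import deque


def crawl_depths(links, root):
    """Return the minimum number of clicks from root to each reachable page."""
    depths = {root: 0}
    queue = deque([root])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            # The first time a page is seen is also the shortest path to it.
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal-link graph: page -> pages it links to.
site = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/page/2", "/blog/post-a"],
    "/blog/page/2": ["/blog/post-b"],
    "/blog/post-a": [],
    "/blog/post-b": [],
    "/pricing": [],
}

depths = crawl_depths(site, "/")
for page, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(depth, page)
```

In this made-up graph, the post that is only linked from the second paginated page ends up three clicks from the homepage, one click deeper than the post linked from the first blog page.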

Web pages that aren’t clearly connected to the homepage are harder for search engines to identify. For sites with complex architecture and too many deep levels, some pages may not fit within Google’s crawl budget for the site, that is, the number of pages Googlebot will crawl on that site in a given period.

This crawl budget is bigger for popular sites with more organic traffic and links, as if Google took a site’s growing organic traffic as a signal that it deserves more time being explored and crawled.

The SEO impact of poor site architectures

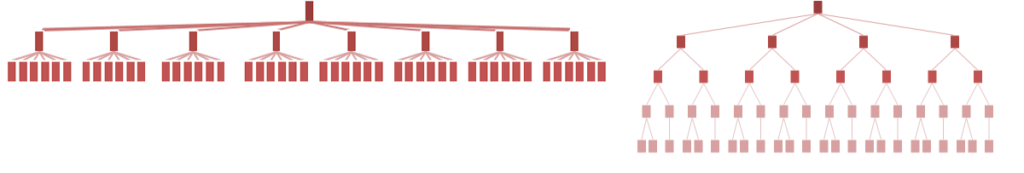

Site architecture can be flatter or deeper depending on how content is structured. In flat hierarchies, there are few vertical levels, and each level gives access to a large number of pages. In deep hierarchies, the same number of pages is divided across many more vertical levels.

Image source: Nielsen Norman Group

For crawlers, flat and shallow hierarchies are easier to navigate, while discovering content gets tricky when it’s buried under many layers.
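The trade-off between flat and deep hierarchies can be sketched with a little arithmetic. The function below is an illustration only: it assumes an idealized tree in which every page links to the same number of child pages, which real sites rarely match, and the name `min_depth` and the page counts are hypothetical.

```python
def min_depth(total_pages, links_per_page):
    """Smallest depth at which an idealized tree can hold total_pages pages.

    Assumes every page links to exactly links_per_page child pages.
    """
    if total_pages < 1 or links_per_page < 1:
        raise ValueError("total_pages and links_per_page must be positive")
    depth = 0
    level_size = 1  # the homepage is the only page at depth 0
    capacity = 1    # pages reachable within `depth` clicks
    while capacity < total_pages:
        level_size *= links_per_page
        capacity += level_size
        depth += 1
    return depth


# Hypothetical site with 1,000 pages and different numbers of links per page.
for fan_out in (3, 10, 100):
    print(fan_out, "links per page ->", min_depth(1000, fan_out), "clicks deep")
```

Under these assumptions, a 1,000-page site needs six levels with three links per page, three levels with ten links per page, and two levels with a hundred links per page, which is why broader pages pull content closer to the root.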

An old rule of thumb is that, to make reaching those deep pages easier, you should ensure most content is no more than three clicks away from your homepage. This may require creating pillar pages that receive link equity from the homepage and, at the same time, provide access to pages in the lower levels.

Users, however, don’t see a graph of your information architecture (unless they peek at the sitemap). For them, clear categories are easier to navigate and have a great impact on their experience.

Following the 3-click distance rule blindly can lead to overly broad navigation, with overlapping categories, overwhelming menus, and confused users.

Here’s what John Mueller shared on crawl depth and site structure:

Google should be able to crawl from one URL to any other URL on your website just by following the links on the page.

If that’s not possible, we lose a lot of context. If we only see your URLs through your sitemap, then we don’t really know how they are related to each other, and can’t understand how relevant is a piece of content in the context of your website.

John Mueller, Senior Search Analyst at Google
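One way to check for the situation described in the quote is to look for pages that appear in the sitemap but can’t be reached by following internal links. The sketch below assumes you already have the sitemap URLs and an internal-link graph in memory; the data and the function names `reachable` and `orphan_pages` are hypothetical.

```python
def reachable(links, root):
    """Return every page that can be reached from root by following links."""
    seen = {root}
    stack = [root]
    while stack:
        page = stack.pop()
        for target in links.get(page, ()):
            if target not in seen:
                seen.add(target)
                stack.append(target)
    return seen


def orphan_pages(sitemap_urls, links, root):
    """Return sitemap URLs that no chain of internal links leads to."""
    return sorted(set(sitemap_urls) - reachable(links, root))


# Hypothetical data: the sitemap lists a page that nothing links to.
links = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/post-a"],
}
sitemap = ["/", "/blog", "/blog/post-a", "/pricing", "/old-landing-page"]

orphans = orphan_pages(sitemap, links, "/")
print(orphans)
```

Any URL this returns is one that a crawler could only discover through the sitemap, without the context of the pages that would normally link to it.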

Should you use a flat or deep hierarchy for your site?

Although flat hierarchies seem to be the easiest for crawlers to discover and explore, they might not convey information in the clearest way, which can harm user experience in the end. The answer to this question comes down to the content categories that your site covers.

As Nielsen Norman Group explained:

Flat hierarchies tend to work well if you have distinct, recognizable categories, because people don’t have to click through as many levels. When users know what they want, simply get out of the way and let them find it.

But there are exceptions to every rule. In some situations, there are simply too many categories to show them all at one level. In other cases, showing specific topics too soon will just confuse your audience due to the lack of context.

Kathryn Whitenton, Senior UX Designer at Power TakeOff

Increasing organic traffic by displaying more posts on the blog page

By Bill Gaule, Senior SEO Strategist at Contact Studios.

Originally published in the Fast Marketing newsletter.

By displaying more blog posts on the blog page for our SaaS client, Contact Studios was able to increase organic traffic by 23%.

Here’s exactly how we did it:

TL;DR for this marketing tactic

- The company: SEO and content marketing agency Contact Studios, working for a SaaS client.

- The goal: Drive SEO traffic to the blog posts.

- The tactic: Increase the number of blog posts displayed on the blog page.

- The result: 23% increase in organic traffic to the blog in 30 days.

What’s the fast marketing tactic?

Our team at Contact Studios increased the number of blog posts displayed on our client’s blog page from 9 to 99 posts per paginated page.

This brought all blog posts closer to the homepage, reducing crawl depth and increasing link equity for all posts.
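A rough sketch of why this change reduces crawl depth follows. It rests on assumptions that are not from the case itself: a hypothetical archive of 300 posts, a blog index one click from the homepage, and pagination where each page links only to the next one (the worst case; numbered pagination links would shorten these paths).

```python
import math


def deepest_post_depth(total_posts, posts_per_page, blog_index_depth=1):
    """Clicks from the homepage to a post on the last paginated page.

    Assumes each paginated page links only to the next one (worst case).
    """
    pages = math.ceil(total_posts / posts_per_page)
    # Reach the blog index, step through the remaining pages, then open the post.
    return blog_index_depth + (pages - 1) + 1


TOTAL_POSTS = 300  # hypothetical archive size, not a figure from the case

before = deepest_post_depth(TOTAL_POSTS, 9)
after = deepest_post_depth(TOTAL_POSTS, 99)
print("9 posts per page:", before, "clicks to the deepest post")
print("99 posts per page:", after, "clicks to the deepest post")
```

With these made-up numbers, the oldest post moves from 35 clicks away to 5. The exact figures depend entirely on the archive size and pagination design, but the direction of the effect is the same.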

What was the result?

In the 30 days following this one change, the blog traffic increased by 23%. That’s almost 7,000 extra clicks month on month.
