Both your visitors and Google prefer a fast site. Faster sites have been shown to convert better and to rank higher in search results (SERPs), both of which mean more money for your business.

You’re running A/B split tests to improve results. But your A/B testing tool may actually be slowing down your site.

We researched 8 different testing tools to show how each one affects your site’s performance. We’ll outline the background and methodology first, but feel free to skip ahead to the results.

Background

1. Data Shows Faster Sites Convert Better

Visitors have a patience threshold. They want to find something, and unnecessary waiting makes them want to leave, just like in any shop.

For you, a slower site means less revenue.

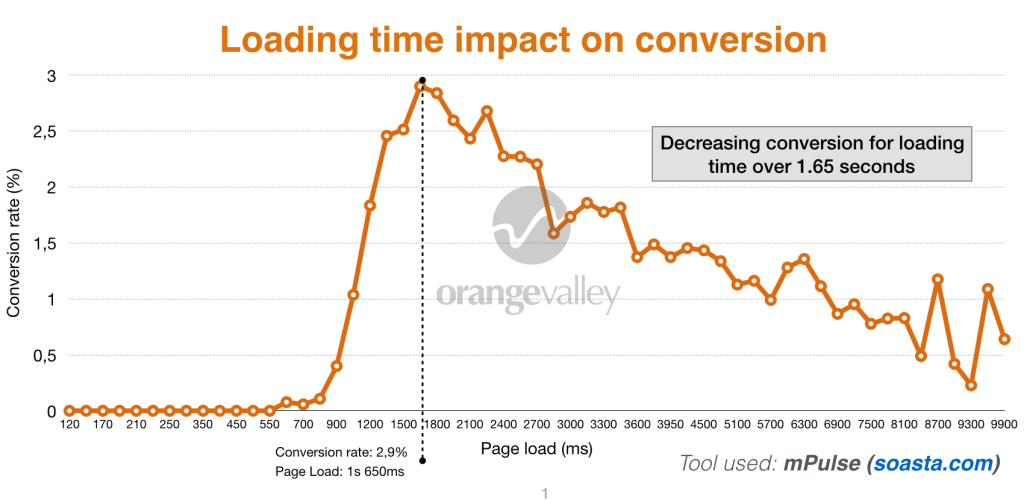

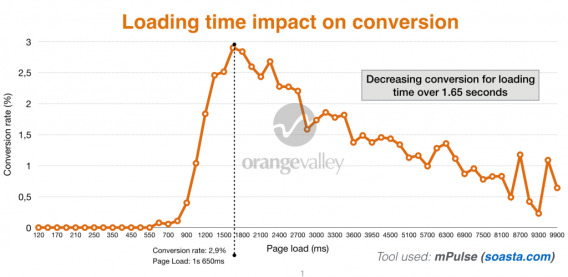

In the graph above you can see the relationship (green line) between load time and conversion rate for one of OrangeValley’s customers: one second slower meant 25% fewer conversions.
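To get a feel for what a relationship like this could mean when a testing tool adds load time, here is a rough TypeScript sketch. It assumes, purely for illustration, that the 25% drop is relative and scales linearly with each extra second; the function name and that linear model are hypothetical, and the figure comes from a single customer, so treat the result as a back-of-the-envelope estimate rather than a prediction.

```typescript
// Hypothetical back-of-the-envelope estimate. ASSUMES the observed
// "1 s slower -> 25% fewer conversions" is a relative drop that scales
// linearly with added delay. This is an illustration, not a fitted model.
function estimateConversionRate(
  baselineRate: number,        // e.g. 0.04 for a 4% conversion rate
  addedDelaySeconds: number,   // extra load time, e.g. added by a testing tool
  relativeDropPerSecond = 0.25 // taken from the single-customer graph
): number {
  // Clamp at zero so large delays cannot produce a negative rate.
  const factor = Math.max(0, 1 - relativeDropPerSecond * addedDelaySeconds);
  return baselineRate * factor;
}

// A 4% baseline and a tool that adds half a second:
console.log(estimateConversionRate(0.04, 0.5).toFixed(3)); // "0.035"
```

Even under this crude assumption, half a second of extra delay costs about one in eight conversions, which is why the rest of this study focuses on how much delay each tool adds.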

The relationship between web performance and conversion has been documented in many studies. Google is also pushing website owners to improve their site speed as part of its effort to improve the web experience.

2. How a Testing Tool Cost Us 12 Points On Google Page Speed

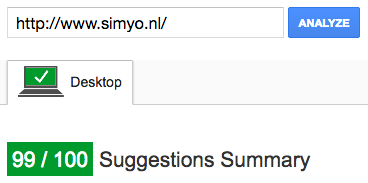

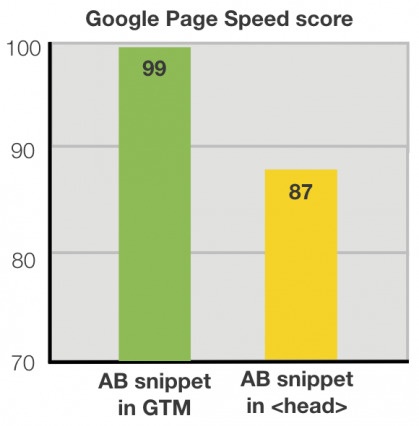

Simyo (a telecom company) had a 99/100 Google Page Speed score for its homepage. That was before implementing an A/B testing tool. After implementing the tool according to the vendor’s best practices, the score dropped 12 points.

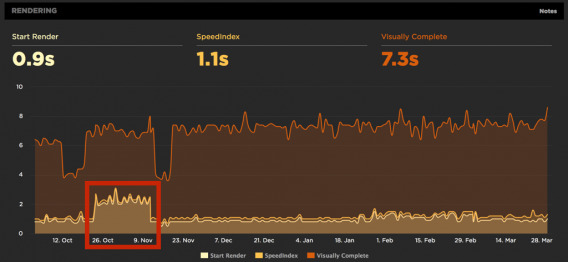

Here’s what happened to the start render time when we implemented an A/B testing tool using the vendor’s recommended implementation method.

Implementing the A/B testing tool simply made their website slower than those of their main competitors, which hurt their competitiveness in both SEO and conversion.

3. How Testing Tools Can Slow Down Your Site

Most A/B testing software creates an additional step in loading and rendering a web page.

Many rely on JavaScript that is executed in your visitor’s browser, known as a client-side script. The page loads, and then the script modifies the page’s content. It cannot do this until the elements it needs to modify have loaded, which causes a delay.

That is why you can sometimes see elements change on the page while it is still loading.
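The delay comes down to ordering: a client-side script can only change an element once the script has run and the element exists. The TypeScript sketch that follows is a deliberately simplified timing model of that. It ignores render blocking, network variance and layout, and assumes the script reacts instantly once the element appears; the interface and field names are hypothetical, and all times are milliseconds since navigation start.

```typescript
// Hypothetical, simplified page-load timeline (ms since navigation start).
interface LoadTimeline {
  originalPaint: number;    // original headline is first painted on screen
  elementAvailable: number; // headline element exists in the DOM
  scriptExecuted: number;   // testing tool's variation code has run
}

// The variation can only be applied after BOTH the element exists
// and the script has executed (assumes the script reacts instantly).
function variationAppliedAt(t: LoadTimeline): number {
  return Math.max(t.elementAvailable, t.scriptExecuted);
}

// How long the visitor sees the original before it is swapped (the flicker).
// Zero means the change happened before the original was ever painted.
function flickerMs(t: LoadTimeline): number {
  return Math.max(0, variationAppliedAt(t) - t.originalPaint);
}

// Script arrives late: the original is visible for 500 ms before changing.
const lateScript: LoadTimeline = { originalPaint: 900, elementAvailable: 850, scriptExecuted: 1400 };
console.log(variationAppliedAt(lateScript), flickerMs(lateScript)); // 1400 500
```

In this model, the only ways to shrink the flicker are to run the variation code sooner or to keep the original from being painted until the change has been made.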

As Brian Massey, Founder of Conversion Sciences, said:


“The flash draws attention to the element you’re testing, meaning that visitors who see your test will focus on that element. If the treatment wins, it will be rolled out to the site, eliminating the flash. The increase seen in the test can be completely erased. So, this is a pretty important issue.”

But that’s only part of the problem. Such scripts can also block the rendering of parts of your page, slowing it down even more.

Plenty has been written on this topic. Here’s a good article that goes into depth and a GrowthHackers discussion to follow.

Disclaimer and transparency

Changing an image and some text is part of many A/B tests, so it makes sense to use these simple changes as the basis of this study.

However, a tool’s performance will, of course, partly depend on the page you are testing and the type of experiment executed.

For research purposes, we needed to run the same experiment in every tool (to isolate that variable). Other experiments could lead to different results, so it couldn’t hurt to test this for yourself.

At OrangeValley, we choose the tools our clients use on a case-by-case basis. As a result, we mostly use Optimizely, SiteSpect (located in the same building as us) and VWO, though we sometimes use other tools too.

In late 2015, we were having issues with a client-side tool (the flicker effect). Optimizing the code and testing different implementations did not work, and a back-end tool was not an option. Since we did not have a plan C, we decided to find out which client-side A/B testing tool was best when it came to loading time. That is why we embarked on this study (and are sharing it with you!).

Vendor Responses

We also received responses and updates from some of the vendors after the study was conducted.

Omniconvert increased their number of servers and added a caching layer to serve variations (for A/B testing as well as personalization) faster. They also sped up their audience detection method, which helps apply experiments faster. By now they should also have expanded into several specific regions, allowing lower latency there.

VWO indicated that they prefer asynchronous implementation, to make sure downtime on their servers can never cause a customer’s website to hang.

They also pointed out that we set up our study in ‘clean’ accounts, which do not behave the same as fully operational accounts. With their technology, only the experiments relevant to a visitor are loaded, whereas some other vendors load all experiments regardless of which one needs to be applied for that visitor (Optimizely, for example, recommends archiving experiments that are no longer in use).

Given our ‘clean’ account setup, VWO could not benefit from this advantage in our study.
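To illustrate why a ‘clean’ account hides this difference, here is a TypeScript sketch contrasting two hypothetical loading strategies: sending every non-archived experiment to every page versus sending only running experiments whose targeting matches the current page. The `Experiment` shape, field names and byte sizes are invented for illustration and do not describe any vendor’s actual implementation.

```typescript
// Hypothetical experiment record; real vendor data models differ.
interface Experiment {
  id: string;
  status: "running" | "paused" | "archived";
  pathPattern: RegExp;  // pages the experiment targets
  payloadBytes: number; // size of its variation code
}

// Strategy A (hypothetical): every non-archived experiment ships on every page.
function loadAllUnarchived(experiments: Experiment[]): Experiment[] {
  return experiments.filter((e) => e.status !== "archived");
}

// Strategy B (hypothetical): only running experiments targeting this page ship.
function loadRelevant(experiments: Experiment[], path: string): Experiment[] {
  return experiments.filter((e) => e.status === "running" && e.pathPattern.test(path));
}

function totalBytes(experiments: Experiment[]): number {
  return experiments.reduce((sum, e) => sum + e.payloadBytes, 0);
}

// Made-up account with three experiments.
const account: Experiment[] = [
  { id: "home-hero", status: "running", pathPattern: /^\/$/, payloadBytes: 4000 },
  { id: "checkout-cta", status: "running", pathPattern: /^\/checkout/, payloadBytes: 6000 },
  { id: "old-banner", status: "paused", pathPattern: /.*/, payloadBytes: 9000 },
];

console.log(totalBytes(loadAllUnarchived(account))); // 19000
console.log(totalBytes(loadRelevant(account, "/"))); // 4000
```

With a single experiment, as in a ‘clean’ test account, both strategies ship the same bytes, so the gap only shows up once an account accumulates experiments.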

Lastly, and importantly, they informed me that any images uploaded to their service are stored in the U.S. and not distributed over a CDN. This means that VWO users outside the U.S. are probably better off storing images on their own servers. Although in this study we consciously chose to test the ‘out-of-the-box’ service each vendor provided, we acknowledge that this may have caused the background image to load more slowly than it would have from a regional server.

Editor’s note: AB Tasty didn’t agree with the results of the study, so they’ve been removed. The integrity of the results still stands, independent of individual vendors’ reactions.

Unfortunately, HP Optimost did not respond to our email sharing their ranking in this study. All other vendors we spoke to (SiteSpect, Maxymiser, Convert.com, Omniconvert) had no further remarks about the methodology of this study.

Methodology Summary

In this study, we compared 8 different A/B testing tools on the loading time experienced by website visitors. Our goal was to determine which alternative tool we should be looking at to minimize any negative effect.

Here’s the control:

And here’s the variation:

In the variation, we changed the title text and inserted a background image.

Here are the tools we studied:

Monetate and Adobe Test & Target are also well-known tools but were not included. We invite them to participate in any follow-up to this study.

Measuring performance

We used SpeedCurve and our private instance of Webpagetest.org to run loading tests over a full week. Both are widely used platforms among web performance experts.

All experiments were set up the same way:

- same hosting server (Amsterdam)

- same location and speed settings (15Mbps down, 3Mbps up, 28 ms latency)

- same variation

- implementation according to vendor’s knowledge base recommendations

- background image uploaded to the tool when this option was available

- 80+ test runs per tool over a week to reduce the effect of potential outliers (in hindsight, all tools showed stable measurements)

- the test page was a copy of a customer landing page with modified colors and text

- the time for the variation to show was measured for first views (the first impression a new user gets)
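Summarizing 80+ runs per tool comes down to a few statistics. The TypeScript sketch that follows computes the mean alongside nearest-rank percentiles, because a handful of slow runs can hide behind a good average; the function names are hypothetical and the sample data is made up. Note that with fewer than 100 runs, the nearest-rank 99th percentile is simply the slowest run.

```typescript
// Arithmetic mean of the samples (ms).
function mean(xs: number[]): number {
  return xs.reduce((a, b) => a + b, 0) / xs.length;
}

// Nearest-rank percentile: the smallest sample such that at least p% of
// all samples are less than or equal to it.
function percentile(xs: number[], p: number): number {
  if (xs.length === 0) throw new Error("no samples");
  const sorted = [...xs].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p * sorted.length) / 100)); // 1-based
  return sorted[rank - 1];
}

interface RunSummary {
  runs: number;
  mean: number;
  median: number;
  p95: number;
  p99: number;
}

// Summarize the repeated measurements of one tool.
function summarize(runsMs: number[]): RunSummary {
  return {
    runs: runsMs.length,
    mean: mean(runsMs),
    median: percentile(runsMs, 50),
    p95: percentile(runsMs, 95),
    p99: percentile(runsMs, 99),
  };
}

// Made-up data: 79 runs at 1000 ms and a single slow run at 5000 ms.
const runs = [...Array.from({ length: 79 }, () => 1000), 5000];
const s = summarize(runs);
// mean 1050, median 1000, p95 1000, p99 5000 (the one slow run)
console.log(s);
```

The mean moves by only 5% here, while the 99th percentile exposes the outlier, which is why it can be worth reporting both.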

How we measured experienced loading time in an A/B testing context

In this study we look at the loading time of elements above the fold, as this corresponds directly to experienced loading time. Total page load time is not taken into account. We adopted this approach because the web performance optimization (WPO) industry is increasingly focused on measuring what matters for user experience (critical elements showing up). People, after all, can start reading and form a first impression before the page has completely loaded.

What we really wanted to measure was when the elements relevant to visitors load, including any changes applied by the A/B testing tools, as this directly affects the loading time users experience.

Our challenge was that we needed to measure more than the loading of the original elements: first, when the elements loaded, and second, when the client-side script (used by 7 of the 8 vendors) changed those elements for the A/B test.

In some cases the final text was visible immediately; in others, the original text was shown first and then changed on-screen to the final text (the flicker effect).

In the end, we automated the analysis of the filmstrips to measure these on-screen changes as the user would see them. This way we could measure when the variation text “How FAST is …[A/B testing tool]?” and the background were applied to the page.
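The hard part of such an analysis is classifying each frame (deciding from pixels or text recognition which headline it shows), and that step is not shown here. The TypeScript sketch that follows assumes every filmstrip frame has already been labeled and only illustrates how the time to variant and the flicker can then be read off; the `Frame` type and its labels are hypothetical, not WebPageTest’s output format, and the background image could be tracked the same way with its own label.

```typescript
// Hypothetical label for what a filmstrip frame shows in the headline area,
// produced by an earlier image-comparison or text-recognition step (not shown).
type HeadlineState = "none" | "original" | "variant";

interface Frame {
  timeMs: number; // frame timestamp since navigation start
  headline: HeadlineState;
}

interface FilmstripResult {
  variantVisibleAtMs: number | null; // first frame showing the variant, if any
  flicker: boolean;                  // the original was shown before the variant
}

function analyzeFilmstrip(frames: Frame[]): FilmstripResult {
  const ordered = [...frames].sort((a, b) => a.timeMs - b.timeMs);
  let sawOriginal = false;
  for (const frame of ordered) {
    if (frame.headline === "original") sawOriginal = true;
    if (frame.headline === "variant") {
      return { variantVisibleAtMs: frame.timeMs, flicker: sawOriginal };
    }
  }
  return { variantVisibleAtMs: null, flicker: false }; // variant never appeared
}

// Made-up filmstrip: blank, then the original headline, then the variant.
const frames: Frame[] = [
  { timeMs: 0, headline: "none" },
  { timeMs: 500, headline: "none" },
  { timeMs: 900, headline: "original" },
  { timeMs: 1400, headline: "variant" },
];
console.log(analyzeFilmstrip(frames)); // { variantVisibleAtMs: 1400, flicker: true }
```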

Originally we intended to compare the tools on metrics such as Speed Index, start render and visually complete. However, when we looked at the render filmstrips and videos, it became clear that these metrics did not really represent what your site visitors would experience. In fact, one of the tools lets the page start rendering very early, but what the visitor ultimately sees in the browser turned out to appear much later.

SpeedCurve does have a great solution for measuring experienced loading time: custom metrics. Essentially, you add scripts to the elements on your page that are most relevant to users when they land on it: elements above the fold, such as your value proposition and important calls to action.

In this case we needed to measure the changing text and background that were part of this experiment. Although we could measure the original headline with custom metrics, doing the same with the variant headline turned out to be more challenging.
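One possible way to timestamp the variant, sketched here under assumptions, is to have the variation code itself record a User Timing mark right after it changes the headline. `performance.mark` and `performance.getEntriesByName` are standard browser APIs (also available in recent Node versions), and SpeedCurve can report User Timing marks as custom metrics. The helper name, the mark name and the idea of calling it from inside a vendor’s variation code are hypothetical; whether a given tool lets you run code at that point depends on the tool.

```typescript
// Hypothetical helper: record a User Timing mark once the variant headline
// has been applied, and return its timestamp (ms since the time origin).
function markVariantApplied(markName = "variant-headline-applied"): number {
  performance.mark(markName);
  const entries = performance.getEntriesByName(markName, "mark");
  // If the mark was recorded more than once, report the latest one.
  const last = entries[entries.length - 1];
  return last ? last.startTime : NaN;
}

// Hypothetical use inside a variation script running in the browser:
//   const h1 = document.querySelector("h1");
//   if (h1) {
//     h1.textContent = "How FAST is …[A/B testing tool]?";
//     markVariantApplied();
//   }
```

Placing the mark directly after the DOM change means it records when the change was applied in the DOM rather than when the script was downloaded; the actual paint can still happen slightly later.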

Results: Top 8 fastest A/B testing tools

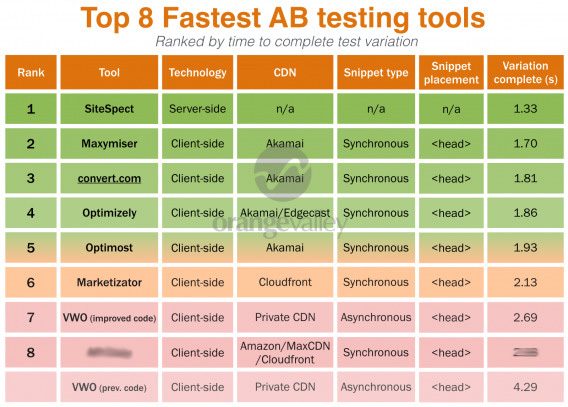

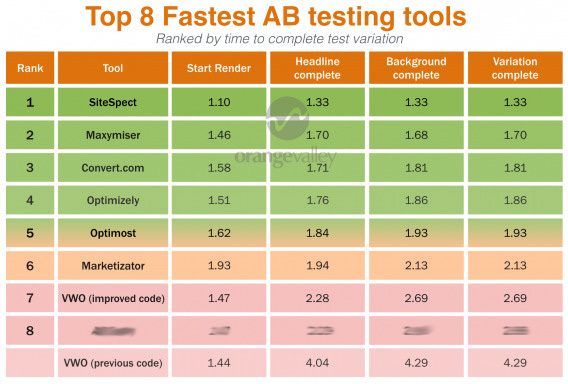

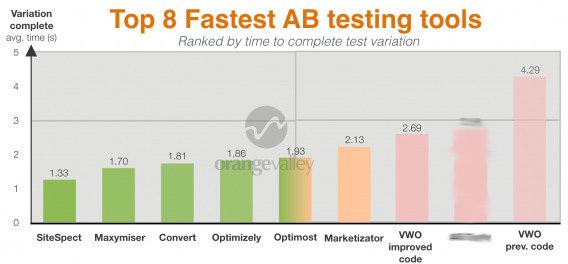

Here’s what we found:

And here’s a comparison of the tools in graph form…

Then here’s a video comparison of the tools’ loading times:

We hope the results we found were useful for you! Were you surprised by anything?

Conclusion: What to do with these results?

With clear performance differences between A/B testing tools, it’s wise to choose carefully. Lower performance can reduce both conversion and test reliability, and a drop in page speed won’t do your SEO position any good.

Don’t panic: as mentioned earlier, not all sites are the same. Perhaps your site is currently so slow that third-party tools make little extra difference. So make sure to gather data on your site with simple tools such as Google Page Speed and Webpagetest.org, and check that the tool you use performs well on your site, or try to improve your setup.

We hope this research is useful for you. Feel free to share or comment below with your own experiences.

Update: After publication, VWO contacted us once more. They informed us that the variation code was not optimal (read their post to learn more). We retested VWO and have now added the new, strongly improved results to the tables and graphs.

Awesome Peter, this is a very important study and benchmark for the industry and never researched before (in the zone of 1 to 4 seconds load-time – previous studies usually start at 3 seconds). Happy to see Convert.com ended up no. 3 with plans that start at hundreds not thousands of dollars a month. I’d say we are the no. 1 in the most affordable tools and proud to be there.

Thanks Dennis. And congrats on that!

Any reason Adobe Target was not included? Did they decline to participate?

Hi Melana,

Thank you for your question. Originally we set out to do this study to help us deal with a challenge we faced with one of our customers. We did contact Adobe, but we had different opinions on how to move forward with a testing-only setup and thus decided to move ahead without Target. Perhaps in a next round they will be part of the test.

Best, Peter

This is really important research to have out there, and I’m kinda surprised that VWO is so slow.

In my experience, I try to do as much testing from the server side as possible. It takes quite a bit more effort to implement, but the user experience is soooo much better.

Thanks for this!

Eric – We investigated and found that the variation created was sub-optimal. It was configured to wait for the entire page to load (which takes around 2-3 seconds). Here is our complete reply: https://vwo.com/blog/how-vwo-affects-site-speed/

Hey, Peter!

Thanks for taking the time to test all the tools!

It was one of those cold showers we eventually gained from: it pushed us to improve response time at Marketizator just one week after you ran the test.

One thing to take into account about our platform, though, is that our users actually gain speed if they also run surveys or web personalization: they make one single request instead of three requests to separate tools that each offer only A/B testing, surveys or personalization.

Cheers and we are ready & eager for the next round :)

Hi Valentin! My compliments for how Marketizator has approached this.

Thanks for your insight! We do clearly see a trend in vendors adding ‘traditionally out of market’ features or new vendors coming into the market with a combination of features. Perhaps web performance is one of the drivers too? ;)

Any reason for not testing the client-side (non-redirect) version of Google Analytics Content Experiments? I know it isn’t the default snippet they give, but we found it to be quite fast in comparison to services like VWO.

We also regularly split our traffic server-side for tests and then just set the Content Experiment variant (for example when testing a navigation layout on multiple pages). This lets us segment and measure using the Content Experiment in Analytics, but without the client-side costs.

Just curious if you’ve tried this since most sites are already taking the hit on Google Analytics.
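Splitting traffic on the server, as described in the comment above, usually relies on deterministic bucketing so that a returning visitor always gets the same variant without any client-side script deciding it. Here is a minimal TypeScript sketch of that idea using a 32-bit FNV-1a hash; the hash choice and function names are assumptions for illustration, not how Google Analytics Content Experiments or any vendor assigns variants.

```typescript
// 32-bit FNV-1a hash over UTF-16 code units (illustrative, not cryptographic).
function fnv1a32(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193); // FNV prime, 32-bit multiply
  }
  return hash >>> 0; // reinterpret as an unsigned 32-bit integer
}

// Hypothetical helper: assign a visitor to one of `variants`. The same
// visitor and experiment always map to the same variant, so the server can
// render it directly on every visit.
function assignVariant(visitorId: string, experimentId: string, variants: string[]): string {
  if (variants.length === 0) throw new Error("no variants");
  const hash = fnv1a32(`${experimentId}:${visitorId}`);
  // Scale by the high bits: FNV-1a's lowest bits mix poorly.
  const index = Math.floor((hash / 0x100000000) * variants.length);
  return variants[index];
}

const variant = assignVariant("visitor-42", "nav-layout", ["control", "variant"]);
console.log(variant);
```

Including the experiment ID in the hashed key keeps assignments independent across experiments, so being in the control group of one test says nothing about the next.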

We actually wanted to test Google Optimize in the 360 suite, but we were too early for that. Given their new developments, I actually wonder whether GA Content Experiments will be continued.

Thank you for this contribution on the tools we have to use. SEO is a very important part of your positioning in Google. SEO focuses on organic search results, i.e., those that are not paid.

I have a page offering guidance for your e-commerce. I hope it helps, and thanks for your great contribution.

Interesting post. I do miss Google Tag Manager as an A/B testing tool, which OrangeValley sometimes promotes. Perhaps in the next round-up?

About the flickering: I think you should hide all elements that are in the test until the changes have been made (or not), and then reveal them. That also shows you always need a good client-side coder to do good testing with client-side tools.

Sounds like a plan :-)

Yes, a good developer is definitely recommended. A tool, after all, is just a tool!

Hi Andre, this is actually the default setting in Convert Experiments: a time-out of 1.2 seconds, but as soon as the DOM elements have been changed, the “body hide” is released and the page is shown again.
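The hide-then-reveal approach discussed in these comments can be sketched in TypeScript as follows. This is not Convert’s actual snippet: `startAntiFlicker` is a hypothetical helper, and `hide`/`show` are injected callbacks so the timing logic stays independent of the DOM (in a browser they might, for example, set and clear `opacity` on `document.documentElement`). The 1.2-second default mirrors the time-out mentioned in the comment above.

```typescript
// Hypothetical anti-flicker helper: hide the page immediately, then reveal it
// as soon as the variation has been applied OR the timeout expires,
// whichever happens first. Returns the function to call when the variation
// code has finished.
function startAntiFlicker(
  hide: () => void,
  show: () => void,
  timeoutMs = 1200 // mirrors the 1.2 s time-out mentioned above
): () => void {
  let revealed = false;
  const reveal = (): void => {
    if (revealed) return; // reveal at most once
    revealed = true;
    clearTimeout(timer);  // the safety-net timer is no longer needed
    show();
  };
  hide();
  // Safety net: never keep the page hidden longer than timeoutMs, e.g. if the
  // testing tool's script fails to load.
  const timer = setTimeout(reveal, timeoutMs);
  return reveal;
}

// Usage sketch with stand-in callbacks:
let pageVisible = true;
const variationDone = startAntiFlicker(
  () => { pageVisible = false; },
  () => { pageVisible = true; }
);
// ...variation code changes the DOM here...
variationDone(); // page is shown again without waiting for the time-out
```

The trade-off is that visitors see nothing until the variation is applied or the time-out passes, so a slow tool turns flicker into a longer blank screen instead.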

Hi Peter,

Thx for your effort and sharing these results.

A remark about the test execution, concerning the snippet implementation: I think you should have implemented the synchronous version of the VWO snippet too, just like for the rest of the client-side tools. The comparison would then have been fairer, despite their general advice to implement the asynchronous version.

Selling online without keeping track of your e-commerce performance metrics is like driving with your eyes closed. Even if it’s just the most basic ones, you have to follow how you’re doing and compare progress over time.

We know it’s hard to decide what to monitor, especially if you’re at the beginning of your entrepreneurial journey. That’s why we compiled this short guide to the most important KPIs in e-commerce to start with.

Average Acquisition Cost

This metric measures how much it costs to acquire a customer. What’s tricky is that you pay for traffic, but not all of it converts to customers.

If you want to ever make a profit, you have to optimize your acquisition channels so you only pay for quality traffic and keep cost in check.

Sometimes, less traffic with higher conversion rate may prove more profitable than huge traffic that barely converts.

Analyze all acquisition channels you have – social media, ads, review sites, referrals, etc. to evaluate which ones really make the difference for your business. Spending your marketing budget in the right places means you’ll be paying only as much as you can afford for acquisition.

Make sure you know what that maximum cost is and never exceed it. Otherwise, you might actually be losing money in the effort to bring in new customers.

Also, remember to segment costs by location, because prices are different for Europe, North America and the rest of the world.


Great study, but not including Adobe Test and Target and Google Optimize is a serious mistake in my opinion, at least if the data presented here is to be of use to those of us comparing enterprise solutions.

My suggestion would be to update this article with those included, then this would truly be worth a bookmark.

Best,

Jacob

Hi Jacob, thanks for your comment. Having them included would be great. In another round of testing we’ll try to include Adobe again. When we did this study Google Optimize was announced but not available yet. Of course we’ll seek to include them next time.

Thanks for the study.

Short disclaimer: I work for Optimizely. I wanted to add a link to a post we did about testing and response time. It shows why one should focus not on average response time but on the slowest 1%. It also highlights how we reduced the slowest 1% of response times globally by 42% by introducing a balanced CDN architecture: https://blog.optimizely.com/2013/12/11/why-cdn-balancing/ I hope this is useful.

Hi Fabian, thanks for your comment. As noted in your post the advantage of an average is that it is easier to understand.

If you look at the linked graph below from your post, you’ll see that the average improved significantly as well, thanks to your optimization efforts.

https://blog.optimizely.com/wp-content/uploads/2013/12/CDN-response-time-single-v-balanced.png

That’s what we look at in this study which we communicated in advance with your colleagues at Optimizely and all other vendors.

I think it would be a good idea for the experiment to be re-run considering the responses from the a/b testing companies – some of which have written in-depth responses as to why this experiment doesn’t make sense.

https://vwo.com/blog/how-vwo-affects-site-speed/

It appears your setup wasn’t optimised for some of the particular tools in use. Does this not leave your test fundamentally flawed?

With such a big part of CRO being about mitigating bad experiments and understanding data, I’m surprised to see what looks like a poorly constructed experiment appear on CXL.

I am in touch with Peter, and he is doing a re-run of this study. According to him, the new results should be out in a day or two.

The blog post by VWO is about the setup for their tool in particular and not about the general methodology. As Sparsh noted I’m already working on a re-run for VWO to improve that part.

In fact, VWO was the only vendor with a legitimate concern about the research. Most vendors had no criticism and were just curious about the specifics.

That’s good to know guys!

Regardless of whether the other platforms complained or not, including bad data misrepresents/compromises any result – I think we can all agree on that. In this particular case, it could influence business decisions that are not truly valid.

Maybe in the future it would be worth consulting the platforms before publishing such an article.

I look forward to the updated results.

Thank you for your suggestion. That’s right, and that’s why we spoke to 3 people from VWO before publication. In the future, we’ll give vendors the opportunity to create the test themselves to further ensure all is okay.

The updated results are shared with XL, so they’ll be up fairly soon I think.

Sounds like a good plan Peter.

Peter,

I’d be interested to see the difference in load time when using these tools to redirect to a landing page. VWO and Optimizely are great tools, but some of our variants have to run as redirects, and that load time seems to be even slower, since the user sees the baseline for a brief second before reaching the other page.

Also, when looking into SiteSpect, we found that although it is a great product, we could not give them access to our servers. I would note that for some businesses, fraud prevention and third-party integrations outweigh load time. We generate at least a million leads a month and handle a lot of PII, so the script is more secure for us.

As for companies arguing that the test setup was flawed: all tests can be flawed in some way, but that doesn’t discredit the obvious results. Server-side technology is the clear winner when it comes to variant load time. I’ve used and tested more than a few of these products, and your results make sense to me. This test raises many more questions than load time differences. The setup and implementation of each product varies, and some are more intuitive than others.

Thanks for your hard work on this!

Hi Evette, you’re very welcome and thanks for your insights!

Testing redirects is a good idea, so we’ll look into including that aspect next time. Also, feel free to reach out and share the new questions that got raised beyond the topic of loading times.

Speed issues etc. remind me of some battles here at Xpenditure between marketing and development about the speed of our application :) We tried several tools but still prefer VWO even though it finishes last here in the test.

We did remove it from our app itself and only use it on our website, which makes it harder to set up a test and get all tracking in place (create custom segments in Analytics, set up Triggers and Vars in Tag Manager, etc.).

For application A/B tests we took a look at Fairtutor.com :) Doesn’t look like one of the fanciest tools, but it allows our developers to implement A/B tests directly in their native code without any speed issues.

Thanks for doing this research. Performance of tools is an issue many companies struggle with.

I have to agree with Fabian from Optimizely regarding his point about averages. I have seen some great averages with about 10% of loads being extremely slow, forcing us to time out the solution. It would be great if you could give us some insight into performance segments, not just averages. Optimizely in particular (sorry, Fabian) has performed less than optimally for some clients when looking past the averages.

I am missing a disclaimer. The writer of this article has a commercial interest in SiteSpect. SiteSpect HQ is based in the office of the agency he works for, and the director of SiteSpect is the co-founder of this agency. This should have been mentioned in the article. Nevertheless, an article worth reading.

Yes, fully agree – this study should be done by a company like TNO
