Just when you start to think that A/B testing is fairly straightforward, you run into a new strategic controversy.

This one is polarizing: how many variations should you test against the control?

Keep reading

The traditional (and most used) approach to analyzing A/B tests is to use a so-called t-test, which is a method used in frequentist statistics.

While this method is scientifically valid, it has a major drawback: if you only implement significant results, you will leave a lot of money on the table.
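For readers who want to see what such a test looks like in practice, here is a minimal sketch. It assumes each visitor is recorded as a 0/1 conversion and uses Welch's variant of the t-test with a large-sample normal approximation for the p-value. The function name and the visitor counts are illustrative, not taken from any real experiment.

```python
import math
from statistics import NormalDist


def welch_t_test(conv_a, n_a, conv_b, n_b):
    """Return (t, p_value) comparing two conversion rates.

    conv_a / conv_b are conversion counts, n_a / n_b are visitor counts.
    Assumes samples large enough that the t distribution is close to normal,
    and that at least one group has a rate strictly between 0 and 1.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Unbiased sample variance of a 0/1 outcome: p(1 - p) * n / (n - 1)
    var_a = p_a * (1 - p_a) * n_a / (n_a - 1)
    var_b = p_b * (1 - p_b) * n_b / (n_b - 1)
    # Welch's standard error does not assume equal variances
    se = math.sqrt(var_a / n_a + var_b / n_b)
    t = (p_b - p_a) / se
    # Two-sided p-value from the normal approximation
    p_value = 2 * (1 - NormalDist().cdf(abs(t)))
    return t, p_value


# Illustrative numbers: control converts 100/1000, variation 130/1000
t, p = welch_t_test(100, 1000, 130, 1000)
print(f"t = {t:.2f}, p = {p:.3f}")
```

A variation that falls just short of the usual 0.05 threshold would be discarded under a strict "significant results only" rule, which is the drawback the paragraph above points to.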

Keep reading

When internet users share private information, they want to feel safe doing so.

One of the most popular ways to convey security on a website is by using trust badges (also referred to as “trust logos” or “site seals”).

This study, conducted by CXL Institute, expands on Baymard Institute’s 2013 research to better understand the popularity and efficacy of various trust badges online.

Keep reading

Right now there is almost certainly an enterprise exec in a boardroom somewhere saying, “We need it to go viral.” Kittens and memes and babies kissing puppies… viral.

When most people think about going viral, they think about raising a lot of awareness for their product or company. But what about money in the bank? What does going viral mean for your bottom line?

So, the statement becomes: We need to go viral in a way that makes us actual money. Not surprisingly, that usually looks a little different than kittens and memes and babies kissing puppies.

Keep reading

If you’re doing it right, you probably have a large list of A/B testing ideas in your pipeline. Some are good ones (data-backed or the result of careful analysis), some are mediocre, and some you don’t know how to evaluate.

We can’t test everything at once, and we all have a limited amount of traffic.

You should have a way to prioritize all these ideas so that you test the highest-potential ones first. And the stupid stuff should never get tested to begin with.

How do we do that?

Keep reading

A Rejoiner study found that over 50% of the cart abandonment emails they send are opened on a device different from the one on which the customer originally abandoned the cart.

A typical situation is a person browsing your site on mobile, perhaps adding items to their cart as a “wishlist”, then never completing their purchase.

Wouldn’t it be great if you could email them with the exact items they’d left in their cart, and restore their cart with those items, no matter what device they use when they click through your email reminder?

That’s the beauty of cart regeneration, a feature that online retailers often overlook in their cart abandonment email strategy. It’s key to boosting online sales.
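One way to picture cart regeneration is a signed token embedded in the email link, so the cart can be rebuilt on whatever device opens it. The sketch below is only an illustration under that assumption. The names `SECRET_KEY`, `make_cart_token`, and `restore_cart` are hypothetical, and a real store might instead keep the cart server-side and send only an ID.

```python
import base64
import hashlib
import hmac
import json

# Hypothetical signing key; a real deployment would load this from secure config
SECRET_KEY = b"replace-with-a-real-secret"


def make_cart_token(cart_items):
    """Encode the cart contents and sign them so the link cannot be altered."""
    payload = base64.urlsafe_b64encode(json.dumps(cart_items).encode()).decode()
    signature = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}.{signature}"


def restore_cart(token):
    """Return the cart items if the signature checks out, otherwise None."""
    payload, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(signature, expected):
        return None
    return json.loads(base64.urlsafe_b64decode(payload.encode()))


# The token would be appended to the link in the abandonment email
token = make_cart_token([{"sku": "A1", "qty": 2}])
print(restore_cart(token))
```

Because the token carries the cart itself, the link works on a device that has never seen the original session.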

Keep reading

Everyone has heard a horror story about a website redesign. But there are still times when they are necessary or beneficial.

Deciding when the time is right is one important aspect of doing a redesign well. The other aspect lies in the process itself.

We can dramatically improve the performance of website redesign projects when we use a structured approach and start testing all our assumptions.

Keep reading

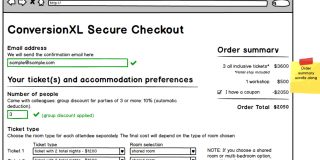

If you’re part of a conversion optimization team, a big part of your job is communicating treatments to other specialists on your team (analysts, designers).

Depending on the scope of the changes, you could use a few different tools and methods.

Almost always, though, this includes wireframing – and it helps to be able to do wireframing decently well.

Keep reading

A/B testing is common practice and it can be a powerful optimization strategy when it’s used properly. We’ve written on it extensively. Plus, the Internet is full of “How We Increased Conversions by 1,000% with 1 Simple Change” style articles.

Unfortunately, there are experimentation flaws associated with A/B testing as well. Understanding those flaws and their implications is key to designing better, smarter A/B test variations.

Keep reading

When designing the landing page for CXL Institute, we conducted an experiment regarding our explainer video.

We wanted to find out how “trustworthy” and “attractive” different voices were perceived to be. In this CXL Institute study, we tested four different voices, which differed by gender and by whether they belonged to professional voice actors.

The question is, did it make a difference in how people perceived our video content? Yes, and the results were somewhat surprising.

Keep reading

![Which Site Seals Create The Most Trust? [Original Research]](https://cxl.com/wp-content/uploads/2016/06/trust_seals-320x160.jpg)

![Which Type of Voice Actor Should You Use for Your Explainer Video? [Original Research]](https://cxl.com/wp-content/uploads/2016/04/Capture-320x160.jpg)