Most people stick to one end of the testing spectrum: iterative testing. Why? Probably because it’s the most talked about and the most publicized.

However, there’s another end of the spectrum worth exploring: innovative testing. Understanding one without the other is a disservice to yourself, your colleagues and your boss or clients.

A solid testing program includes the full spectrum.


Iterative testing vs. innovative testing: which works best?

Think of iterative testing and innovative testing as near-opposites sitting on different sides of the spectrum. On one side, you have the more scientific iterative testing; on the other, the more risk-driven innovative testing.

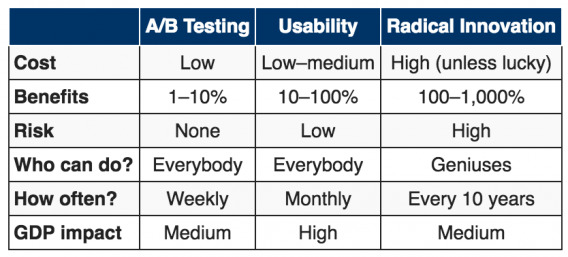

First, let’s go back to an article Jakob Nielsen of the Nielsen Norman Group wrote in 2012. In it, he defined three different approaches to better design: A/B testing, usability and radical innovation.

Note that when Jakob says “better design”, he means “more conversions / increased sales”.

Here’s how Jakob summarizes the three approaches…

It’s important to clarify a few definitions here so that we’re on the same page…

A/B testing splits live traffic into two (or more) parts: most users see the standard design (“A”), but a small percentage sees an alternative design (“B”).
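Testing tools implement this split in different ways. One common approach is to hash a stable visitor ID (often stored in a cookie) so that each visitor consistently sees the same design on every visit. The sketch below is a minimal illustration under that assumption; the function name `assign_variation` and the 10% default share for “B” are hypothetical, not any specific tool’s API.

```python
import hashlib


def assign_variation(visitor_id: str, experiment: str, b_share: float = 0.10) -> str:
    """Deterministically assign a visitor to design "A" or "B".

    Hypothetical helper for illustration: hashing the experiment name together
    with the visitor ID means the same ID always gets the same design, while
    separate experiments split traffic independently of each other.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Turn the first 8 hex digits (32 bits) into a number in [0, 1).
    position = int(digest[:8], 16) / 2**32
    # The first b_share of that range sees the alternative design.
    return "B" if position < b_share else "A"


if __name__ == "__main__":
    ids = [f"visitor-{i}" for i in range(100_000)]
    share_b = sum(assign_variation(v, "hero-image-test") == "B" for v in ids) / len(ids)
    print(f"Share of visitors seeing B: {share_b:.2%}")
```

Because assignment depends only on the ID, a visitor who deletes the cookie holding it is treated as a brand-new visitor and may land in the other variation.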

Usability refers to the full range of user-centered design (UCD) activities: user testing, field studies, parallel and iterative design, low-fidelity prototyping, competitive studies, and many other research methods.

Radical Design Innovation creates a completely new design that deviates from past designs rather than emerging from the hill-climbing methods used in more standard redesign projects.

So, what he’s really saying is that the three approaches to better design are A/B testing, usability research and radical redesign.

Jakob also points out that there’s no reason these three approaches have to be used separately…

Jakob Nielsen, Nielsen Norman Group:

“Because usability makes the most money on average, it’s the strategy that I recommend. (No surprise, if you’ve read my past articles.)

But, in truth, there’s no reason to limit yourself to a single strategy because the 3 approaches complement each other well.” (via NN/g)

When all three of these approaches are used simultaneously, it’s called innovative testing.

Iterative testing

So, then, what is iterative testing? It’s simply standard A/B testing, the first of the three approaches: the addition, modification or removal of one element at a time.

Examples:

- Changing the button color.

- Changing “Download Your eBook” to “Download My eBook”.

- Changing the hero image.

Paul Rouke, CEO of PRWD, explains when you should choose iterative testing in his Elite Camp 2015 presentation…

Paul Rouke, PRWD:

“Why choose iterative testing?

- Quick wins

- Start to learn

- Use your tool

- Limited resources

- Political business

- Build momentum

- Demonstrate ROI

- Develop a culture

You want to walk before you start running with testing.”

Innovative testing

On the other end of the spectrum is innovative testing: the addition, modification or removal of multiple elements at once.

Examples:

- Introducing a new feature or functionality.

- Changing the navigational structure of the site.

- Changing the entire above-the-fold value proposition.

Paul also touches on when you should choose innovative testing…

Paul Rouke, PRWD:

“Why choose innovative testing?

- Make significant increases

- Really shift user behaviour

- Quantifiable evidence for a major change

- Prior to back-end system changes

- Business proposition evaluation

- Reached local maximum

- Value proposition learning

- Simplify a stepped process

- Data-driven website redesign

You want to grow your business rather than just optimize your website performance.”

Why it’s risky

Since you’re changing multiple elements of the site at once, you won’t know if the increase or decrease is the result of change A or change B (or change C, D, E).

Marie Polli, a senior conversion strategist at CXL, explains it well…

Marie Polli, CXL:

“So, for example, if you test a new functionality on a page, you will never know if it fails, whether it was because people didn’t like the design you created for it or because they didn’t like the functionality. It’s a more bold change and it could bring a higher benefit, but it could also influence your revenue in a negative direction. So, you need to be careful.”

With iterative testing, you know that conversions increased X percent because you did exactly A. With innovative testing, you don’t have that luxury. It’s the price you have to pay.

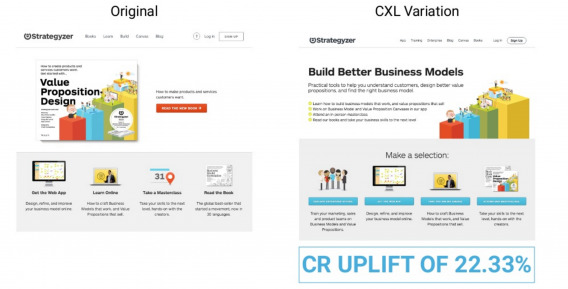

Case study: Strategyzer

At CXL Live, Marie shared a case study from Strategyzer, a CXL client. Here are the control and the variation…

You can’t tell from the image above, but the original had a slider that changed every 5 seconds. It was difficult to read, which made the value proposition confusing.

The four bold headlines were also clickable, but that was far from obvious, so hardly anyone clicked on them.

With multiple issues to fix, Marie and her team ran an innovative test. They made the value proposition clearer and made it obvious that visitors need to select one of the four headlines.

The result, as you can see, speaks for itself… a 22% increase.

Why you should care about innovative testing

You should care about innovative testing for three distinct reasons: it’ll help you identify bigger opportunities and wins, it’s more conservative than a radical redesign, and it’s just more exciting.

1. You’ll uncover bigger gains

With innovative testing, you can test new business ideas, new services, new offerings, etc. None of those fall under the category of iterative testing. There’s a big difference between testing copy / button color and testing a potential addition to your business model, for example.

Once you uncover those big gains via innovative testing, you can run with them and create a snowball effect via iterative testing.

To uncover opportunities that big in the first place, you have to take a bold risk. They won’t come from small, iterative tests alone.

2. It’s safer than radical redesign

A radical redesign (or radical innovation, as Jakob calls it) can be quite dangerous. In another article, CXL founder Peep Laja makes a great point…

When was the last time that Amazon did a radical redesign? Most companies change their site every 3 years or so, but Amazon’s current look isn’t much different from its look 5 years ago. All the changes are iterative. They’re improving their layout through testing. When they change something, they *know* it works – because they have (repeatedly) tested it. Yes, different – but not radically different. You can still recognize it.

When you implement a radical redesign, you risk running into a few different issues…

- Conversions stay the same or even decrease because some things get better while others get worse. The gains and losses often cancel each other out or, worse, the losses win and you end up with fewer conversions.

- Radical redesign development is time-consuming and labor-intensive. Typically, you’ll spend more time and resources on the redesign than intended.

- The radical redesign becomes an art project instead of a science experiment. That is, it’s eventually designed by committee as you stray from data and research during numerous design iterations.

As mentioned above, innovative testing means you won’t know whether an increase or decrease is the result of change A, B, C, D or E. Radical redesigns make this even more difficult, as Marie explains…

Marie Polli, CXL:

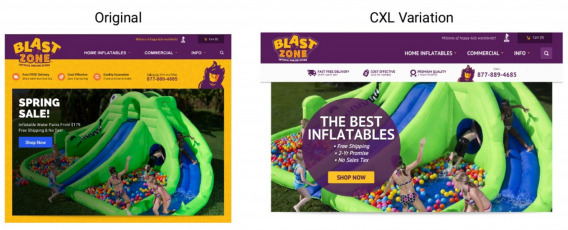

“A lot of clients come to us saying, ‘Hey, we want you to change our whole website. It’s crap. It’s not working, it’s not converting. We want you to change it and make it better.’ But you can change it also with innovative testing.

You can first improve the layout, which we did for one of our clients. It was a very messy layout, you have a lot of colors. And we made the benefits stand out, you have the ‘call us’ stand out over there. It’s much clearer… we did it site-wide. That brought along a 61% uplift… people could actually see the benefits, they weren’t lost anymore.

Then, the client also wanted to change the navigation and that brought along a 7% decrease. If we would have done it all together, then the 7% decrease would have been eaten up by the 61% uplift. So, you can do innovative testing in order to change your site radically.”
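A quick back-of-the-envelope calculation shows what Marie means. Assuming, as a simplification, that the two changes act independently and their effects on conversion rate multiply, a single combined test would have reported roughly:

```latex
\text{combined lift} = (1 + 0.61) \times (1 - 0.07) - 1 = 1.61 \times 0.93 - 1 \approx 0.497
```

That’s close to a 50% uplift: an obvious “win” that would have hidden the fact that the navigation change was costing about 11 percentage points of lift compared to the layout change alone.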

Think of an innovative test as a mini radical redesign. For example, instead of redesigning the layout and navigation in one big radical redesign, you start by redesigning the layout.

It’s not a complete overhaul or a complete departure from the control, so it’s not as time- or labor-intensive. It’s also driven by usability testing and qualitative research rather than committee votes and designer opinion.

Still, the changes within an innovative test can easily “cancel each other out”, resulting in no change in conversions. For example, some elements of your layout redesign might lift conversions while others drag them down.

That’s the risk you have to take, but at least it’s a smaller risk than developing a radical redesign.

3. It’s fun

Innovative testing is simply more fun. Would you rather spend your time reworking and experimenting with a navigation system or tweaking button copy? Both might be impactful, but one is certainly more exciting than the other.

Since innovative testing is more fun than iterative testing, it’s quite easy to get the whole team on board and thinking of hypotheses, betting on outcomes, etc.

You either learn something big or get a major win. With great risk comes great reward.

When to try innovative testing

Here’s the thing… innovative testing isn’t the right choice for everyone. There is a time and a place for both innovative and iterative testing. Knowing when it’s best to use each is clutch.

As a general rule, you should use innovative testing when an iteration is no longer enough, when you’ve tested your heart out or when you have a low-traffic site.

1. Iteration isn’t enough

When you don’t have the basics right, an iteration isn’t enough. For example, let’s say your value proposition is unclear. With iterative testing, you’d have to run separate tests on the headline, the hero image and so on. Or, you could run a single innovative test on the entire above-the-fold area.

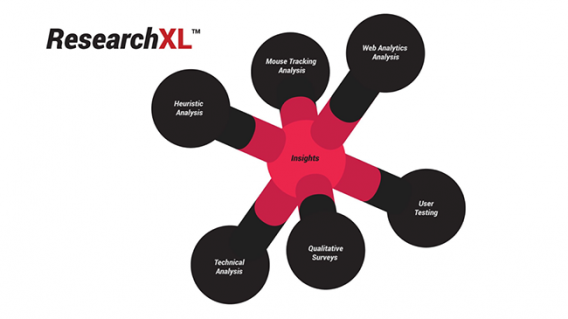

So, how do you know if you don’t have the basics right? We use the ResearchXL model…

To break that down a bit more…

- Heuristic Analysis: Identify “areas of interest” by checking key pages for relevancy, motivation and friction.

- Mouse Tracking Analysis: Analyze heat maps, click maps, scroll maps and user session video replays.

- Web Analytics Analysis: Conduct an analytics health check. Set up measurements for your KPIs and identify leaks in your funnel.

- User Testing: Identify usability and clarity issues, which are major sources of friction.

- Qualitative Surveys: Customer surveys, web traffic surveys, live chat logs and interviews can all be valuable sources of information.

- Technical Analysis: Conduct cross-browser testing, cross-device testing and speed analysis.

Moving through that model step-by-step will tell you whether you have your basics covered. If you don’t, there’s a good chance innovative testing is the smarter solution.

2. You’ve already done a lot of testing

If you’ve already done a lot of testing, your iterative testing results will inevitably start to slow down. That’s a sign you’re approaching your local maximum: the peak performance your current design can reach. As you get closer to it, you experience diminishing returns.

Claire Vo from Experiment Engine made an interesting comment about diminishing returns at CXL Live…

Claire Vo, Experiment Engine:

“Diminishing returns doesn’t exist. Diminishing effort does. You start to lose and decide to test elsewhere. If you continue with velocity, you can uncover wins you didn’t believe were there.”

Her talk was on high-velocity testing, and she argued that “diminishing returns” could be overcome through increased testing velocity. Essentially, Claire wants you to be sure you’ve gotten the absolute most out of your current design before moving on.

Marie suggests doing something similar when you start to experience diminishing returns…

Marie Polli, CXL:

“Innovative testing can help you get past that. It can help you bring your website to a new level. So, first you do an innovative test and then you start iterating it. You have something new on your website, it works better and then you’re making it even better. That will help you cross that point.”

So, once you’re sure you’ve hit that local maximum, run an innovative test and then continue with iterative testing at a high velocity.

Also, consider that when you’ve done a lot of testing, few “big wins” remain for your current design. So, you’re looking for small uplifts (1-5%), which are very hard to detect without an enormous amount of traffic. Use an A/B test sample size calculator to find out how much traffic you need to detect a given uplift.

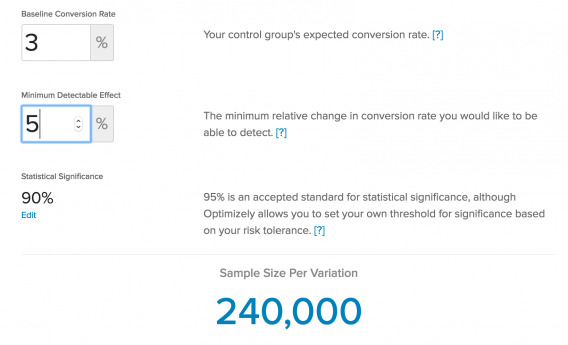

I used Optimizely’s sample size calculator to show you why…

To detect even a 5% change in the case above, you’ll need 240,000 people to see each variation. How long will it take for the page you’re testing to receive 480,000 visitors?

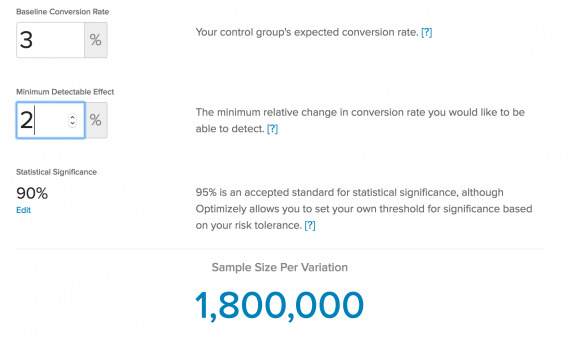

Now let’s look at a 2% change…

That’s 1,800,000 people per variation. Is your homepage, pricing page or whichever page you’re testing getting 3,600,000 visitors every month or so?

Clearly, you need a lot of traffic to detect those small uplifts. The smaller the uplift, the more traffic you need. Sure, maybe you get 480,000 visitors in two or three months, but that’s 8-12 weeks… a very long test.
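To see why traffic requirements explode as the uplift shrinks, here is a minimal sketch of the classic fixed-horizon sample size formula for comparing two conversion rates (two-sided test, 5% significance, 80% power). It won’t reproduce the screenshots’ figures exactly: the baseline conversion rate used there isn’t given in the text, and calculators differ in their statistical methods. The 3% baseline and 50,000 weekly visitors in the example are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variation(baseline: float, relative_mde: float,
                              alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors each variation needs to detect a relative uplift of relative_mde.

    baseline is the control's conversion rate (e.g. 0.03 for 3%).
    Uses the standard two-proportion z-test formula (fixed horizon, two-sided).
    """
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    spread = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
              + z_power * sqrt(p1 * (1 - p1) + p2 * (1 - p2)))
    return ceil(spread ** 2 / (p2 - p1) ** 2)


def weeks_to_finish(n_per_variation: int, variations: int, weekly_visitors: int) -> float:
    """How many weeks the tested page needs to collect the full sample."""
    return n_per_variation * variations / weekly_visitors


if __name__ == "__main__":
    baseline = 0.03            # hypothetical 3% conversion rate
    weekly_visitors = 50_000   # hypothetical traffic to the tested page
    for mde in (0.05, 0.02):
        n = sample_size_per_variation(baseline, mde)
        weeks = weeks_to_finish(n, 2, weekly_visitors)
        print(f"{mde:.0%} uplift: {n:,} visitors per variation, ~{weeks:.0f} weeks")
```

The key driver is the denominator: halving the uplift you want to detect roughly quadruples the traffic you need.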

Ton Wesseling of Testing.Agency explains why long tests can be a problem…

Ton Wesseling, Testing.Agency:

“Big impacts don’t happen too often. Most of the time, your variation is only slightly better, so you need a lot of data to be able to notice a significant winner.

But if you let your test run too long, people tend to delete their cookies… 10% in two weeks. When they return to your test, they can end up in the wrong variation. So, as the weeks pass, your sample becomes more and more polluted.”

Essentially, that pollution eats up the slight difference you were measuring in the first place.
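Ton’s “10% in two weeks” figure compounds as a test runs longer. The sketch below is a rough model, not a measurement: it assumes cookie deletion happens at a constant rate matching Ton’s figure, that a visitor with a fresh cookie is re-randomized, and that with a 50/50 split about half of those re-randomized visitors land in the other variation. The function names are hypothetical.

```python
def cleared_fraction(weeks: float, two_week_rate: float = 0.10) -> float:
    """Share of returning visitors who have deleted their cookies at least once.

    Assumes a constant deletion rate, calibrated so that two_week_rate
    of visitors delete cookies in any two-week window.
    """
    kept_per_two_weeks = 1 - two_week_rate
    return 1 - kept_per_two_weeks ** (weeks / 2)


def switched_fraction(weeks: float, two_week_rate: float = 0.10,
                      switch_probability: float = 0.5) -> float:
    """Share of returning visitors who may have been exposed to both variations.

    A visitor with a fresh cookie is re-randomized; in a 50/50 test, roughly
    half of them are assigned to the variation they did not see before.
    """
    return cleared_fraction(weeks, two_week_rate) * switch_probability


if __name__ == "__main__":
    for weeks in (2, 4, 8, 12):
        print(f"{weeks:>2} weeks: {cleared_fraction(weeks):.1%} cleared cookies, "
              f"~{switched_fraction(weeks):.1%} may have switched variation")
```

Under these assumptions, a 12-week test ends with nearly half of returning visitors having cleared their cookies at least once, which is the pollution Ton describes.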

3. You don’t have much traffic

If you really, really don’t have a lot of traffic, even 10-30% uplifts become difficult to detect. In fact, Peep wrote an article called Don’t Do A/B Testing, in which he warns optimizers with low traffic (and thus, few conversions) not to waste time A/B testing.

Roughly speaking, if you have less than 1000 transactions (purchases, signups, leads etc) per month – you’re gonna be better off putting your effort in other stuff.

A lot of microbusinesses, startups and small businesses just don’t have that transaction volume (yet).

You *might be* able to run A/B tests with just 500 transactions per month too (read: how many conversions do I need?), but you need bigger impacts per successful experiment to improve the validity of those tests.

Innovative tests can produce bigger uplifts (30% and over), and bigger uplifts need far less traffic to detect. That makes innovative testing more accessible for optimizers without a lot of traffic.
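The traffic argument can be flipped around: given the traffic you have, what is the smallest uplift you could reliably detect? Here is a minimal sketch, assuming a fixed-horizon two-sided test at 5% significance and 80% power, a 50/50 split, a month-long test and a hypothetical 2% baseline conversion rate; the helper names are illustrative. It uses bisection to find the uplift at which the required sample size matches the available traffic.

```python
from math import sqrt
from statistics import NormalDist


def required_n_per_variation(baseline: float, relative_uplift: float,
                             alpha: float = 0.05, power: float = 0.80) -> float:
    """Visitors per variation needed to detect relative_uplift over baseline."""
    p1 = baseline
    p2 = baseline * (1 + relative_uplift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    spread = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
              + z_power * sqrt(p1 * (1 - p1) + p2 * (1 - p2)))
    return spread ** 2 / (p2 - p1) ** 2


def minimum_detectable_uplift(baseline: float, n_per_variation: float,
                              alpha: float = 0.05, power: float = 0.80) -> float:
    """Smallest relative uplift detectable with n_per_variation visitors each."""
    low = 1e-6
    # Keep the variation's conversion rate below 100%.
    high = min(10.0, (1 - 1e-9) / baseline - 1)
    for _ in range(100):
        mid = (low + high) / 2
        if required_n_per_variation(baseline, mid, alpha, power) > n_per_variation:
            low = mid   # Too small to detect with this much traffic.
        else:
            high = mid  # Detectable; try a smaller uplift.
    return high


if __name__ == "__main__":
    baseline = 0.02  # hypothetical 2% conversion rate
    for monthly_transactions in (1000, 500):
        visitors = monthly_transactions / baseline
        mde = minimum_detectable_uplift(baseline, visitors / 2)
        print(f"{monthly_transactions} transactions/month: "
              f"smallest detectable uplift in a month ~{mde:.0%}")
```

Under these assumptions, 1,000 monthly transactions only let you detect uplifts of about 18% or more within a month, and 500 transactions push that above 25%, which is why bolder changes suit low-traffic sites.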

How to get started

Before you run an innovative test, be sure to ask yourself the following four questions…

- Is the test based on a data-driven hypothesis? You still need to do the research, you still need to be data-driven. Don’t pull an idea out of your head right now and call it innovative testing.

- Does the test design exactly match the hypothesis? An innovative test isn’t art. Don’t let designers and your other colleagues get carried away. Stay focused on the hypothesis.

- Has the test been set up correctly with all goals attached? Put in the work to ensure your test has been set up properly in your testing tool and your Google Analytics setup is flawless. If you skip this, you’ll miss out on segmentation opportunities later, which means fewer insights.

- Did you perform a proper quality assurance check? With innovative testing, multiple page elements have been changed, which means more things can break. That’s why it’s important to conduct a full and proper quality assurance (QA) check. The more valuable the page (e.g. checkout), the more important QA is.

Whatever you do, continue conducting qualitative research after the innovative test goes live. It’s the best way to spot issues and identify anything that has gone wrong. Compare the new data you collect with your pre-test data to see how the test affected your audience.

Conclusion

Mark Zuckerberg once said, “The biggest risk is not taking any risks. In a world that is changing really quickly, the only strategy that is guaranteed to fail is not taking risks.”

Should you take the risk of innovative testing every time? No, of course not. Innovative testing is not better than iterative testing (and vice versa); it’s all situational.

But you should be aware of the possibility (and open to it) in case you find yourself in a situation where it is required. For example…

- You’ve been through the ResearchXL model and realize you have very few of the basics covered… an iteration isn’t enough to fix the issue.

- You’ve done a lot of testing and hit your local maximum. All that’s left to gain from your current design are small lifts (1-5%).

- You don’t have a lot of traffic and thus, not a lot of conversions.