A good conversationalist knows that asking closed-ended questions is no way to make real friends.

Similarly, in marketing research, there are good survey questions, and there are bad ones.

There’s a lot of value in asking both open and closed questions in a survey. This article, however, dives into the intricacies of asking and acting upon open-ended questions in your research.

Open-ended vs. closed questions in survey design

A closed-ended question is one whose answers are limited to a fixed set of options. This could be “yes” or “no,” “true” or “false,” or a rating scale. What’s common to all closed-ended questions is a set limit on the answers you can receive.

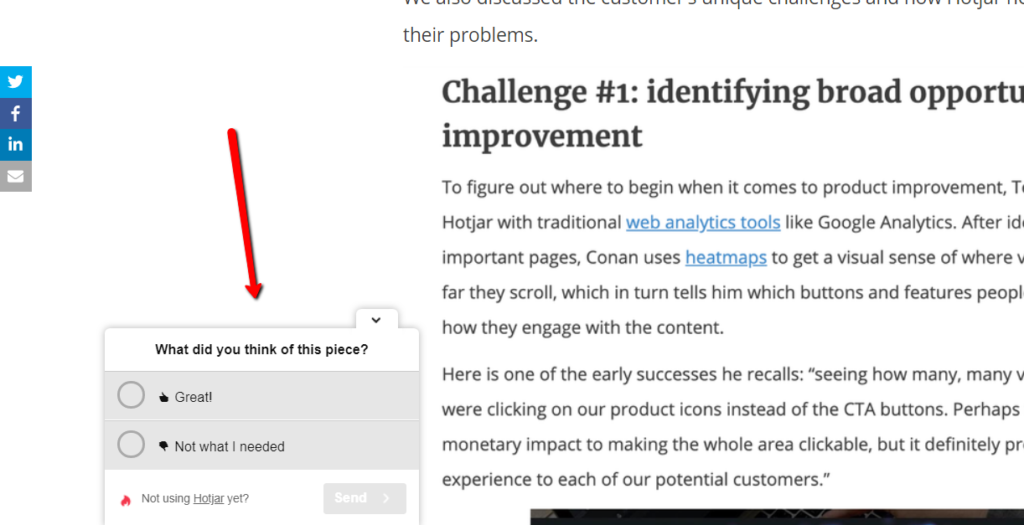

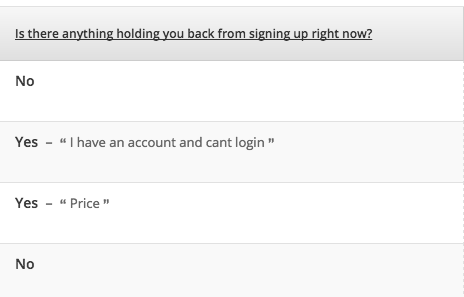

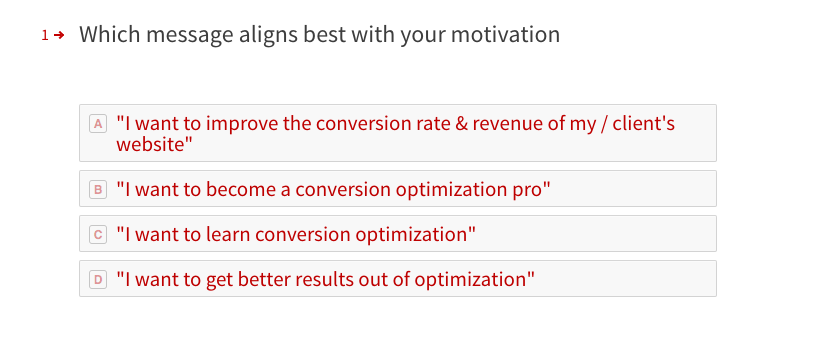

Here’s an example of a closed-ended question in the context of website research:

An open-ended question is the opposite of a closed-ended question: it’s designed to encourage full and elaborate responses that are entirely free of restraint. Open-ended questions are great for eliciting deeper connections, emotions, and insights you may not have thought of before designing the survey.

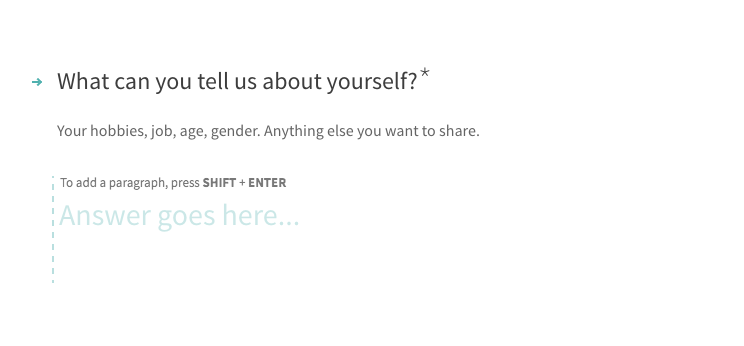

Here’s an example of an open-ended conversion research question:

While any survey question elicits attitudinal responses, closed-ended questions result in quantitative data; open-ended questions are inherently qualitative. You can codify and quantify them later, but in their raw form, they are qualitative.

It’s often the case that closed-ended and open-ended questions are used in conjunction. It’s easier to answer a Yes/No question, so if you lead with that, you’ll often get greater participation in the follow-up qualitative question (from what we’ve seen, anyway).

Open-ended questions provide greater insight and connection

It’s not just in survey design and market research that you can use the power of open-ended questions for greater insight.

If you’re doing customer interviews, these questions give you the most value. If you’re doing sales, open-ended questions lead to greater connection. In client meetings, open-ended questions facilitate greater communication and understanding.

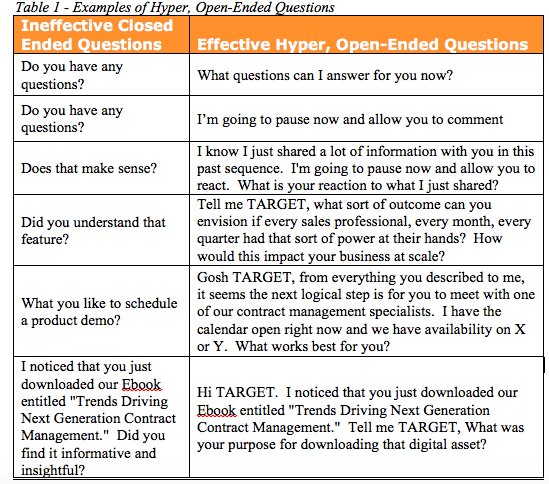

Sales Hacker put out an article about transforming boring closed-ended questions into what they call “hyper open-ended questions”:

While some are a bit cringeworthy on paper (“Gosh TARGET…”), I’m sure they spur a better conversation than closed-ended ones.

Open-ended questions in customer and client interviews

HubSpot wrote an article on open-ended questions and gave this short list of example questions to ask (specifically in regard to in-person client meetings):

- What are the top priorities in your business at the moment?

- What are some of the best decisions you’ve made related to ____________?

- How are you feeling about your current situation related to _____________?

- If we were meeting 5 (10, 20) years from today, what must happen for you to feel good about your situation related to ___________?

- What opportunities do you see on your horizon?

- What challenges do you see in making this happen?

- If we were to work together on this, what are the top two or three outcomes you’d like to see?

- How will you be measuring our success related to these outcomes?

- What’s the biggest risk for you to not make progress on this situation?

In regard to customer interviews, UserVoice gave these 7 examples, useful for any product manager:

- What do you think of this product?

- What is the one thing I should do to make things better for you?

- What should we stop doing?

- Can you give me an example?

- Why and Why Not? (always helpful for elaboration)

- What annoys you about this product the most?

- How does or doesn’t this product solve problem X for you?

These examples are specific to the situation/individual/problem, but they all have this in common: they’re designed to elicit meaty, valuable responses.

Those customer interview questions are useful for a product manager developing and validating a product with customers. Next, I’ll go over some specific use cases for user experience (UX), conversion rate optimization (CRO), and digital marketing.

Open-ended questions for conversion optimization research

We can learn a lot with surveys for UX and CRO. You can:

- Uncover UX issues.

- Locate process bottlenecks.

- Understand root causes of abandonment.

- Distinguish visitor segments whose different motivations for similar on-site activity go undetected in analytics.

- Identify demand for new products or improvements to existing products.

- Figure out who the customer is, feeding into accurate customer personas.

- Decipher intent. What are they trying to achieve? How can we help them do that?

- Find out how they shop (comparison to competitors, which benefits they seek, what words they use, etc.).

Similarly, user testing and other forms of qualitative research can be super valuable for optimization. And, of course, there’s a trick to how you derive insight from whichever qualitative method you’re using.

According to Susan Farrell from NN/g, open-ended questions are especially valuable in 1-on-1 usability testing where you’re exploring questions that may not have limited answers:

Susan Farrell:

“Closed-ended questions are often good for surveys, because you get higher response rates when users don’t have to type so much. Also, answers to closed-ended questions can easily be analyzed statistically, which is what you usually want to do with survey data.

However, in one-on-one usability testing, you want to get richer data than what’s provided from simple yes/no answers. If you test with five users, it’s not interesting to report that, say, 60% of users answered “yes” to a certain question. No statistical significance, whatsoever.

If you can get users to talk in depth about a question, however, you can absolutely derive valid information from five users. Not statistical insights, but qualitative insights.”
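To put a number on Farrell’s point about five users, here is a minimal Python sketch that computes a 95% Wilson score interval for “3 of 5 users said yes.” The choice of the Wilson interval and the function name `wilson_interval` are my own assumptions for illustration; the quote does not prescribe a method.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Return the Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Hypothetical usability test: 3 of 5 participants answered "yes".
low, high = wilson_interval(3, 5)
print(f"Observed 60%, plausible range: {low:.0%} to {high:.0%}")
```

Under these assumptions, the plausible range runs from roughly a quarter of users to almost nine in ten, which is why the “60%” tells you very little and the in-depth answers are where the value is.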

NN/g also gave a super handy list of when you should use open-ended questions. Here are some of the methods and situations where you would do that:

- In a screening questionnaire, when recruiting participants for a usability study (for example, “How often do you shop online?”)

- While conducting design research, such as on:

  - Which problems to solve;

  - What kind of solution to provide;

  - Who to design for.

- For exploratory studies, such as:

  - Qualitative usability testing;

  - RITE (paper prototype) design research;

  - Interviews and other field studies;

  - Diary studies;

  - Persona research;

  - Use-case research;

  - Task analysis.

- During the initial development of a closed-ended survey instrument: To derive the list of response categories for a closed-ended question, you can start by asking a corresponding open-ended question of a smaller number of people.

There’s well-founded skepticism about attitudinal data and observational research. When you can access and analyze large data sets, quantitative behavioral data usually provides greater insight than simply asking people what they think.

However, when planning preliminary tests or doing early-stage customer and product development, this type of research can help you lead and prioritize product features and experiments.

How we’ve used open-ended questions for conversion research

Both open-ended questions and closed-ended questions have been a big part of the design, development, and marketing process at CXL Institute.

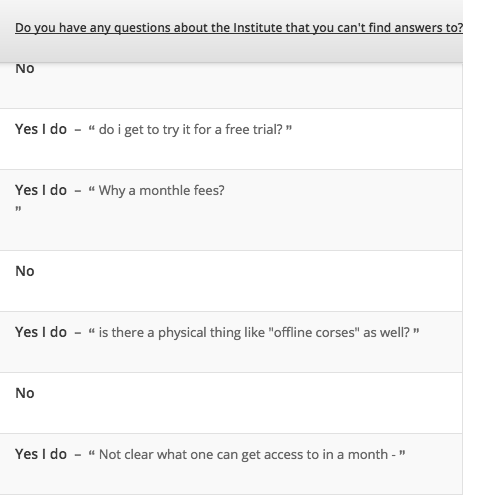

Here’s an example of an on-site poll we put on the Institute homepage. It asked a closed-ended question (“Do you have any questions?”) and then allowed the person to follow up with an open-ended response:

Here’s another example of a poll that asks a closed-ended question first and allows further elaboration on the second question:

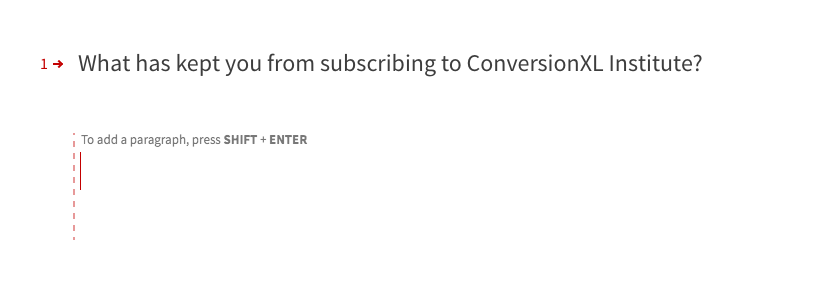

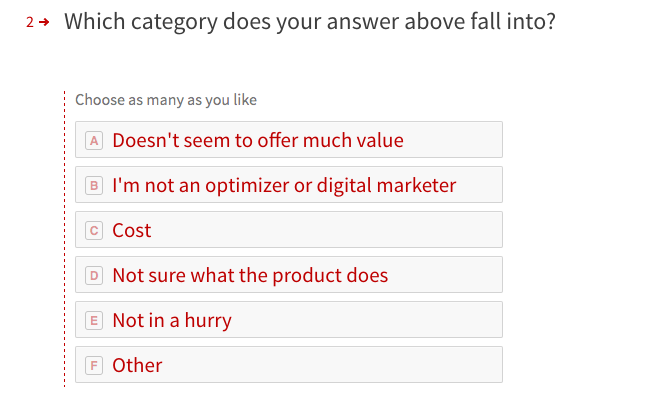

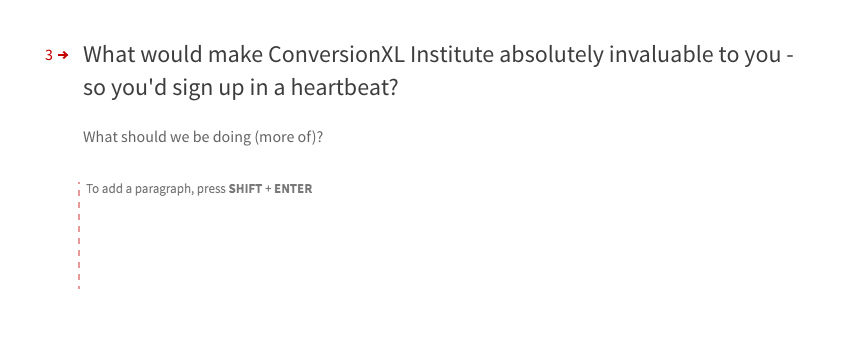

Similarly, we often run customer surveys to get more insights from our customers as well as those on our email list who haven’t purchased. Here’s an example of the latter:

We also run closed-ended questions to quantify traits that we’ve already discovered through open-ended research and exploration. We knew the following were the top motivations for the Institute (at the time), and we wanted to quantify the proportions and further analyze each segment:

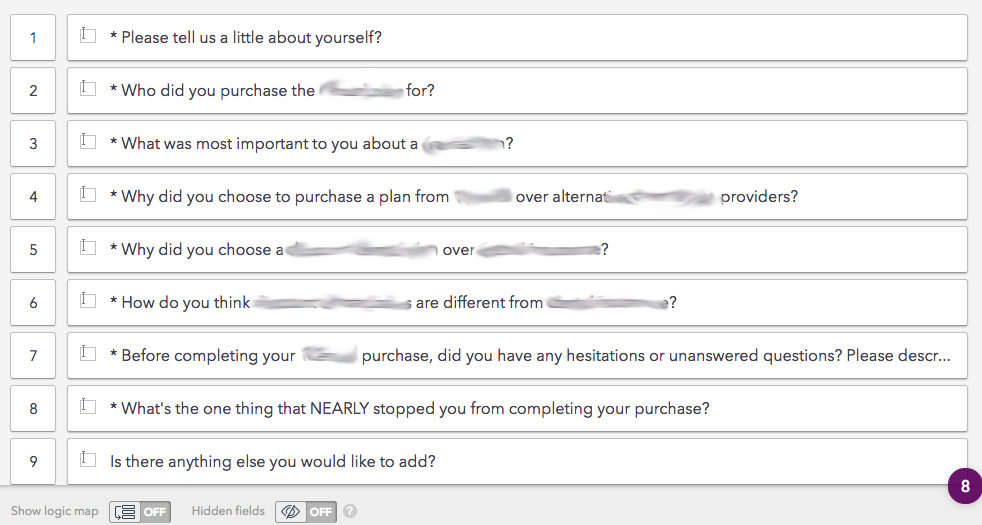

This is a big part of our conversion research when we start a client engagement as well. Here’s a sample survey we would send out to recent customers:

In general, a good strategy to use in survey design is to turn useless closed-ended questions into open-ended questions that trigger better responses. The key word here is “useless.”

If you plan on doing something with the quantitative data, such as segmenting your customers via Net Promoter Score (NPS), building user personas with scale-based questions, or tracking user satisfaction during different stages of feature development, closed-ended questions are the way to go.
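As one illustration of putting closed-ended data to work, here is a rough Python sketch of NPS segmentation. It uses the standard NPS cutoffs (9 to 10 promoters, 7 to 8 passives, 0 to 6 detractors); the function names and the sample scores are made up for the example and are not from our own surveys.

```python
from collections import Counter


def nps_segment(score: int) -> str:
    """Map a 0-10 'likelihood to recommend' rating to its NPS segment."""
    if not 0 <= score <= 10:
        raise ValueError(f"score must be between 0 and 10, got {score}")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def net_promoter_score(scores: list[int]) -> float:
    """NPS = percentage of promoters minus percentage of detractors."""
    if not scores:
        raise ValueError("need at least one score")
    counts = Counter(nps_segment(s) for s in scores)
    return 100 * (counts["promoter"] - counts["detractor"]) / len(scores)


# Made-up survey ratings, for illustration only.
sample_scores = [10, 9, 9, 8, 7, 6, 3, 10, 5, 9]
print(Counter(nps_segment(s) for s in sample_scores))
print(net_promoter_score(sample_scores))
```

Once each respondent has a segment, you can read the open-ended follow-up answers per segment instead of as one undifferentiated pile.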

But if you honestly ask yourself what you plan on doing with the data, and your answer is weak, attempt to tease out more qualitative insight via open-ended questions.

An easy way to do that, if you can’t fully reform the question, is simply to ask the person to elaborate.

“Did you find value in this process? If so, please explain further. If not, tell us how we can improve.”

Avinash Kaushik really summed it up well in this article:

Avinash Kaushik:

“Any good survey consists of most questions that respondents rate on a scale and sometimes a question or two that is open ended. This leads to a proportional amount of attention to be paid during analysis on computing Averages and Medians and Totals. The greatest nuggets of insights are in open ended questions because it is Voice of the Customer speaking directly to you (not cookies and shopper_ids but customers).

Questions such as: What task were you not able to complete today on our website? If you came to purchase but did not, why not?

Use the quantitative analysis to find pockets of “customer discontent”, but read the open ended responses to add color to the numbers. Remember your Director’s and VP’s can argue with numbers and brush them aside, but few can ignore the actual words of our customers. Deploy this weapon.”

More examples of questions to ask with on-site surveys

With a customer survey, you strategically architect your questions to reflect your specific situation. That’s true of on-site surveys too, but the questions that work in on-site surveys are generally more applicable to a broad suite of sites and companies because they deal with anonymous traffic.

Here are some questions you can steal for your research purposes:

- What’s the purpose of your visit today? (establishes user intent)

- Why are you here today? (also establishes user intent)

- Were you able to find the information you were looking for? (can identify missing information on the site; best asked on product pages)

- What made you not complete the purchase today? (identifies friction; only ask this as an exit survey on checkout pages and beware that some people are still considering the purchase.)

- Is there anything holding you back from completing a purchase? Yes/No (and then ask for an explanation; again, this identifies sources of friction)

- Do you have any questions you haven’t been able to find answers to? Yes/No (identifies sources of friction, missing information on the site)

- Were you able to complete your tasks on this website today? Yes/No, and, if “No,” “Why not?” (identifies friction and missing info)

Common mistakes with open-ended questions

The most common mistake with open-ended questions—and with research and analysis in general—is the inclusion of bias (or artifacts, if you want to use the academic term).

This is when the researcher unconsciously adds a personal opinion into the scientific process or when that opinion or expectation is unintentionally communicated to the research participants.

There’s been a lot written about bias, especially on this site, so I won’t write a book about it here. But it’s important to note that bias can inject itself at any stage of the process—into the questions you ask, how you code answers, or how you take action upon the “insights.”

For example, it’s common to analyze qualitative data by coding and clustering common responses. Suppose you’re looking at three or four separate answers that, at face value, appear to have a degree of commonality:

- “I liked the logo.”

- “The logo was cool.”

- “The logo made the site.”

- “The logo stood out.”

You could lump all of those into a category called “positive comments about the logo,” but just imagine the amount of bias that could inject itself into this categorization structure.
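One way to make that categorization less of a gut call is to write the codes down before you tally anything. The Python sketch below is only an illustration: the codebook, its phrase lists, and the `code_responses` helper are hypothetical, and simple phrase matching is a crude stand-in for a human coder. The point is that answers that don’t clearly fit a code stay visible instead of being silently folded into “positive.”

```python
from collections import defaultdict

# Hypothetical codebook: each code lists phrases that count as evidence for it.
CODEBOOK = {
    "logo_liked": ("liked the logo", "logo was cool"),
    "logo_noticed": ("stood out",),
}


def code_responses(responses, codebook):
    """Group raw answers by code; answers matching no code go to 'uncoded'."""
    coded = defaultdict(list)
    for text in responses:
        lowered = text.lower()
        matches = [
            code
            for code, phrases in codebook.items()
            if any(phrase in lowered for phrase in phrases)
        ]
        # Keep the original wording so a second person can review each call.
        for code in matches or ["uncoded"]:
            coded[code].append(text)
    return dict(coded)


responses = [
    "I liked the logo.",
    "The logo was cool.",
    "The logo made the site.",
    "The logo stood out.",
]
coded = code_responses(responses, CODEBOOK)
for code, answers in coded.items():
    print(code, len(answers), answers)
```

Here, “The logo stood out” is recorded as noticed rather than liked, and “The logo made the site” lands in the uncoded pile for a human to judge. Both are judgment calls, which is exactly where bias tends to creep in.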

Dr. Rob Balon:

“The most critical part of avoiding the blind spot is to recognize that even the most objective among us has one. Above all, you want to avoid the temptation to default to that bias-ridden comfort zone.

That’s the first step in conducting effective qualitative research.”

Other common mistakes are what you would expect:

- Analyzing your data incorrectly (treating qualitative like quantitative data when you shouldn’t);

- Surveying the wrong people (and getting accurate but unhelpful insights);

- Ignoring business and strategy objectives and blindly asking questions;

- Asking unhelpful questions.

Most of these can be solved with a bit of rigor and some strategic foresight.

Conclusion

Closed-ended and open-ended questions both have their place in research.

Closed-ended questions allow for a quantitative approach, which is especially useful for segmenting, persona building, and time-based analysis (i.e., are you improving?).

Open-ended questions dive deeper, teasing out the why behind the actions or closed-ended responses. They’re great for deeper insights, connection, and for learning things you may not have thought to ask.

When building surveys or conducting customer interviews, employ both strategically to get the best value from your research.
