Your new boss read that customer experience (CX) improvements can deliver billions in additional revenue. So HR hot-footed it onto LinkedIn and recruited you to make this a reality.

As if expectations like that weren’t enough, you might have heard that one in four CX employees are predicted to lose their jobs this year. If you can’t prove value, you don’t get a paycheck.

Have you bitten off more than you can chew?

Don’t panic just yet. Follow this 90-day plan to get the right things in place and start delivering results.


Why CX failure is all too common

People fail in CX roles for two main reasons:

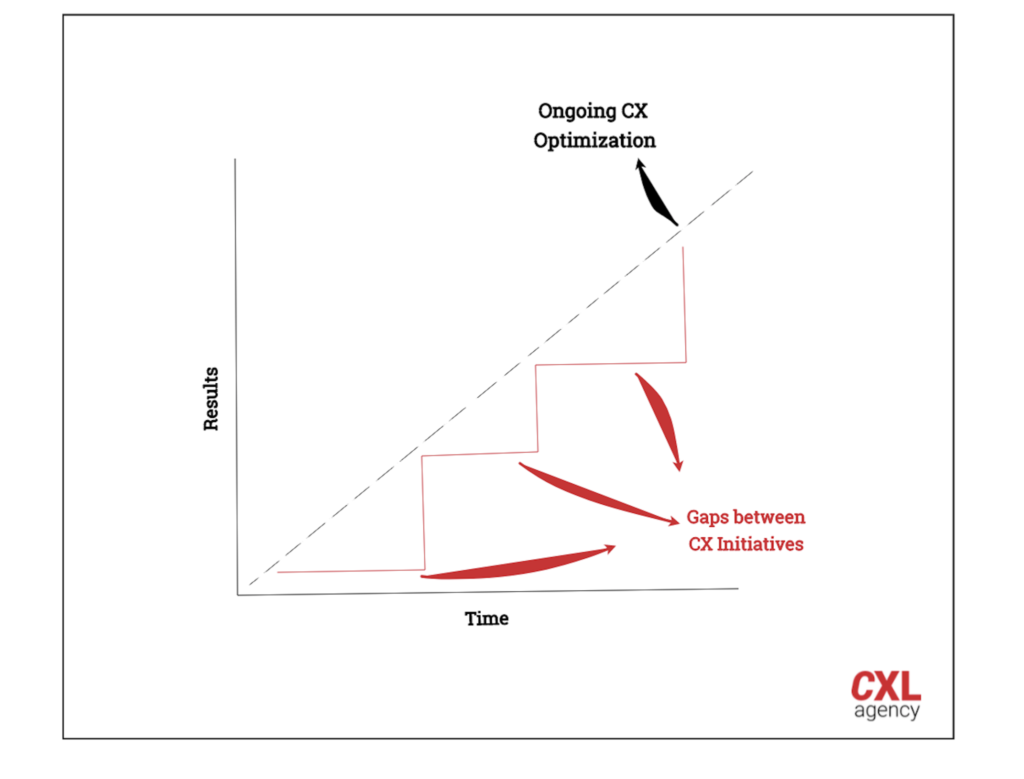

1. They plan on delivering big CX initiatives that take months to release, rather than ongoing CX improvements. Doing so misses out on the incremental revenue you could be generating, and the big initiatives that do make it to implementation have a lot riding on them.

2. They don’t measure the impact of their work in commercial terms. You do CX a disservice if you focus only on metrics like NPS. To succeed, you need to tie your improvements directly to revenue, reduced churn, lower operational costs, etc.

Goals to focus on in the first 90 days

Achieving a great customer experience that delights demanding customers (and delivers for demanding stakeholders) isn’t easy—even in the most forward-thinking organizations.

Your overall aims are four-fold:

- Get company-wide buy-in. From the CEO to the warehouse assistant, everyone understands the value of being customer-focused. Customers’ wants and needs are considered in every decision.

- Develop a multidisciplinary team. Access to a team with skills that include scientific experimentation, UX, copywriting, consumer psychology, statistics, data analysis, coding, research methodologies, change management, and design.

- Implement a process to continuously optimize your experience at scale. Operationalized research, experimentation, and learning cycles that generate commercial results.

- Gather reliable data and regular customer research to make decisions. Everyone uses data to inform decisions, rather than using it retrospectively to support decisions they’ve already made.

To get there, here’s what you should do in each 30-day increment of your first quarter on the job.

Days 0–30: Discovery

What you’ll do:

- Run a fresh-eyes review of the end-to-end customer journey.

- Meet people from all departments across the company.

- Kick off your first research sprint.

- Begin experimentation.

What you’ll achieve:

- Begin mapping out the customer journey and highlight areas of friction.

- Identify who’s a CX ally and who needs to be convinced.

- Validate the trustworthiness of your data and past insights.

- Get experiment results to share with the company.

Fresh-eyes review

Right now, you’re just like your new customers. You’re indifferent to the emotional labor the design team put into redesigning the homepage and unsympathetic to the rationale finance gives for sending buyers through a convoluted payment process.

So before you learn too much, do your own review of the end-to-end experience.

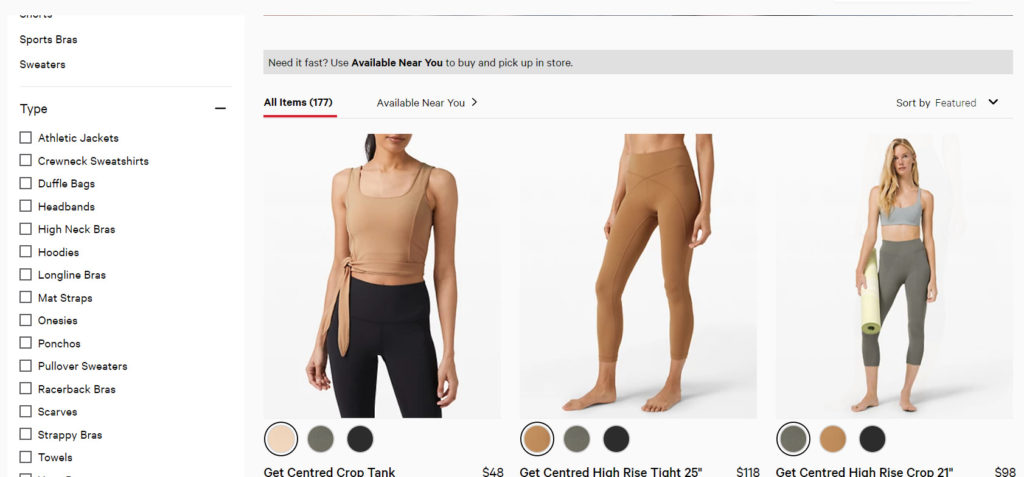

Give yourself a customer backstory. Let’s say you work for an ecommerce sportswear company. Your backstory might be that you’ve recently taken up yoga and you need to buy some yoga pants to wear to a class.

Start by researching your options on Google and follow the paths presented to you. When you move on to your own company’s website, explore all the options available (e.g., running searches, using filters, reading reviews, favoriting items) to help you choose which pants to buy.

Check out the social media links, sign up for the newsletter, and follow the checkout flow to make a purchase. As you move through the stages, record your thoughts, feelings, apprehensions, and any unanswered questions you have as a customer.

Record what happens next, too—the communications you receive after purchasing through to the delivery, packaging, and product itself. What happens if the yoga pants don’t fit? What’s the return experience like?

On one such walkthrough, I unearthed a big problem in a customer journey, one that no one else in the 200-plus-person business had realized. It was a car-buying service, and I visited a branch just as our customers would.

After going through the buying process, I noticed an email address for aftersales in tiny writing at the bottom of the receipt. I emailed it but got no response. Back at headquarters, I started to ask around: who owned this mailbox? Where did the replies go?

No one was monitoring it. I set up the mailbox and, to my horror, thousands of unanswered customer emails started downloading. Shortly after my discovery, the business was investigated by the Office of Fair Trading. All those customers who got no response had taken their questions and complaints elsewhere.

If I hadn’t walked through the journey as a customer, I would’ve missed this, too. If only someone had done it sooner, we could’ve resolved customer issues directly and avoided investigation.

Keep your findings for later (unless you unearth a major flaw, as I did). You’re not going to win any favors if, in your first week, you start ripping apart the ecommerce team’s baby. Wait until you have additional research to make a case for improvement.

Time to shadow

It’s time to meet the stakeholders—maybe not every single person, but definitely a few people from every team. During this stage, you want to meet the people doing the main activities in each function, not the managers.

Meet them at their desk or usual place of work. This will help you get a feel for what the company culture is really like. Are new ideas given only a cursory glance? Is it all about the HiPPO (the highest paid person’s opinion), or are decisions made based on data? Is feedback listened to?

Ask them for their ideas on what needs to improve. Dig deeper into the responses you get, following pain points, to understand their root causes.

If, for example, the retail team tells you customers are frustrated about the availability of specific products in certain stores, find out when this started. Digging further, you may discover that marketing is running an in-store campaign promoting these products, which increases demand.

When you speak to the buying team, it turns out they don’t have real-time stock data, so they didn’t even realize this was happening. Now you fully understand the problem and its causes.

Ask individuals to walk you through their daily tasks. This will differ based on what your company does, how your teams are structured, and their own processes. But, broadly speaking, the following act as crib notes for things you typically want to ask:

Sales team

- Show me how leads come into the sales team.

- Walk me through your process for outbound sales.

- What criteria do you use to score leads?

- What happens to unqualified leads?

- Can I sit in on customer demo calls/pitches/discovery calls? Record common objections or questions. What impression does the sales team give of the company?

- Can I see some of your email communications with leads? Is there consistency across the team? How frequently do we communicate with leads?

- Show me how you use the tools available to you.

- What happens to leads that are lost? What happens to leads that are won? How are new customers onboarded and handed over to other teams?

HR team

- Can you show me some recruitment ads? What message do they give about what’s important to our company?

- What criteria do you use to decide on a candidate’s suitability for a role? Do you include any soft or hard skills related to customer-centricity?

- Can I sit in on an interview? What questions do you ask? What impression of our company does the HR team give?

- Observe how candidates are communicated with (e.g., frequency, timing). Are questions answered or ignored? Are email responses sent from a specific person or a no-reply email address?

- Can you walk me through the interview stages? What tasks do you ask candidates to perform?

- Is there any feedback collected from prospective candidates? Check online at places like Glassdoor, too.

- Can I go through the onboarding experience with new hires?

- What feedback do you collect on staff satisfaction? Where is the company doing well and what are the problems?

- Can you share our company policy documents?

- Show me how you use the tools available to you (e.g., Are there any reward or recognition programs? Are there any education or training systems?).

Marketing team

- What’s the overall marketing strategy and plan?

- What campaigns and initiatives do you use at different stages of the customer lifecycle (e.g., onboarding email flows, customer loyalty programs, etc.)?

- What are the key marketing messages, brand values, and visual identity? What expectations do these give to customers?

- How do you evaluate performance and make decisions on future campaigns?

- What campaigns and messages have performed exceptionally well? Which have performed poorly?

- What tools do you use and what data do you collect on customers?

- Can you share any personas you have and show me how you segment customers?

- What is the average marketing journey for a customer?

Product team

- Can you share any personas you have and show me how you segment customers?

- Is the product or service personalized in any way?

- Can I join a product stand-up and ideation session?

- Can you walk me through the product roadmap?

- How are new ideas and features decided upon, prioritized, tested, and measured?

- What data do you hold about the product usage and customer pain points?

- What method of delivery do you work with (e.g., waterfall, agile, etc.)?

Customer-facing teams

Spend a day with delivery drivers, retail staff, and social media and customer support teams.

- Observe customer interactions. How are customers greeted? What’s the experience like? How do these experiences fit into the overall journey? What are the main friction points for customers?

- What workarounds do you have to perform to do your job? For example, a customer tries to return an online purchase at a retail outlet, but the point-of-sale system doesn’t recognize online orders. Staff have created a manual workaround, but it takes 10 minutes of asking the customer questions to process a refund.

- What processes are in place that cause issues? What do you wish you were allowed to do to deliver a better service?

- What tools do you use and what data is collected?

- What are the main complaints and compliments from customers?

- What collateral are you given to help you in your role (e.g., customer service scripts)?

Finance team

- What’s the process for customers being charged or invoiced?

- How do you process refunds? How long does it take? Do you speak directly to the customer?

- What payment issues do you face?

- What products or services are most profitable?

Tech/Engineering team

- What’s the roadmap for engineering (customer-facing initiatives and back office)?

- What method of delivery do you work with (e.g., waterfall, agile, etc.)?

- How are new ideas or projects decided upon, prioritized, tested, and measured?

- What’s the tool stack you use and what are its capabilities/limitations?

- What data do you have about customers?

- Has the data been validated as trustworthy?

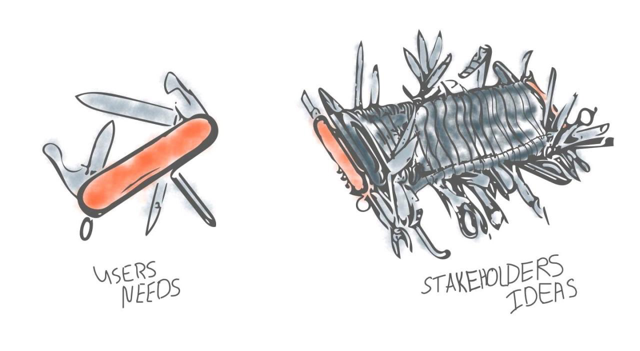

This process is likely going to trigger a few people who have a laundry list of ideas. Not all of them are things you should do, at least right away.

Try to sympathize with your colleagues’ struggles, but don’t promise any solutions or fixes yet. You need input from customers to prioritize what to do. Here’s why.

I made this mistake myself. After hearing my finance team’s tales of woe, I regrettably offered to help them fix a manual accounting process, which turned out to be a massive IT project that had little impact on the CX.

I shouldn’t have been involved, but every Monday I’d get an email asking for an update on progress. It can be difficult to say no when you innately want to improve things for people, but you need to prioritize what you work on—not just fix things for whoever shouts the loudest.

Meet the management team

It’s time to get together with heads of departments to get answers to more strategic questions. Take them for a coffee or plan a more structured meeting to answer the following:

People and skills

- What skill sets are available within your team? What’s missing?

- Is there any training or education around CX?

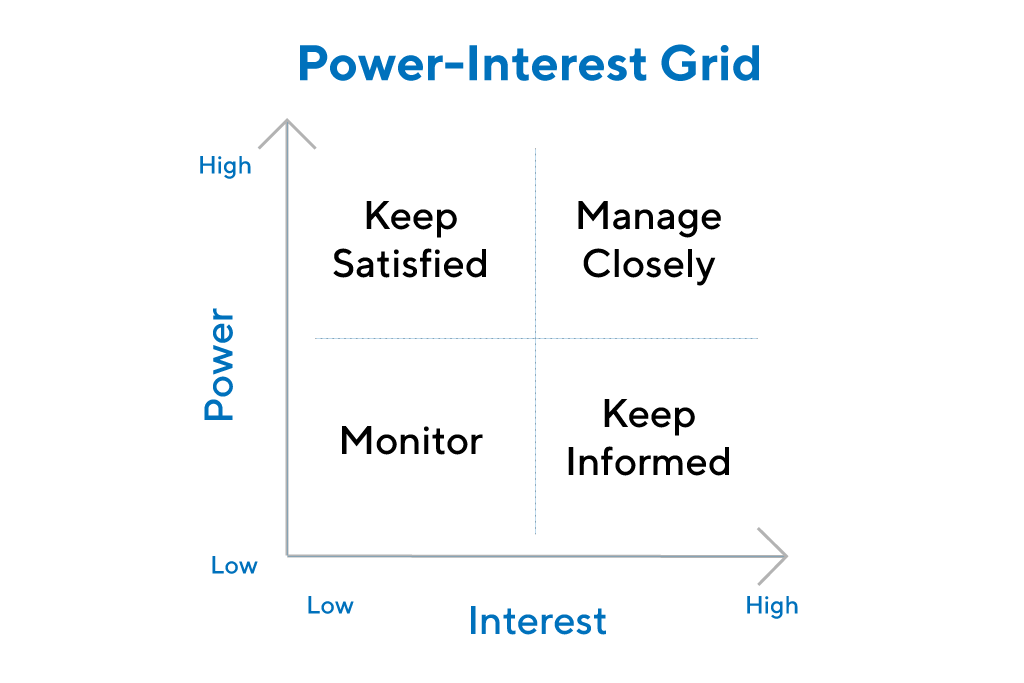

Try to identify your CX allies—and detractors you need to win over. If you want to get theoretical, map stakeholders onto a power-interest grid. (I tend to find my notes sufficient.)

Data and tools

- What tool stack does each department use? What do they need to offer a better experience?

- What data do they have to help build up a picture of your customers and their experience with the business?

- Is the data siloed in different teams/tools? How is the data accessed? Do you need SQL skills to pull reports, or are there dashboards?

- Are there any gaps in the journey where the company doesn’t have data?

- Is the data trustworthy?

- Is any ongoing customer research or qualitative feedback being recorded? (I tend to go hunting in Google Drive and project management tools to look for old research documents.)

Strategy and culture

- What’s each team’s strategy and roadmap?

- What KPIs are teams measured on? How are they rewarded?

- If it’s not already clear, you’ll need to know the overall company vision and expectations around customer experience (and what you’ll be measured on).

Process and methodology

- How is the customer experience continuously optimized? Is there an experimentation process in place to manage the risk of trying new ideas? What about quantifying uplifts for digital elements of the customer journey?

- What processes are there for developing hypotheses from research and data?

- How do teams prioritize what to do?

- How are customer learnings and insights shared with the wider business?

Once you’ve done the rounds, write up your findings, and identify gaps between where the business is and where it needs to be to:

- Deliver on goals;

- Get company-wide buy-in;

- Develop a multidisciplinary team;

- Implement a process to continuously optimize your experience at scale;

- Get reliable data and regular customer research to make decisions.

This write-up makes a great benchmark document for showing improvements since you started.

Kick off your first research sprint

I call it a sprint because, like a development sprint, it should repeat continuously rather than be a once-a-quarter thing. From your initial investigations, you should already have an idea of the data you have in the business.

Begin by setting up any additional data points you need to collect. Think about what you need to complete your analysis of the customer journey and to create customer personas.

Check that you have accurate data

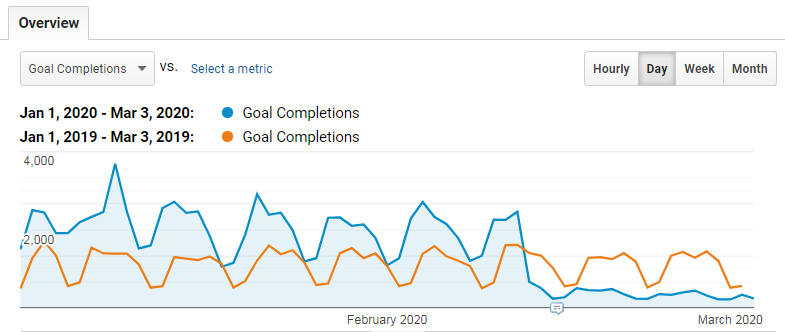

Before you can identify data discrepancies, ensure you’re comparing apples to apples. What does Google Analytics consider a sale? Does that map to a sale in the backend/accounting/CRM system?

Make sure you’re comparing the same timeframes, too. Usually, a timeframe ending about one month ago works well: it’s recent enough that the tracking setup is unlikely to have changed, and far enough back to include data that requires a manual push (but check this against your own internal business processes).
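To make the apples-to-apples check concrete, here is a minimal Python sketch that compares daily sale counts from two systems over the same timeframe and flags days where they disagree by more than a tolerance. The `reconcile` function, the 5% tolerance, and the numbers are all hypothetical; in practice you’d export the counts from Google Analytics and from your backend or CRM after confirming both define a "sale" the same way.

```python
from datetime import date

def reconcile(analytics, backend, tolerance=0.05):
    """Return days where the two sources differ by more than `tolerance`.

    Both inputs map a date to a count of sales over the SAME timeframe.
    The relative gap is measured against the backend, treated here as the
    system of record (an assumption; use whichever source you trust).
    """
    flagged = {}
    for day in sorted(set(analytics) | set(backend)):
        a = analytics.get(day, 0)
        b = backend.get(day, 0)
        if b == 0:
            gap = 0.0 if a == 0 else 1.0  # sales recorded in one source only
        else:
            gap = abs(a - b) / b
        if gap > tolerance:
            flagged[day] = (a, b, round(gap, 3))
    return flagged

# Hypothetical daily sale counts exported from each system.
analytics = {date(2024, 5, 1): 118, date(2024, 5, 2): 95, date(2024, 5, 3): 130}
backend = {date(2024, 5, 1): 120, date(2024, 5, 2): 121, date(2024, 5, 3): 131}

for day, (a, b, gap) in reconcile(analytics, backend).items():
    print(f"{day}: analytics={a} backend={b} gap={gap:.1%}")
```

A flagged day is a prompt to investigate (a tracking outage, a tag firing twice, refunds counted differently), not proof that either source is wrong.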

Once you’re confident in the data that already exists, begin mining it to sketch out the main “happy path” of the customer journey, highlighting the touchpoints customers engage with and any areas of friction or drop off.
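Once the data checks out, sketching the happy path can start as simple arithmetic on how many users reach each touchpoint. The sketch below assumes you already have those counts (the figures are invented for a hypothetical sportswear store) and reports step-to-step continuation and drop-off, returning the step where the largest share of users is lost.

```python
def funnel_report(steps):
    """Print step-to-step conversion and drop-off for an ordered funnel.

    `steps` is a list of (step_name, users_reaching_step) tuples, ordered
    from the first touchpoint to the last. Returns the name of the step
    preceded by the largest relative drop-off.
    """
    worst_step, worst_drop = None, -1.0
    for (prev_name, prev_n), (name, n) in zip(steps, steps[1:]):
        rate = n / prev_n if prev_n else 0.0
        drop = 1 - rate
        print(f"{prev_name} -> {name}: {rate:.1%} continue, {drop:.1%} drop off")
        if drop > worst_drop:
            worst_step, worst_drop = name, drop
    return worst_step

# Hypothetical weekly counts along the happy path.
happy_path = [
    ("Product listing", 10_000),
    ("Product page", 6_200),
    ("Add to cart", 1_900),
    ("Checkout", 1_100),
    ("Purchase", 640),
]
print("Largest drop-off on the way to:", funnel_report(happy_path))
```

The step this flags is a candidate for your next research sprint, not a diagnosis: the numbers tell you where customers leave, and research tells you why.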

Sprint planning

Plan your next couple of focused research sprints based on areas of friction. For example, a research sprint on the checkout might result in tracking and collecting new analytics data, heat maps, and customer exit surveys—data from which you can build a picture as to why customers drop off at this point.

Go beyond your happy customers. The “aware but yet to buy” as well as recently churned segments can offer up a ton of insights.

Pick your research methods depending on what you need to discover. You could use quick-and-dirty guerrilla research methods (e.g., polling customers at a store) or more structured methods:

- Copy testing;

- Usability research;

- Mouse tracking and heat map analysis;

- Card sorting and tree testing;

- Surveys;

- Interviews;

- Moderated research sessions;

- Social listening.

Experimentation launch

Aim to start experimenting in your first 30 days. If you’ve just implemented a new testing tool, your first test should be an A/A test to check that everything is working correctly.
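An A/A test shows both groups the same experience, so you’d expect the traffic split to match what you configured and no meaningful difference in conversion. The Python sketch below runs two standard checks on hypothetical A/A numbers: a chi-square test for sample ratio mismatch (assuming a 50/50 split) and a pooled two-proportion z-test. The function names are illustrative and not part of any testing tool’s API.

```python
import math

def srm_p_value(visitors_a, visitors_b):
    """Chi-square test (1 degree of freedom) that traffic was split 50/50.

    A tiny p-value suggests a sample ratio mismatch: the tool isn't
    splitting traffic the way it's configured to.
    """
    total = visitors_a + visitors_b
    expected = total / 2
    chi2 = ((visitors_a - expected) ** 2 + (visitors_b - expected) ** 2) / expected
    # For 1 degree of freedom, P(X > chi2) = erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided pooled z-test for a difference in conversion rates."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (conv_a / n_a - conv_b / n_b) / se
    # Two-sided p-value from the standard normal: 2 * (1 - Phi(|z|)).
    return math.erfc(abs(z) / math.sqrt(2))

# Hypothetical A/A results: both arms saw the identical experience.
n_a, n_b = 10_120, 9_880
conv_a, conv_b = 310, 296

print(f"SRM p-value: {srm_p_value(n_a, n_b):.3f}")
print(f"Conversion difference p-value: {two_proportion_p_value(conv_a, n_a, conv_b, n_b):.3f}")
```

A very small sample-ratio p-value, or repeated "significant" differences across A/A runs, points to a setup problem worth fixing before you trust A/B results. Bear in mind that at a 5% significance level, about one A/A test in twenty will show a significant difference by chance alone.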

If you already have a working A/B testing tool, you can start A/B testing. While it’s nice to get results early on, the real purpose of this very first test is to get the machinery started and iron out any kinks in your process, especially where it differs from how the team worked previously.

Pick something simple to test. Simple to test means:

- Something that doesn’t require any special targeting or audience segmentation.

- Something that doesn’t require additional dev or design work but can be created in the A/B testing tool.

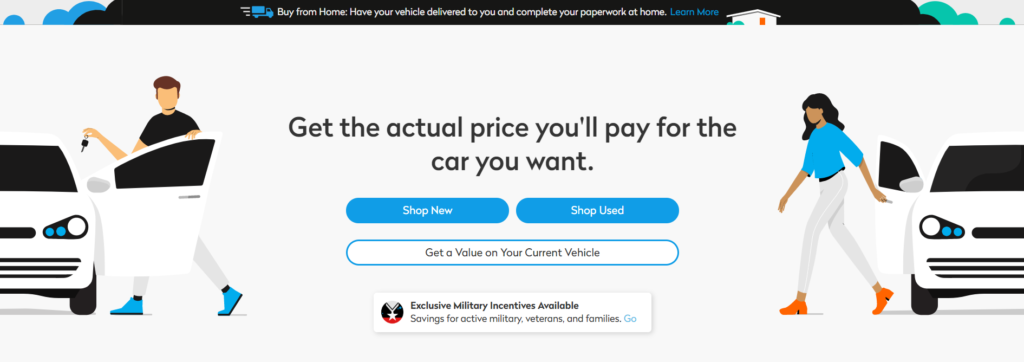

Value proposition copy tests are a good candidate here.

Days 31–60: Planning

What you’ll do:

- Create a CX strategy.

- Create personas.

- Prioritize ideas to test.

- Run ideation sessions.

- Continue experimenting.

- Conduct a research sprint.

- Build your CX Roadmap.

What you’ll achieve:

- Identify the skills needed to build out your team.

- Implement working practices for CX and experimentation.

- Share what you’ve planned and why with the wider business.

- Share customer research insights and experiment results internally.

Create a CX strategy

The early days are not for sitting around, thinking up ideas of what might work, and creating a massive document setting out three years’ worth of CX initiatives.

You should still set out the hires you need to make and any operational team changes (e.g., cross-functional teams) or working practices (e.g., agile vs. waterfall) you plan to implement, alongside how you’ll educate and increase the focus on CX throughout the business.

Your strategy to improve CX will be the same no matter where you are because it’s a tried-and-tested cycle: conduct research > create hypothesis > validate ideas > share learnings. At this point, you can’t say which changes you’ll implement because that depends on how they perform in tests.

Here’s why.

Say you identify that customers really hate how long delivery takes. So, it seems like a no-brainer to tackle this, and you pop it into your strategy. Three months later, it’s time to work with your operations team to create new processes and systems to allow you to offer same-day delivery instead of seven days.

There will likely be a few months of planning and scoping, setting up RFPs for new suppliers, integrations, and testing of new software. So, all in all, maybe 6–12 months before you get the new delivery option into the hands of your customers.

Only then might you discover that it doesn’t impact conversion rates. Customers don’t want to pay for your new delivery option and stick to the free seven-day alternative. You committed to a strategy without first validating the idea.

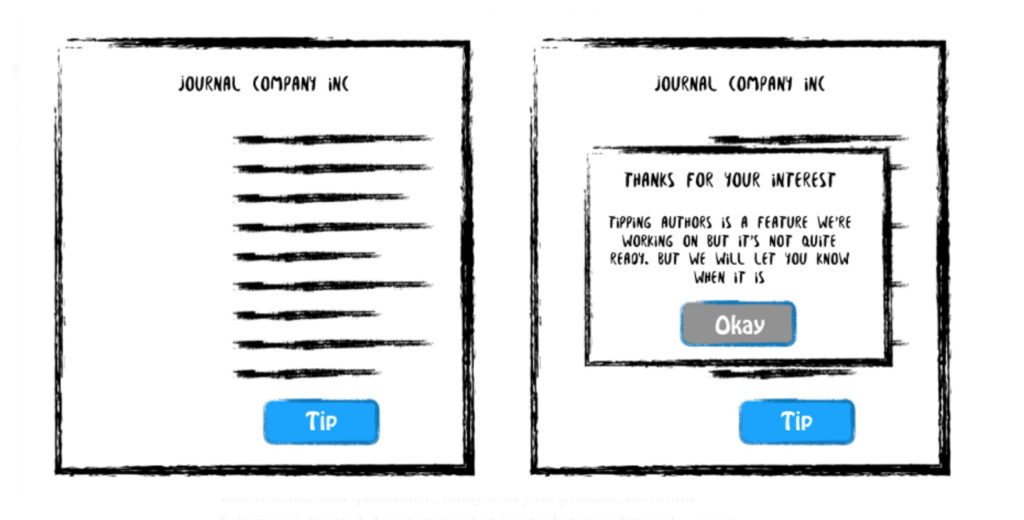

In this example, you could have run a painted door experiment—using an A/B testing tool to offer same-day delivery and recording the interest. You could even fulfill the orders manually for the test to validate the idea through to conversion. You would find out the impact it would have on customers and the business in a few weeks with little to no actual investment.
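For a painted door test, the headline number is the share of exposed visitors who pick the new option. A minimal sketch, assuming you can export exposures and clicks from your A/B testing tool, puts an approximate 95% Wilson score interval around that rate so you don’t over-read a small sample. The data and the `wilson_interval` name are hypothetical.

```python
import math

def wilson_interval(clicks, exposures, z=1.96):
    """Approximate 95% Wilson score interval for a click-through proportion."""
    if exposures == 0:
        return (0.0, 0.0)
    p = clicks / exposures
    denom = 1 + z**2 / exposures
    centre = (p + z**2 / (2 * exposures)) / denom
    half_width = z * math.sqrt(p * (1 - p) / exposures + z**2 / (4 * exposures**2)) / denom
    return (centre - half_width, centre + half_width)

# Hypothetical painted-door data: checkout visitors who saw the
# same-day delivery option, and how many selected it.
exposures, clicks = 4_000, 180
low, high = wilson_interval(clicks, exposures)
print(f"Interest rate: {clicks / exposures:.1%} (95% CI {low:.1%} to {high:.1%})")
```

The Wilson interval stays within 0 to 1 even for small counts, which is why it’s a common choice for click-through rates over the simpler normal approximation.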


If a test is successful, that’s when it goes into the roadmap for delivery—after you know full well the impact it will have. This approach may even help get it done more quickly. The business will want to realize the revenue uplift as soon as possible.

Don’t agree to deliver big, expensive CX projects until you have evidence that they’ll work.

Prioritize ideas to test

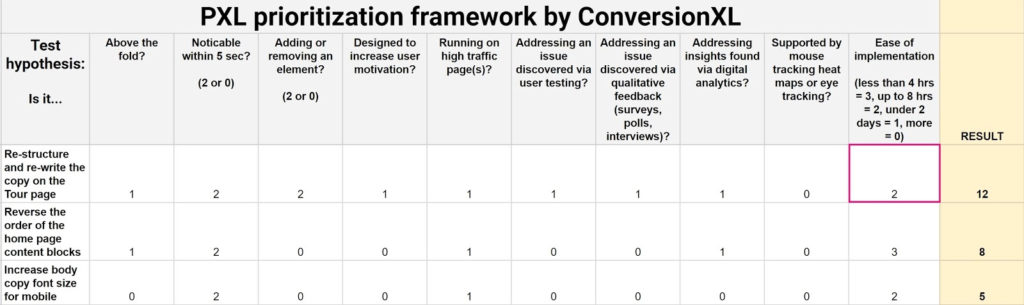

By now, you should have a list of hypotheses from your work in Month 1. It’s time to start prioritizing the list. There are many methods to help with prioritization, such as the PIE framework or ICE score, which we’ve written about previously. But, for me, triangulating the research findings to add weight to hypotheses alongside an effort rating is the way to go.

I’m not a fan of methods that require you to try to predict the impact of the test as a way of weighting them. This is almost always too hard to do accurately.

I am a fan of using the location of the experiment—the traffic it receives and its prominence on a page—to weight ideas. Using these factors allows you to rate the potential impact in a more objective way. You can use our PXL template to do just that.
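To illustrate location-based weighting, here is a hypothetical scoring function in Python. It is not the PXL template; it simply assumes three inputs in the spirit of the approach described above (traffic to the tested location, whether the change sits above the fold, and how many research sources back the hypothesis) and divides by an effort rating. The weights and ideas are made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class Idea:
    name: str
    weekly_traffic: int     # visitors to the page/location being tested
    above_the_fold: bool    # prominent placement on the page
    evidence_sources: int   # research methods that support the hypothesis
    effort: int             # 1 (trivial) to 3 (needs dev + design)

def score(idea, max_traffic):
    """Hypothetical weighting: location and evidence up, effort down."""
    traffic_points = 3 * idea.weekly_traffic / max_traffic  # scaled to 0..3
    prominence_points = 1 if idea.above_the_fold else 0
    return (traffic_points + prominence_points + idea.evidence_sources) / idea.effort

ideas = [
    Idea("Rewrite homepage value proposition", 50_000, True, 3, 1),
    Idea("Add size guide to product pages", 30_000, False, 4, 2),
    Idea("Redesign account settings", 2_000, True, 1, 3),
]
top_traffic = max(i.weekly_traffic for i in ideas)
for idea in sorted(ideas, key=lambda i: score(i, top_traffic), reverse=True):
    print(f"{score(idea, top_traffic):5.2f}  {idea.name}")
```

The design choice is that every input is observable (traffic from analytics, evidence from your research log), so none of them asks you to guess the test’s impact.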

Some ideas will be JDIs (Just Do Its), or things that don’t need to be tested but just need to be fixed (e.g., broken form validation on the website). These can be added to your roadmap for the developers to work on while you kick off your first few tests.

Ideation sessions

Get your whole CX team involved in ideation sessions—not just the UX designers but the analysts and developers, too. This helps the whole team understand the customer better and creates more innovative solutions.

The general flow of the session is as follows:

- Present the research-backed problem and hypothesis.

- Pin your data-informed customer personas and customer journey to the wall.

- Hand out paper and pencils to the team and get them to sketch out as many potential solutions to test your hypothesis as they can.

- Ask each person to present their best idea.

- Have another 10-minute sketching session to iterate on the ideas presented.

- Collect the final ideas to use in your test variations. The hypothesis is now ready to go into your CX roadmap and be tested.

Build your CX Roadmap

Take your prioritized list of hypotheses and work with your experimentation colleagues to understand the sample size and amount of time each test needs to run. You can use our sample size calculator to get the answers.
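If you want to see what a sample size calculator is doing under the hood, the standard two-proportion formula is short. This Python sketch (not our calculator’s code) estimates visitors per variant for a two-sided test at 5% significance and 80% power, then converts that into a run time. The baseline rate, minimum detectable effect, daily traffic, and the choice to round up to whole weeks are all assumptions for illustration. It uses `statistics.NormalDist`, available from Python 3.8.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline, relative_mde, alpha=0.05, power=0.8):
    """Visitors needed per variant for a two-sided two-proportion test."""
    p1 = baseline
    p2 = baseline * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

def estimate_duration_days(n_per_variant, variants, daily_visitors):
    """Days needed, rounded up to whole weeks to cover weekly cycles."""
    days = math.ceil(n_per_variant * variants / daily_visitors)
    return math.ceil(days / 7) * 7

# Hypothetical inputs: 3% baseline conversion, hoping to detect a 10% lift.
n = sample_size_per_variant(baseline=0.03, relative_mde=0.10)
print(f"{n} visitors per variant")
print(f"{estimate_duration_days(n, variants=2, daily_visitors=5_000)} days")
```

Note how sensitive the result is to the minimum detectable effect: halving the lift you want to detect roughly quadruples the sample you need.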

You’ll also need to consider spacing out tests so your customers don’t get bucketed into multiple test variations, which would mess up your results.
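One simple way to plan the spacing is to group roadmap tests by the audience they reach and run tests that share an audience back to back. The sketch below assumes each test is tagged with a single audience label and that the audiences don’t overlap; if they do, you’d need to serialize those tests too. The `schedule` function, names, and durations are hypothetical.

```python
from collections import defaultdict

def schedule(tests):
    """Assign each test a (start_day, end_day) window.

    Tests tagged with the same audience run back to back, so no customer
    in that audience is bucketed into two tests at once. Tests for
    different audiences may run in parallel (assumes audiences are disjoint).
    """
    next_free_day = defaultdict(int)  # audience -> first day it is free
    plan = []
    for name, audience, days in tests:
        start = next_free_day[audience]
        end = start + days
        plan.append((name, audience, start, end))
        next_free_day[audience] = end
    return plan

# Hypothetical roadmap entries: (test name, audience, duration in days),
# already in priority order.
roadmap = [
    ("Value proposition copy", "new visitors", 14),
    ("Loyalty banner", "returning customers", 28),
    ("Hero image", "new visitors", 21),
    ("Reorder shortcut", "returning customers", 14),
]
for name, audience, start, end in schedule(roadmap):
    print(f"{audience:<20} days {start:>2}-{end:<3} {name}")
```

Feeding in the durations from your sample size estimates turns this into a rough calendar for the roadmap.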

Days 61–90: Scaling out

What you’ll do:

- Scale up research and experimentation.

- Align team goals and metrics with CX behaviors and outcomes.

- Create a company-wide education program.

- Report the results of your first 90 days.

What you’ll achieve:

- Increase the velocity of research and experimentation to guide decisions on larger CX initiatives.

- Develop the skills and knowledge to be CX-focused across the company.

- Incentivize a company-wide CX focus.

- Get customer research insights and experiment results to share with the company.

- Gain support from your executive team by sharing the results of your CX work.

Scale up research and experiments

As your processes become slicker, you can scale up the number of experiments and research sprints. Your approach depends on the organization, but you might be able to embed these practices within other teams or functional areas (so they, too, can run experiments).

You’ll also have multiple test results by now. Some will validate larger CX initiatives; others will be inconclusive, freeing you to test different hypotheses. Either way, these outcomes will help you fill in future activities in your roadmap.

Education program launch

After spending two months in the business, you should have a good idea of the culture, attitudes toward CX, and how far decisions are driven by data. How much of a priority education is depends on where you’re working.

But if you don’t have buy-in, there’s little point doing the other work without improving that, too. Education and communication are your two tools to achieve this.

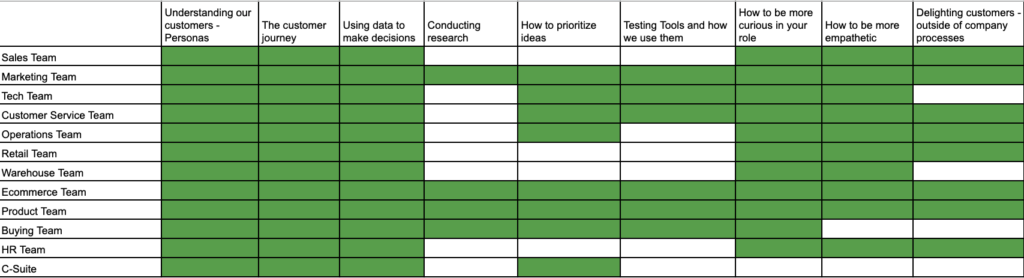

When it comes to designing an education program, adapt it to the different levels and skills that teams actually need. There’s no point putting the CFO in an SQL course, but a monthly presentation on how to interpret data and share the ROI from your activities would be perfect.

I use Excel to map out all of the teams along the y-axis, with all of the skills (soft and hard) that anyone might need to learn across the x-axis.

Fill in the matrix of who needs to learn what. Now you can design the content or seek external resources to meet your requirements. Check out CXL Institute for how we structure training courses around experimentation, and the Phillips Measurement Model for guidance on how to measure the impact of your program.
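If you’d rather script the matrix than keep it in Excel, the same idea fits in a few lines of Python: rows are teams, columns are skills, and inverting the matrix tells you who each training module is for. The teams, skills, ticks, and the `teams_per_skill` helper are hypothetical.

```python
# Hypothetical matrix: teams (rows) x skills (columns), True = needs training.
skills = ["Interpreting data", "SQL", "Research methods", "Experiment design"]
matrix = {
    "Finance":          [True,  False, False, False],
    "Marketing":        [True,  True,  True,  True],
    "Customer support": [True,  False, True,  False],
    "Engineering":      [False, True,  False, True],
}

def teams_per_skill(skills, matrix):
    """Invert the matrix: for each skill, list the teams that need it."""
    return {
        skill: [team for team, needs in matrix.items() if needs[col]]
        for col, skill in enumerate(skills)
    }

for skill, teams in teams_per_skill(skills, matrix).items():
    print(f"{skill}: {', '.join(teams) or 'no one'}")
```

Each line of the output is effectively a training brief: a module and its audience.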

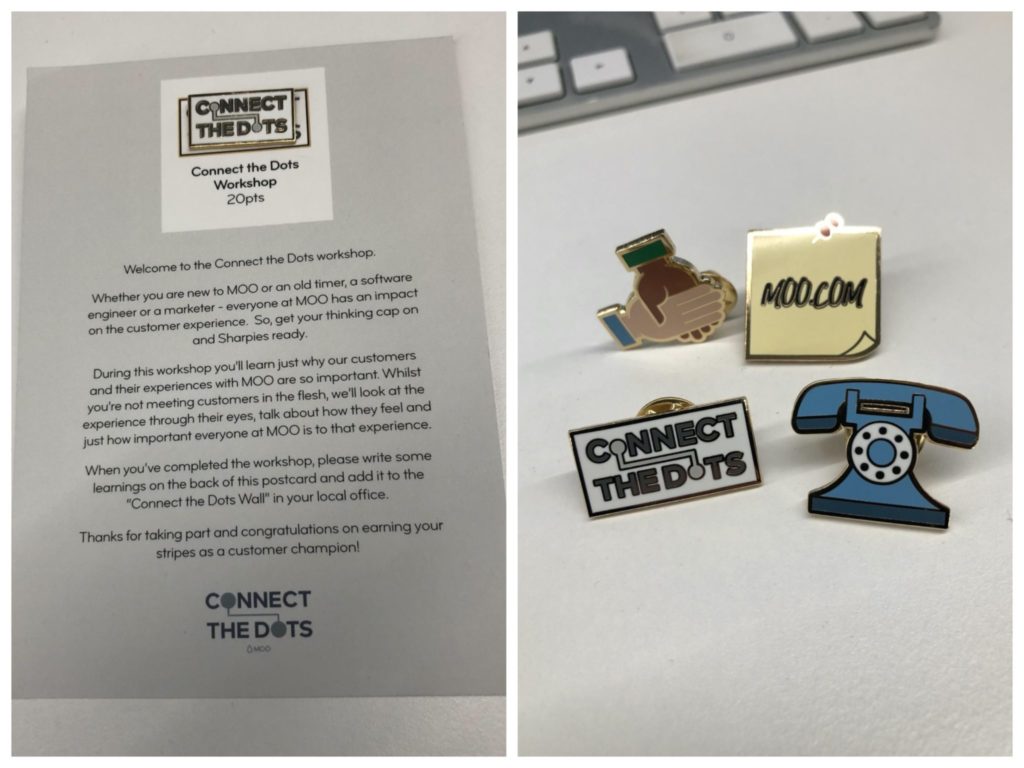

Standard e-learning courses do little to stoke the fires of CX advocacy. If you can, incentivize your teams to learn. Branding your education program can do wonders for engagement. Dan Moross, Director of Customer Experience at Moo, took inspiration from a cult classic:

I had this idea from the film Office Space where Jennifer Aniston reluctantly works at a TGI Fridays–type restaurant and has to collect badges or “Flair” to pin on her sash. We thought it would be fun to do something similar.

I created an initiative called “Connect the Dots.” There were nine elements. Each one was a different activity or a task or a workshop. And for each one, you’d get a postcard and a pin badge, which you could pin to your office chair as “chair flair.”

When it comes to incentives, you can reward individuals for taking part in the training, but, more crucially, you can incentivize them to display the behaviors or skills they’ve learned in their daily work.

Get together with team heads and HR to develop metrics that measure behaviors or outcomes. Bonus points if you can make these metrics transparent across the company. You can use OKR tools such as 7Geese to support this initiative.

Communications

Model the behaviors you want to see in the world (or at least your place of work). When you make decisions, always explain the data that they’re based on. When your team makes decisions, always ask them to present the data they used to make them.

They, in turn, will start displaying these behaviors with others. You know you’ve made it when you develop a company-wide catchphrase. Chang Chen, Head of Growth and Marketing at Otter.ai, explains how she helps others use customer insights throughout the company:

We record, transcribe, and summarize all our customer interviews, and share every single one with all our teams. We also share customers’ feedback from social and support channels.

It’s different when someone internally says “customers want this” or “customers work in this way” because now we all hear directly from the customers in their own words.

Report on results

Your final task is to create a briefing document for the C-suite: no more than a few slides. Share the revenue impact of your approach, and frame results in the context of your CX strategy (i.e., conduct research > create hypothesis > validate ideas > share learnings).

Show your decision-making process: you don’t want to appear as though your ideas just so happened to get the results you wanted. You followed a systematic approach based on data, prioritization, and statistically tested ideas, which led to a higher probability of success.

Tease them with what’s coming up next on your roadmap, and show them any larger CX projects that have been validated, alongside the annualized results they’ll generate once implemented.
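The annualized figure is usually simple arithmetic. A minimal sketch, assuming you know the weekly revenue flowing through the tested part of the journey and the relative uplift from the winning variant (both hypothetical here, as is the `annualized_uplift` name):

```python
def annualized_uplift(weekly_revenue, relative_uplift, weeks_per_year=52):
    """Naive projection: assumes the test-period uplift holds all year."""
    return weekly_revenue * relative_uplift * weeks_per_year

# Hypothetical: the tested checkout flow processes $80,000 a week,
# and the winning variant lifted revenue per visitor by 4%.
print(f"${annualized_uplift(80_000, 0.04):,.0f} per year")
```

Treat the result as an estimate rather than a promise: it assumes the uplift measured in the test holds for a full year, which isn’t guaranteed once a change is rolled out.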

Your final slide should reiterate what you need from them to continue producing results (e.g., more top-down support, additional resources).

Conclusion

It’s going to be a busy 90 days. But the above plan will help you set in motion the four areas you need to be successful in your role:

- Company-wide buy-in;

- Multidisciplinary team;

- Continuously optimizing your CX;

- Data-backed decisions that drive revenue.

Too many CX pros work on large initiatives rather than continuously improving their CX. They struggle to tie the impact of their work to results.

Instead, with this approach, you’ll be the C-suite’s employee of the month. Maybe you’ll even get some chair flair.