If you read this blog regularly, you probably don’t need an introduction to CRO or A/B testing. You know the major players and the best practices, and you’ve likely tested your fair share of ideas.

But, as an expert, you also know the persistent frustrations with current approaches. To name just two:

- Testing simply takes time.

- Our best instincts are often wrong.

In fact, only one in seven tests is actually successful.

But new advances in artificial intelligence might help.

Quickly, before we start, it’s worth saying a word about what we mean by “artificial intelligence.” After all, the term is a bit nebulous, and different people mean different things when they talk about it.

For us, AI means an intelligent system that makes autonomous decisions: it learns from, and acts on, the world around it.

For CRO, that means an AI that learns from user interactions and makes decisions about what to test next. It understands behavior and can prune bad ideas while lifting up good ones. And though AI comes in many forms, in this post we’ll dive into the one we built Sentient Ascend with: evolutionary algorithms.


A Primer on Evolutionary Algorithms

So, what are evolutionary algorithms?

Simply put, evolutionary algorithms (and, more broadly, the field of evolutionary computation) are a type of artificial intelligence that mimics Darwinian natural selection.

The algorithms evolve like organisms towards more ideal solutions, and their evolution works just like it does in the biological world: algorithms that are better adapted to solve a problem get to breed and produce better and better generations as time goes on, while worse algorithms are effectively removed from the population.

Algorithms are composed of individual “genes” (individual rules or code fragments). Good genes propagate over successive generations of algorithms, while non-performing genes, like non-performing algorithms, get washed out.
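To make this vocabulary concrete, here is a minimal genetic-algorithm sketch in Python. It is a generic textbook loop, not Sentient Ascend’s implementation. The all-ones toy target, the population size, the mutation rate and the function names are all assumptions chosen for illustration. Each genome is a list of binary genes. In each generation the fitter half breeds through crossover and mutation, and weaker genomes drop out.

```python
import random

# Hypothetical toy problem: evolve 20 binary "genes" toward all ones.
# Fitness is simply the number of genes set to 1.
GENOME_LENGTH = 20
POPULATION_SIZE = 30
MUTATION_RATE = 0.05


def fitness(genome):
    return sum(genome)


def crossover(parent_a, parent_b, rng):
    # One-point crossover: the child takes a prefix from one parent
    # and the remaining genes from the other.
    cut = rng.randrange(1, len(parent_a))
    return parent_a[:cut] + parent_b[cut:]


def mutate(genome, rng):
    # Flip each gene independently with a small probability.
    return [1 - g if rng.random() < MUTATION_RATE else g for g in genome]


def evolve(generations=40, seed=0):
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(GENOME_LENGTH)]
                  for _ in range(POPULATION_SIZE)]
    best_per_generation = []
    for _ in range(generations):
        # Selection: rank by fitness and keep the fitter half as parents.
        population.sort(key=fitness, reverse=True)
        parents = population[: POPULATION_SIZE // 2]
        best_per_generation.append(fitness(parents[0]))
        # Breeding: the best genome survives unchanged (elitism); the rest
        # of the next generation are mutated children of random parent pairs.
        children = [parents[0]]
        while len(children) < POPULATION_SIZE:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = children
    return best_per_generation


history = evolve()
print(history[0], history[-1])
```

The best genome is always copied into the next generation unchanged (elitism). As a result, the best fitness per generation never decreases, while crossover and mutation keep exploring new gene combinations.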

Fitness

Key to the evolution of algorithms and the selection of good genes is a notion of fitness.

Fitness is that characteristic that makes one species (or algorithm) better than another. In nature, this is usually defined as reaching the age of reproduction and successfully reproducing. But fitness can be anything — making money, a drug design that solves a medical problem — it’s whatever your goal is.

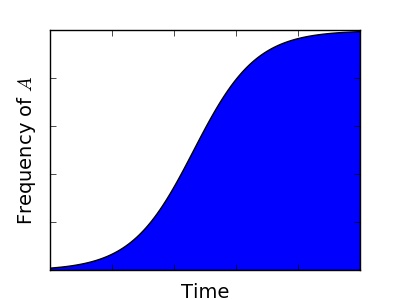

For example, the graph above shows how the frequency of genotype A, which has a 1% greater relative fitness than genotype B, increases over time.
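A simple selection model produces a curve of this shape. The sketch below makes three assumptions:

- There are two haploid genotypes.
- A has relative fitness 1.01 and B has relative fitness 1.0.
- A starts at a hypothetical frequency of 1%. The graph’s actual starting point isn’t stated.

Under this model, the odds p/(1 − p) of genotype A grow by a factor of 1.01 every generation.

```python
def next_frequency(p, s):
    """Frequency of genotype A after one generation of selection.

    A has relative fitness 1 + s; B has relative fitness 1.
    """
    mean_fitness = p * (1 + s) + (1 - p)
    return p * (1 + s) / mean_fitness


def generations_to_reach(target, p0=0.01, s=0.01):
    """Count generations until A's frequency first reaches `target`."""
    p, generations = p0, 0
    while p < target:
        p = next_frequency(p, s)
        generations += 1
    return generations


print(generations_to_reach(0.5))   # 462
print(generations_to_reach(0.99))  # 924
```

Even a 1% edge takes A from rare to dominant in a few hundred generations, which is why small fitness differences compound.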

It’s important to note that both the genes that make up the algorithms and the fitness metric are defined by the practitioner. These aren’t rogue algorithms taking whatever data they want from the internet and applying it haphazardly; they have discrete goals and ingredients.

Example from the World of Video Games

Let’s look at a simple but instructive example of these concepts in a program called MarI/O. MarI/O is an AI that tries to beat a level in a Mario Brothers game.

The discrete goal, of course, is to complete the level. That means that the further the AI gets in the level, the more “fit” or successful it is.

The decisions it makes are based on the actions a player could take in the game, namely, pressing a button to run, jump, or move in a certain direction. If Mario dies in three seconds? That’s not a particularly good algorithm. If he makes it further than the last generation did? Then you breed that new algorithm with other successful strategies, pruning the ones that do worse and breeding the ones that get a bit further. Do this enough times and MarI/O beats the level.
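The real MarI/O evolves neural networks using the NEAT method. The sketch below strips that down to the bare loop described above: it evolves a fixed sequence of button presses for a made-up one-dimensional level. The level layout, the two actions and every parameter are hypothetical. Fitness is how far Mario gets before dying. Each generation prunes the weaker half and breeds the survivors.

```python
import random

# Hypothetical one-dimensional level: '.' is ground, '#' is a pit that must
# be jumped, '^' is a low ceiling where jumping is fatal.
LEVEL = "..#...^..#.^^..#...^.#.."
ACTIONS = "RJ"  # R = run right, J = jump right


def distance_reached(genome):
    """Fitness: number of tiles cleared before Mario dies."""
    for position, (tile, action) in enumerate(zip(LEVEL, genome)):
        if tile == "#" and action != "J":
            return position  # fell into a pit
        if tile == "^" and action == "J":
            return position  # hit the ceiling
    return min(len(LEVEL), len(genome))


def mutate(genome, rate, rng):
    # Occasionally replace a move with a random button press.
    return "".join(rng.choice(ACTIONS) if rng.random() < rate else a
                   for a in genome)


def evolve(generations=100, population_size=40, rate=0.05, seed=1):
    rng = random.Random(seed)
    size = len(LEVEL)
    population = ["".join(rng.choice(ACTIONS) for _ in range(size))
                  for _ in range(population_size)]
    for _ in range(generations):
        # Prune: keep only the better-performing half of the strategies.
        population.sort(key=distance_reached, reverse=True)
        survivors = population[: population_size // 2]
        if distance_reached(survivors[0]) == size:
            break  # level beaten
        # Breed: survivors carry over; children mix two survivors' moves.
        children = list(survivors)
        while len(children) < population_size:
            a, b = rng.sample(survivors, 2)
            cut = rng.randrange(1, size)
            children.append(mutate(a[:cut] + b[cut:], rate, rng))
        population = children
    return max(population, key=distance_reached)


best = evolve()
print(best, distance_reached(best))
```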

And, spoiler alert, it actually does.

Product Design via Evolutionary Algorithms

Back in 2002, The Seattle Times wrote about a young company called Affinova that “has figured out how to harness the power of natural selection” for product evolution.

The article described the opportunities Crayola planned to pursue with the technology:

“The whole process seems like a mixture of Gregor Mendel and Dr. Frankenstein. Crayola will cross a population of top-selling felt-tip markers with a population of pens that have new genes, that is, new ideas dreamed up by Crayola’s designers. These new pens might have a different kind of cap or different taper in the barrel.

The genetically modified pens will be evaluated by Crayola’s customers and segregated into two groups: those customers like, and those they don’t.

The pens nobody likes will be sacrificed, but pens that are loved will be allowed to breed and have children of their own. The whole process then will be repeated.”

Affinova has come a long way since 2002 and was later acquired by Nielsen:

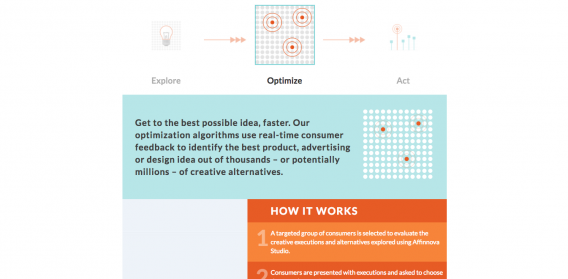

Of course, Affinova’s evolutionary approach relied on attitudinal data (what people say they prefer) to make decisions. That has interesting applications in advertising, product design, and formative research for new features and creative.

But let’s see how similar algorithms can be used in experimentation, where decisions are driven by behavioral data: what users actually do.

How AI Works in Experimentation

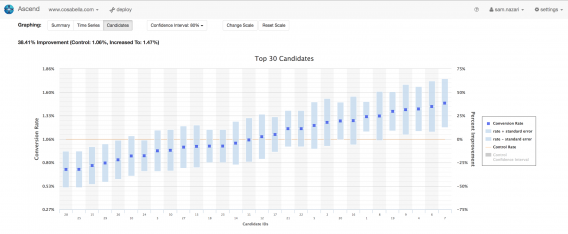

Our program Sentient Ascend uses similar concepts, but instead of applying them to beat a troubling level of a Nintendo game, Ascend helps websites convert better.

First, let’s start with a fitness measure (what constitutes success). Without one, the algorithms have no purpose. Since we’re talking CRO here, success is measured in increased conversions, be they sales leads, purchases, form submissions, you name it. That’s the easy part. Now let’s get into how genetic algorithms can test tons of ideas at once and arrive at a better-converting website.

Think of all the ideas you have for your site. Most of us are stuck testing one idea against another (hence A/B testing). With genetic algorithms, it’s different: you can input all your ideas at once and let your site evolve based on the genes you give it.
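One way to picture inputting all your ideas at once is to treat each page element as a gene and each idea as one possible value of that gene. The sketch below uses a hypothetical idea list, with made-up element names and values. It counts how quickly the possible combinations outgrow the number of individual ideas.

```python
from math import prod

# Hypothetical idea list. Each page element is a "gene"; each entry is one
# possible value for it, with "control" standing for the current version.
ideas = {
    "headline": ["control", "headline_b", "headline_c", "headline_d"],
    "cta_text": ["control", "cta_b", "cta_c", "cta_d", "cta_e"],
    "hero_image": ["control", "image_b", "image_c"],
    "cta_location": ["control", "above_fold"],
}


def new_idea_count(ideas):
    """Ideas you would otherwise test one at a time (non-control values)."""
    return sum(len(values) - 1 for values in ideas.values())


def search_space_size(ideas):
    """Distinct pages the ideas can combine into (one value per element)."""
    return prod(len(values) for values in ideas.values())


print(new_idea_count(ideas), search_space_size(ideas))  # 10 120
```

In this example, ten individual ideas already yield 120 possible pages, counting the control itself. Testing those one pair at a time doesn’t scale.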

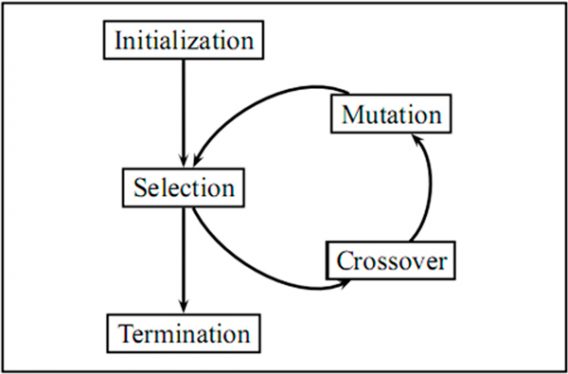

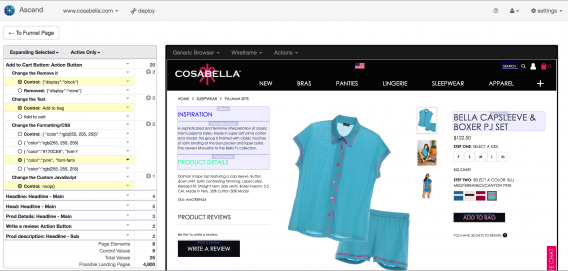

So Ascend works like this. First, you enter each of your ideas into Ascend (headline copy, button color, removed elements, new images, and so on). Then Ascend starts by testing each change individually. If you want to try three new headlines, it tests each one against the conversion goal you’ve selected. If you want to test four new CTAs, it tests those individually too. The same goes for CTA location, images, you name it. After this first round of testing, Ascend knows which ideas have good genes, namely, which perform as well as or better than your control.
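The first round can be sketched as a filter: measure each single change against the control and keep the ones that convert at least as well. The counts below are hypothetical. Comparing observed rates directly is a deliberate simplification. A real system needs a statistical criterion to separate genuine winners from noise, and the post doesn’t say which criterion Ascend uses.

```python
# Hypothetical first-round results: (conversions, visitors) for the control
# and for each single change tested on its own against the control page.
control = (200, 10_000)
single_change_results = {
    ("headline", "headline_b"): (230, 10_000),
    ("headline", "headline_c"): (180, 10_000),
    ("cta_text", "cta_b"): (205, 10_000),
    ("cta_text", "cta_c"): (150, 10_000),
    ("hero_image", "image_b"): (200, 10_000),
}


def conversion_rate(result):
    conversions, visitors = result
    return conversions / visitors


def good_genes(results, control):
    """Changes whose observed rate is at least the control's (no stats test)."""
    baseline = conversion_rate(control)
    return [change for change, result in results.items()
            if conversion_rate(result) >= baseline]


print(good_genes(single_change_results, control))
```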

Here’s where it gets interesting. Next, Ascend starts breeding those ideas together. For example, it will display a winning headline and a promising image together and evaluate how well they perform as a pair, testing all the combinations of variables that outperformed your control. It then selects the winners from that population and keeps breeding winners with other winners.
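Breeding can be sketched in two parts:

- Enumerate every page that combines first-round winners.
- Cross two winning pages by taking each element’s value from one parent or the other.

The winner lists are hypothetical. Uniform crossover is one common choice of breeding operator. The post doesn’t say which one Ascend uses.

```python
import itertools
import random

# Hypothetical first-round winners per element. "control" stays available so
# a candidate can leave an element unchanged.
winners = {
    "headline": ["control", "headline_b"],
    "cta_text": ["control", "cta_b"],
    "hero_image": ["control", "image_b"],
}


def candidate_pages(winners):
    """Every combination of winning values, excluding the pure control."""
    elements = list(winners)
    for values in itertools.product(*(winners[e] for e in elements)):
        page = dict(zip(elements, values))
        if any(value != "control" for value in page.values()):
            yield page


def breed(parent_a, parent_b, rng):
    """Uniform crossover: each element's value comes from one parent."""
    return {element: rng.choice([parent_a[element], parent_b[element]])
            for element in parent_a}


pages = list(candidate_pages(winners))
print(len(pages))  # 7
child = breed(pages[0], pages[-1], random.Random(0))
print(child)
```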

But something else important happens here too: mutation.

Some of the ideas you entered originally might have failed only because they weren’t paired with the right variables.

For example, a certain headline might not work unless it’s shown together with a certain hero image. So rather than ignore ideas that didn’t work at first forever, Ascend “mutates” your website by adding those variables back in to confirm that they really are lower performers. This breeding, recombination, and mutation continues for generations until Ascend converges on a winning candidate. At that point, it’s best practice to run a test comparing that candidate (a specific combination of variables) against your control to verify the findings.
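Mutation can be sketched as occasionally swapping an element’s value for any idea that was originally entered. That includes first-round losers, so they get another chance in a new combination. The idea list and the per-element mutation rate are hypothetical. The post doesn’t specify how Ascend chooses what to reintroduce.

```python
import random

# Every idea originally entered, including the ones that lost in round one.
all_ideas = {
    "headline": ["control", "headline_b", "headline_c", "headline_d"],
    "hero_image": ["control", "image_b", "image_c"],
}


def mutate(page, all_ideas, rate, rng):
    """Return a copy of `page` where each element is, with probability
    `rate`, replaced by a value drawn from *all* ideas for that element,
    so early losers can be retried alongside new partners."""
    mutated = dict(page)
    for element, options in all_ideas.items():
        if rng.random() < rate:
            mutated[element] = rng.choice(options)
    return mutated


page = {"headline": "headline_b", "hero_image": "image_b"}
print(mutate(page, all_ideas, 0.3, random.Random(42)))
```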

Case Study: Classic Car Liquidators

Ascend excels in situations where there are many variables to test. After all, just as in evolution, more options mean more possibilities for success.

A great example is a seven-week test Classic Car Liquidators (CCL) ran on their site. CCL sells, unsurprisingly, classic cars, but it also runs an affiliate program with eBay: when shoppers can’t find their dream car on CCL, the idea is that they follow the eBay affiliate link and CCL gets a cut. They started with this:

But only 0.88% of users clicked on the promotion. CCL used Ascend to optimize the banner, testing 61 versions of 9 different promotional elements, which amounts to nearly 29,000 possible combinations. After running the test for seven weeks, they ended up with this:

The uptick? A 434% increase in CTR.
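A 434% increase is a relative lift, not a gain of 4.34 percentage points. Assume it is measured against the 0.88% baseline mentioned above. Under that assumption, the arithmetic works out as shown below. The post doesn’t state the final CTR, so treat the result as implied rather than reported.

```python
def relative_lift(baseline_rate, new_rate):
    """Relative change from baseline_rate to new_rate (4.34 == +434%)."""
    return (new_rate - baseline_rate) / baseline_rate


def rate_after_lift(baseline_rate, lift):
    """Rate implied by applying a relative lift to a baseline rate."""
    return baseline_rate * (1 + lift)


# Assumption: the 434% figure is a relative lift over the 0.88% baseline CTR.
implied_ctr = rate_after_lift(0.0088, 4.34)
print(f"{implied_ctr:.2%}")  # 4.70%
```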

Realistically, a series of A/B tests couldn’t have found this exact design in under two months. There were too many variables being tested, and, notably, evolutionary algorithms find the best combination of ideas rather than the best single idea. Note how little of the control survives in the improved version. That said, the approach has its limits.

No Silver Bullet: Things to Keep in Mind

While testing a ton of ideas at once can save you a lot of time, it takes some effort up front. When you compress a year or two of testing into a month, you also compress the setup: the QA, coding, approvals, and ideation for many more ideas all happen at once. And if you’re trying ambitious tests, like multipage design or layout ideas, QA certainly takes a while.

Conversely, there can be a downside if you don’t test enough ideas: you’re not leveraging the power of evolution if you test only a couple.

The freedom evolution gives sometimes tempts people to try, well, worse ideas. Instead of culling a few bad headlines, some folks try everything rather than concentrating on what they already do in A/B testing: generating quality hypotheses.

Creative still matters. Ascend only tries combinations of your changes as it searches for the best performers; it isn’t going to write new copy or do anything you don’t expressly tell it to. It evolves with the genes you give it.

As for segmentation: yes, you can segment audiences and test against any specific one. In addition, through integration with analytics, you can see how different segments react to various design candidates even if you didn’t optimize for a particular segment.
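Post-hoc segment analysis comes down to grouping results by segment and candidate. The sketch below assumes a hypothetical export of visits as (segment, candidate, converted) tuples. It is not the format of any particular analytics tool.

```python
from collections import defaultdict

# Hypothetical analytics export: one (segment, candidate, converted) per visit.
visits = [
    ("mobile", "control", False),
    ("mobile", "control", True),
    ("mobile", "candidate_1", True),
    ("mobile", "candidate_1", True),
    ("desktop", "control", True),
    ("desktop", "candidate_1", False),
]


def conversion_by_segment(visits):
    """Conversion rate for every (segment, candidate) pair."""
    totals = defaultdict(lambda: [0, 0])  # key -> [conversions, visits]
    for segment, candidate, converted in visits:
        totals[(segment, candidate)][0] += int(converted)
        totals[(segment, candidate)][1] += 1
    return {key: conv / n for key, (conv, n) in totals.items()}


for key, rate in sorted(conversion_by_segment(visits).items()):
    print(key, f"{rate:.0%}")
```

With real traffic you would also report the number of visits in each cell, since small segments produce noisy rates.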

Conclusion

In the coming decade, artificial intelligence will be everywhere.

It already drives our search engines, social media, and recommendation algorithms. Personal assistants and chatbots are making massive and tangible progress. The list goes on and on. And AI is coming to conversion rate optimization, too.

That said, it’s nothing to be worried about. So much of the press coverage of AI is Chicken Little-esque: AI is coming for our jobs, and we should be afraid, very afraid. But AI isn’t coming for our jobs; it’s coming to make us better at them. It won’t replace CRO professionals, but it will give them the ability to try more and be more successful.