After about 2.5 years, today is my last day working on growth and content at CXL.

It’s been nothing but an awesome experience – I’ve been able to work on impactful projects, learn a ton about optimization and digital marketing, and work alongside the brightest minds in CRO.

To celebrate my experience and to pass on some of my learnings, I’ll share the top 7 lessons I’ve learned while working here.

1. Process is Everything

We talk a lot about process at CXL. As Peep is prone to say, “amateurs focus on tactics while the pros follow processes.”

Systems thinking runs through CXL, and we’re taught to focus not only on shiny tactics or popular trends, but on a robust process that consistently produces results over time. What we’re trying to do is maximize resources and outputs by focusing on the inputs that we can control.

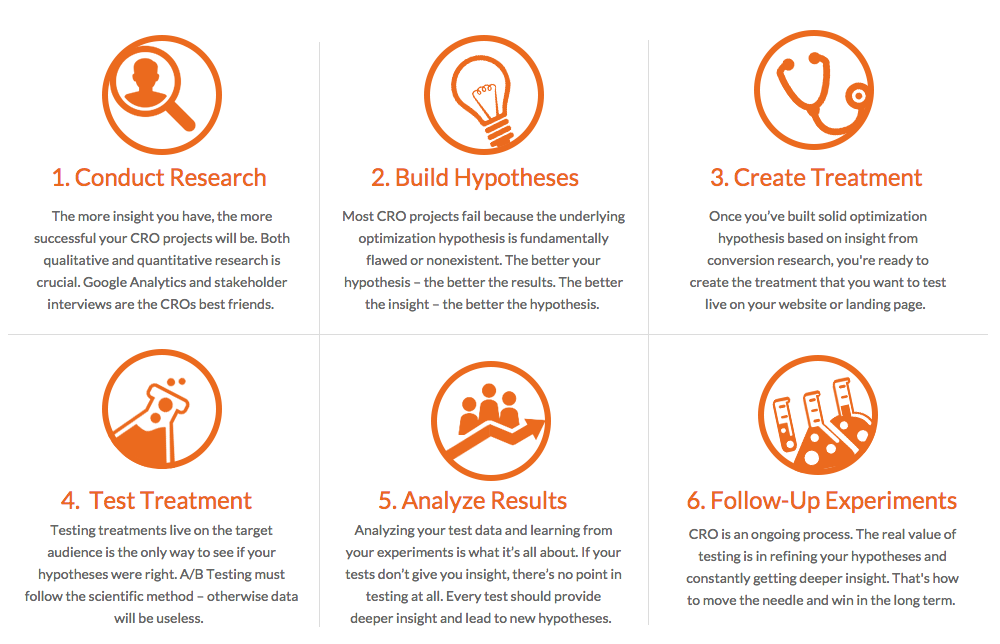

For conversion optimization, we have a process that looks something like this one:

We also have processes for conversion optimization research, quality assurance, and prioritization. These help maximize the outputs of the program in its entirety instead of over-focusing on a single experiment. They also help us optimize our own processes, because we can measure the costs and returns at each step of the journey.

Because we have a rigorous and structured process, we’re able to constantly tweak and optimize it as well, as long as we’re focusing on the levers or points of impact:

- Test velocity.

- Test win rate.

- Average win per test.
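To make those three levers a bit more concrete, here’s a rough sketch of how they might combine into one program-level number. This is my own toy model, not a formula CXL uses: it assumes every winning test delivers the same average lift and that wins compound multiplicatively. The function name and all of the inputs are hypothetical.

```python
def expected_program_lift(tests_per_year: int, win_rate: float, avg_win: float) -> float:
    """Toy model: cumulative lift from a year of testing.

    Assumes (hypothetically) that each winning test yields the same
    relative lift `avg_win` and that wins stack multiplicatively.
    """
    expected_wins = tests_per_year * win_rate  # test velocity x test win rate
    return (1 + avg_win) ** expected_wins - 1  # compound the average win per test


# Made-up inputs: doubling test velocity while holding the other levers fixed.
baseline = expected_program_lift(tests_per_year=24, win_rate=0.2, avg_win=0.05)
faster = expected_program_lift(tests_per_year=48, win_rate=0.2, avg_win=0.05)
print(f"baseline: {baseline:.1%}, doubled velocity: {faster:.1%}")
```

The point of the sketch is only that each lever is an input you can measure and push on separately, which is what makes the process itself optimizable.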

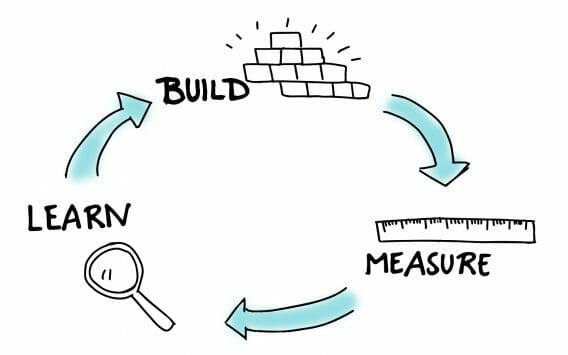

Without going too deeply into the specifics of a growth process, I should also note that you come up with similar looking processes in areas outside of conversion optimization, like content marketing and growth. Usually, the process boils down to something like the “Build → Measure → Learn” framework popularized by The Lean Startup…

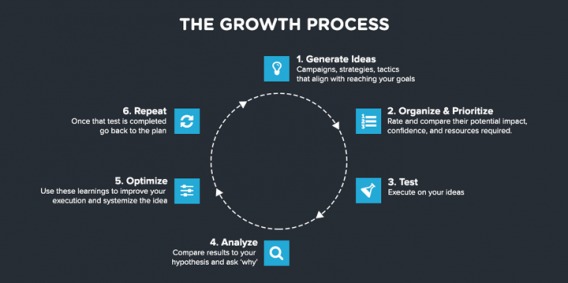

Take a look at some other models to see what I mean. Here’s one from Ryan Gum:

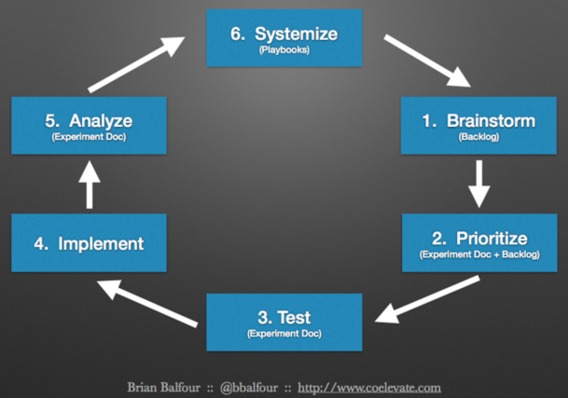

And here’s another from Brian Balfour:

Having a process for growth, content, optimization, whatever, helps us avoid “tactical hell,” where we fall prey to shiny distractions, new trends, and the fallacy of “good ideas.” What’s the value of one A/B test victory if we’re operating in a culture that doesn’t value the experimentation process?

Similarly, you can’t view conversion optimization as an isolated discipline. Rather, you have to view it in relation to the rest of the organizational system.

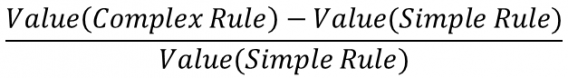

It’s also important to build a system in which you can measure particular nodes and isolate their effects, but also look at how different pieces fit together and interact. In doing so, you can also detect the marginal increases in complexity you add to a system with, say, more targeting rules.

Shanelle also quoted Scott Adams, the Dilbert merchant and self-proclaimed Trump persuasion analyst, but I’ll do so as well…

Losers have goals. Winners have systems.

2. Speed & Execution Over Perfection

I studied advertising and public relations at university, and if you’ve ever seen Mad Men, what they taught in university hasn’t changed too much since then. Big ideas, big campaigns, lots of upfront account planning.

If you’re reading this, you’re probably experienced at conversion optimization or at least the new landscape of digital marketing, so you realize the problem with this.

Of course, you can’t throw out the baby with the bathwater – I learned a ton that has been useful to me and will continue to be – but working at CXL taught me the value of speed over the crafting of the perfect idea or strategy.

After all, what good is a perfect idea if it never gets implemented? It’s essentially useless.

This is apparent in general, but this lesson became even more salient when we launched CXL Institute, essentially a startup within the company that required rapid iteration and learning. We couldn’t afford to spend weeks perfecting a marketing strategy, so we prioritized speed and execution and ended up learning very quickly what worked and what didn’t.

There’s clearly a balance here, which is why Zuck changed Facebook’s motto from “move fast and break things,” to “move fast with stable infrastructure” (though probably as much because of their company size increase as a philosophical change of mind). You can’t ship total crap in the name of speed. You have to set yourself up to learn. You have to run minimum viable tests…

3. Minimum Viable Tests (Finding Cheap Ways to Test & Learn)

Ideas are great, but execution is better. Similarly, ideas aren’t great unless they actually work, and we don’t know if they’ll work until we try them out. That’s a big benefit of experimentation, of course: the mitigation of risk and the encouragement of innovation.

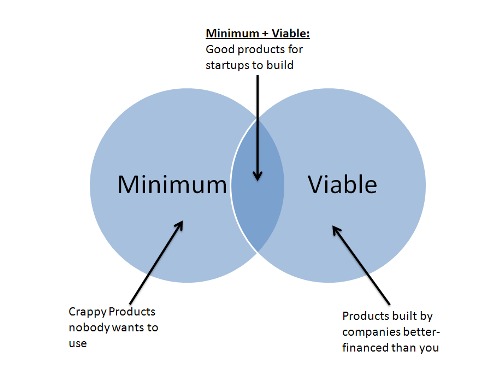

But you can take this idea a bit broader with the idea of minimum viable tests. I’m stealing the concept, of course, from the Minimum Viable Product ethos:

With minimum viable tests, you’re essentially asking the question, “what’s the cheapest and fastest way that I can gather enough data to make an informed decision?”

You can ask this with:

- Marketing campaigns

- Emerging channels

- Product design

- Management processes

- Sales scripts

…and anything, really.

In optimization, we’re essentially operating in a realm of natural uncertainty, and what we’re trying to do is determine how much uncertainty we can reduce with data (say, from extra conversion research to come up with better hypotheses or from an experiment itself and the certainty we gain implementing an experience).

Beyond that, though, we need to ask, “what’s the value of the reduction in uncertainty?” and furthermore, “is that value greater than the cost of the time and resources?”

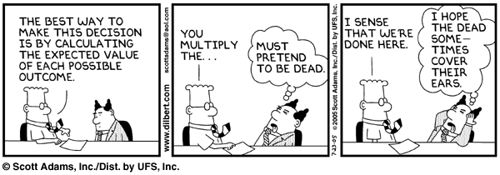

What I’m getting to here is that at the heart of optimization is decision theory, and I can use the concept of expected value to explain that.

Here’s a simple demonstration. Let’s say I give you the opportunity to roll a die for $1, and every time you roll a 3 you win $5. Is it a good decision to take the roll?

In this case, no.

To calculate the expected value, we can take the win rate (⅙) times the reward ($5), which gives us $0.83. That’s less than $1, so the expected value is less than the cost.
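If you’d rather see that arithmetic spelled out, here’s a minimal sketch of the die example. The function name is just illustrative, and I’m using exact fractions so the ⅙ doesn’t pick up rounding noise.

```python
from fractions import Fraction


def expected_value(win_probability: Fraction, reward: Fraction) -> Fraction:
    """Expected payout of a single bet: chance of winning times the reward."""
    return win_probability * reward


cost = Fraction(1)                                 # $1 to roll the die
ev = expected_value(Fraction(1, 6), Fraction(5))   # win $5 when you roll a 3

print(f"expected payout: ${float(ev):.2f}, cost: ${float(cost):.2f}")
print("take the bet" if ev > cost else "pass")
# prints:
# expected payout: $0.83, cost: $1.00
# pass
```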

In conversion optimization, we generally have two levers in this equation: cost and impact. If the expected impact is greater than the cost, we can usually take the bet. But this works in marketing campaigns, too.

For example, let’s say you have an idea for a content campaign. What you’re going to do is set up a Facebook Ads University (you sell Facebook marketing services, or something). This is an expensive endeavor, obviously. Think of the time you’d have to spend with development, content curation, and promotion…

However, the impact is also quite high (or let’s assume it is). You can dramatically increase the number of leads you’re generating while also using the content for those further along in the funnel as well as educating current clients.

So what can we do? Reduce the cost of the experiment. In this case, there are a few things we could do to reduce uncertainty before committing:

- Put up a landing page for the university and gauge interest with targeted traffic.

- Find data from others who have implemented similar campaigns.

- Ask our audience what their interest in such a program would be.

- Run a minimum viable test using a proxy experience (in this case, a series of webinars).

All of these tactics are, in one way or another, an attempt at reducing the uncertainty of our decision to either implement the FB university idea or to abandon it. There’s a whole art, math, and science to this process, but I like to consider minimum viable tests that 1) are cheap, 2) are controllable, 3) are scalable, and 4) imitate the actual experience that we’re testing.

For those reasons, in this case we’d probably want to use a series of webinars to perform a minimum viable test on the university idea. This way, you can gauge interest in the video education format, get practice with actual execution and production, and easily scale out the production if it works.

That’s just one example. You can use this mentality with any idea you come up with. Essentially, what’s the cheapest way we can test it out to see if it will or won’t work?

To paraphrase an old value investing adage, try to find opportunities to “buy a dollar at $0.50.”

4. In Times of Universal Deceit…

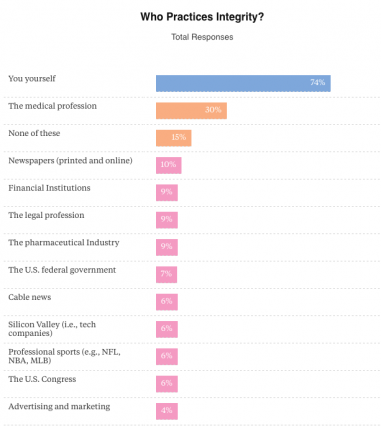

There’s a lot of bullshit out there in the marketing space – which, if you told that to anyone outside the marketing space, they’d say, “well, yeah, of course.” But still, it’s troubling.

It seems like growth and optimization, possibly because of their relatively high barrier to entry, have been affected more so than other crafts. We consistently see articles with titles like:

- 59 A/B tests that always win.

- How we got a 500% conversion lift.

- 99 experts reveal their #1 growth hack to 5x your business.

That’s the hyperbolic end of it, but it’s really no different when a conference speaker walks on stage and tells you something they know not to be completely true, but it’s something that makes them and their business look good. Intellectual dishonesty runs amok.

Even common wisdom can be sometimes misleading, or at least inadequate for your specific situation (and there are different “right” ways of doing things).

This results in some pretty poisonous conversion optimization myths, with those new to the industry left especially susceptible. But the good news is that, according to basic economics, because the supply of true and authentic content is so low, it’s hyper valuable.

In fact, I’ve been thrilled to work at CXL because of the level of intellectual honesty that runs through the company, from the top down.

Furthermore, because of the company culture here, our editorial style, and also those I’ve met while working at CXL, I’m motivated to carry authenticity and honesty with me as I move through my career. The industry can even continue to pump out BS content and ideas, but as long as there are strong voices to speak truth to power, we’ll be alright (and CXL will continue to be one of those voices).

You don’t have to practice “radical honesty,” but you should mean what you say and know what you’re talking about (or be okay with saying that you don’t know, and honestly trying to learn – humility might be a lesson except this article isn’t a book and has to end at some point).

5. Don’t Discount Small Wins

Let’s go back to two of my previous points quickly. One, there’s lots of BS content out there. Two, process is important to de-emphasize the individual idea or experience.

When we put those two together, we can almost entirely dismiss those “How we got a 1000% win” blog posts as either lying, useless, or irrelevant. What are you going to do with that information?

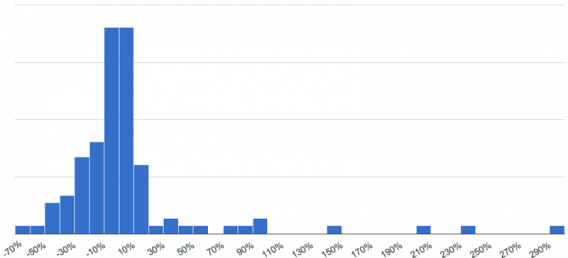

It’s actually quite rare to get impressive conversion lifts (see: Twyman’s Law. If it looks too good to be true, there’s probably something fishy going on). The fact is, at least according to data from Experiment Engine, most tests come up inconclusive.

But when you get a small win, assuming your test was run correctly and the results are valid, that’s no small victory.

You need to look at it from a higher level, and not just at the level of a single experiment. I actually can’t articulate it better than how Peep said it in a previous article:

Peep Laja:

“Here’s the thing. If your site is pretty good, you’re not going to get massive lifts all the time. In fact, massive lifts are very rare. If your site is crap, it’s easy to run tests that get a 50% lift all the time. But even that will run out.

Most winning tests are going to give small gains—1%, 5%, 8%. Sometimes, a 1% lift can result in millions of dollars in revenue. It all depends on the absolute numbers we’re dealing with. But the main point in this: you need to look at it from a 12-month perspective.

One test is just one test. You’re going to do many, many tests. If you increase your conversion rate 5% each month, that’s going to be an 80% lift over 12 months. That’s compounding interest. That’s just how the math works. 80% is a lot.

So keep getting those small wins. It will all add up in the end.”
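The compounding math in Peep’s quote is easy to check for yourself. Here’s a quick sketch (the function name is mine), assuming the same relative lift lands every single month, which is of course an idealization:

```python
def compounded_lift(monthly_lift: float, months: int) -> float:
    """Total relative lift after compounding the same monthly lift."""
    return (1 + monthly_lift) ** months - 1


print(f"{compounded_lift(0.05, 12):.1%}")  # prints 79.6%
```

So a 5% lift each month works out to just under 80% over the year, versus the 60% you’d get by simply adding up twelve 5% gains.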

This point also emphasizes the importance of a process, and the importance of improving our input levers like test velocity. Ronny Kohavi, GM and Distinguished Engineer at Microsoft, and Stefan Thomke, professor at Harvard Business School, just wrote about this in a great article for HBR:

“More than a century ago, the department store owner John Wanamaker reportedly coined the marketing adage “Half the money I spend on advertising is wasted; the trouble is that I don’t know which half.”

We’ve found something similar to be true of new ideas: The vast majority of them fail in experiments, and even experts often misjudge which ones will pay off.

At Google and Bing, only about 10% to 20% of experiments generate positive results. At Microsoft as a whole, one-third prove effective, one-third have neutral results, and one-third have negative results.

All this goes to show that companies need to kiss a lot of frogs (that is, perform a massive number of experiments) to find a prince.”
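To put a rough number on the frog-kissing, here’s a small sketch of how the odds of landing at least one winner grow with the number of experiments. It leans on two simplifying assumptions of mine that aren’t in the HBR article: every experiment has the same chance of winning, and experiments are independent of each other. I’m plugging in the low end of the 10% to 20% range quoted above.

```python
def chance_of_at_least_one_win(win_rate: float, experiments: int) -> float:
    """Probability that at least one of `experiments` independent tests wins."""
    return 1 - (1 - win_rate) ** experiments


# Hypothetical program sizes, using a 10% per-experiment win rate.
for n in (1, 5, 10, 20):
    print(f"{n:>2} experiments: {chance_of_at_least_one_win(0.10, n):.0%}")
```

Real win rates vary from test to test, so treat this as intuition for why test velocity matters rather than as a forecast.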

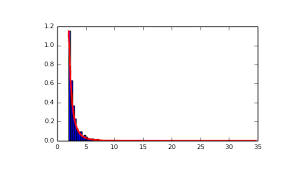

6. The Gap Between Those in the Know and Those Not is Massive

The difference in testing program sophistication between those just getting started and Amazon is massive. It’s apparent from our conference attendee feedback that a small percentage is very advanced while most are not. Most websites don’t have the traffic and resources to run massive amounts of tests, but often the best advice is aimed at sites that do.

There’s an unequal distribution of knowledge and skill, which is totally fine, but it’s a good thing to know when you’re taking advice or trying to apply someone else’s learnings to your situation.

That’s all to say: there’s no substitute for actually knowing what you’re talking about.

In certain disciplines, conversion optimization being one of them, it’s almost impossible to fake your way through an article (especially a 3,000-word CXL article). There’s no way you can get around actually learning CRO.

If the skill level of any given field resembles a Pareto distribution, I’d much rather be in the small percentage of people that are the most advanced (and if I’m not there, having the honesty and humility to admit that and learn from those who are).

This isn’t something you can just say and choose, though. It takes blood, sweat, tears, and focus. It takes a long time to master a single aspect of conversion optimization, such as A/B testing.

After we’ve run our first few tests, it’s not time to celebrate our brilliance and achievement, but rather to put our heads down, be humble, and continue improving and learning (the alternative would be to succumb to Dunning-Kruger).

7. The Rabbit Hole Runs Deep

While I’ve learned a ton here at CXL – about content, growth, CRO, analytics, and so many tactical things I’ll bring forward with me – there’s always more to learn.

Every time I’ve started down a path to learn something new, after the first hurdles, trips, and struggles, when I first start to get some semblance of true understanding, I’m always amazed at how much more there is. While I’ve learned a lot, there are massive holes in my knowledge and skill set and there will always be gaps.

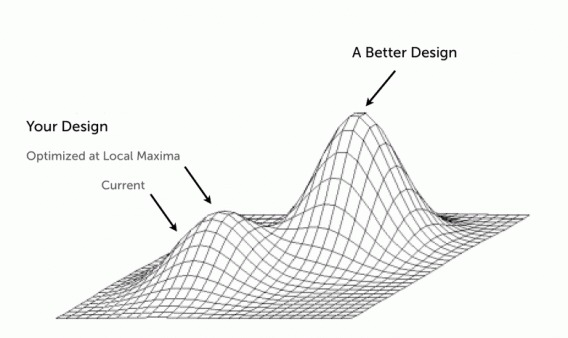

I could be wrong about this, but it seems that many people will go to the point where they’re “passable” and simply maintain their level of skill and knowledge (your own personal local maximum, if you will). But as Shanelle wrote, “If you’re not getting better, you’re getting worse.”

That’s why it’s so important to invest in yourself in terms of further education (see: CXL Institute), new experience, and opportunity. If you don’t feel challenged and stretched, it’s likely you’re plateauing. You’ve gotta keep the muscles guessing to continue achieving gains.

There’s so much more to learn, build, do. This is the beginning of a long journey for me, and I’m glad it started here.

Conclusion

I learned so much at CXL and it’s been quite an enjoyable experience working next to Peep and team (except for Peep’s tendency to throw pencils to get my attention…).

This list isn’t comprehensive, but these are the lessons that really stick out in terms of their impact.

As for me, I’m heading to HubSpot to work on growth. I’ll be digging through data, running experiments, and exploring traction at a different scale. Perhaps I’ll still write a blog post every now and then.

So thanks to Peep for giving me this opportunity, thanks to anyone who has read any of my articles (and especially thanks to anyone who has reached out because of them or commented), thanks to all the data, optimization, and growth experts I’ve met who have been so generous with their advice and expertise, and thanks to the CXL team for being smart, ambitious, and interesting.

Awesome write-up Alex, you learned a lot – make it happen at Hubspot and thank you for including me in some great articles you did at the CXL blog

Thanks, Ton! You’ve been super generous with your expertise and advice, and I really appreciate it

Alex, you will be missed, not just because your content is great but also because generally good guys are hard to find.

Best of luck and we will await seeing your next triumph.

Thanks, Andrew! You’ve been super generous with your advice and insights and it’s been invaluable. I’ve learned a ton from you in the last couple years, as well.

Great learnings (very familiar :-)) Alex! And all the best in your new job. It sounds like a great new adventure.

Thanks, Annemarie!

This is my 1st time commenting here, but your posts is really awesome and feels like you gain so much. I will be reading a lot here and will check out the institute.

Good luck on your next endeavor ;)

Thank you!

Hey Alex,

Great write up – as always. You’ve done a superb job here at CXL and I know you’ll kick some ass at HubSpot.

It was great meeting you at the last two CXL Live conferences. Also, thanks for all your help with the courses inside the Institute.

See you around!

Thank you – Hope to see you at the next CXL Live as well!

Alex, you have done amazing things at CXL and you will continue to do amazing things at Hubspot. It’s been awesome to see your growth, and I look forward to following the next chapter.

Thanks Tommy! I took a lot of cues from you on how to produce good content. Hope to bump into you at a conference in the future, possibly involving a good karaoke session

Looks like some great experience you have gotten working there.

Nice post, love the insights. Peep’s been around for a while so must have been great to work with him.

Thanks for sharing