No one ever said optimizing any website is easy. But at least if you’re working on an eCommerce site, you have clear metrics to hit. You have goals on which (probably) everyone agrees.

But what if you’re an analyst, optimizer, or digital marketer and working on a site without a clear conversion?

It’s a pretty different ballgame.

The Problems with Non-Financial Measurement

Let’s think of a few sites that might have this problem – sites that may provide information, or act as internal networks, or maybe just have fully offline businesses. Off the top of my head, I can think of:

- Universities

- Government agencies

- Intranets at large companies

- Content sites

- Nonprofits

- Large agencies

Of course, some of these can have financial conversions (e.g. donations for a nonprofit). But many times, they’re dealing with non-financial metrics or competing online goals.

You can even be a heavily commerce-based organization, like Edelman, and still face this problem: how do you measure success on a website where visitors can’t buy anything, request a demo, or even submit a lead?

There are usually two large problems with organizations set up like this:

- They don’t consciously choose their online goals.

- They don’t consciously choose how to measure them.

The first is, of course, a big problem – and it’s not exclusive to sites without a financial conversion. But organizations running a site without a clear online conversion, specifically, often fail to answer the question, “What is our website used for?”

For the second point, because there’s no clear goal articulated, there’s no clear focus on what specifically to measure and optimize. According to Christopher D. Ittner and David F. Larcker writing for HBR, businesses tend to measure too many things when they don’t strategically choose what to focus on:

Christopher D. Ittner:

“Businesses that do not scrupulously uncover the fundamental drivers of their units’ performance face several potential problems.

They often end up measuring too many things, trying to fill every perceived gap in the measurement system. The result is a wild profusion of peripheral, trivial, or irrelevant measures.

Amid this excess, companies can’t tell which measures provide information about progress toward the organization’s ultimate objectives and which are noise.

A leading home-finance company, for example, implemented an “executive dashboard” that eventually grew to encompass nearly 300 measures. The company’s chief operating officer complained, “There’s no way I can manage my business with this many measures. What I’d really like to know are the 20 measures that tell me how we are really doing.”

What Are Your Goals?

In the Digital Analytics Power Hour podcast, the hosts posed the following thought experiment to help you determine how to analyze and optimize a site like this:

Imagine you wake up in a world where you don’t have a website. In this world, what would be your argument for creating one? What would you tell your boss to get them to invest in a digital presence?

This reframes things from scratch and makes you think about why you’re online in the first place. The answer could vary widely.

A government agency could exist to provide accurate information to individuals trying to find voting locations or their local DMV. A nonprofit could be there to promote awareness of their cause to the wider world. An intranet could be a portal of information and communication that leaves no employees in the dark.

Point is, thinking about and discussing this will help you move forward into choosing metrics, setting up reports, and optimizing based on insights.

One way to pinpoint your mission? The Digital Analytics Power Hour guys suggest looking at the mission statement. More specifically, isolate the nouns and verbs and you’ll have a good idea of why you exist online.

Best Friends Animal Society exists to “Save Them All,” and its site focuses on donations.

Feeding America is about “Working Together to End Hunger,” and it seems that is primarily done through donations (much easier to optimize for than informational goals).

Presumably, a site like Forbes has the goal of producing trustworthy and valuable business content (and ad revenue). They’d measure that by pages per session, attitudinal satisfaction metrics, and content consumption metrics (more on that in a bit):

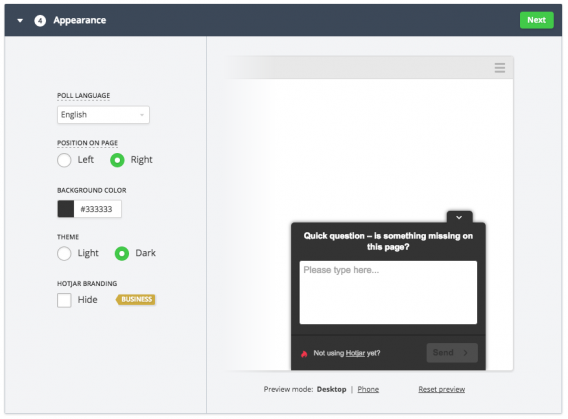

Another thing you can do is find out why people are even coming to your site. Until you align what your site is doing with the actual reason people visit, you’re going to struggle. This is largely a qualitative endeavor. Throw a survey or poll up and find out. A popular way to infer that information is to ask visitors if something is missing or if there is a bit of info they can’t find.

So think about your goals as an organization, especially online.

Once you’ve specified that, your important metrics shouldn’t be super hard to define. Trying to increase support and action for your nonprofit? Track donations. Trying to build a world-class communications organization? Measure information consumption (especially gated content like eBooks) and qualified candidates visiting the careers page.

Now let’s talk about how to specifically measure more nebulous metrics.

Improve the User Experience

If you can’t put a dollar value on your goals, they tend to be perceived as less important. That perception is wrong, but it is hard to define the value of a metric that has no direct financial value. So your time on site is higher… is that a good or a bad thing? Traffic is higher, which is probably a good thing, but do we know if it’s the right traffic?

It’s a lot foggier than saying you increased your average order value or conversion rate.

One way to measure positive change on your site, however, is to systematically improve the user experience. I’m sure there’s an example out there somewhere where someone measurably worsened the UX of a site and increased conversions, but I’ve never heard of such a thing.

Improving user experience is too big a topic for a subsection of an article; there are entire courses on it. If you want some light reading on UX though, here are some recommendations to get you started:

- Great User Experience (UX) Leads to Conversions

- 8 Ways To Measure Satisfaction (and Improve UX)

- Usabilla’s User Experience blog

- User Testing’s blog

- MeasuringU

- Nielsen Norman Group

We at CXL Institute have also been publishing a ton of free UX research lately, so check it out.

Attitudinal Insights Matter

James Valentine, Former Conversion Optimization Lead at LDS.org, said that in addition to behavioral data like click-throughs, search queries, and engagement, his team also used more attitudinal measures:

James Valentine:

“We also tested into ways to measure. For example, we created a “was this helpful? yes/no” widget that we would deploy along side some of our tests so we could see if changes we were making were moving a needle. We added a comment box after they said yes or no so we could start to understand better their intent, which helped fuel better hypotheses.”
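If you run a widget like this alongside a test, one simple way to read the results is to compare the share of “yes” answers between variations. Here’s a minimal sketch in Python, assuming you can export yes/no counts per variation. The counts and function names are made up for illustration, and the two-proportion z-test is my choice of method, not necessarily what the LDS.org team used.

```python
from math import erf, sqrt


def helpful_rate(yes: int, no: int) -> float:
    """Share of widget responses that answered "yes"."""
    total = yes + no
    return yes / total if total else 0.0


def two_proportion_z(yes_a: int, n_a: int, yes_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in "yes" rates.

    Assumes both groups have responses and the pooled rate is not 0 or 1.
    """
    p_a, p_b = yes_a / n_a, yes_b / n_b
    pooled = (yes_a + yes_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF computed from the error function.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical (yes, no) response counts per test variation.
control = (420, 180)
variant = (465, 135)

z, p = two_proportion_z(control[0], sum(control), variant[0], sum(variant))
print(f"control: {helpful_rate(*control):.1%}, variant: {helpful_rate(*variant):.1%}")
print(f"z = {z:.2f}, p = {p:.4f}")
```

The number alone won’t tell you why a variation felt more helpful. That’s what the comment box is for.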

Optimizing Information for Maximum Real Life Impact

You’ve got your site aligned with why users are visiting in the first place. You’ve got user experience research in place and are actively optimizing to increase key UX metrics. That’s an awesome start.

But how do you measure the impact of a fully informational site?

The best example I can think of – the most challenging, anyway – is that of a church. So I asked James Valentine, Former Conversion Optimization Lead for LDS.org, and here’s how he explained it:

James Valentine:

“The product management teams didn’t always necessarily know what they wanted. I mean, when your site is all about changing and inspiring hearts and mind, there’s a number of different things a visitor might do that we might consider a successful visit to our site. So I tried to help break it down step-by-step.

For example, the homepage was showing content, the overall site might have a wide range of ultimate success metrics that we may or may not be able to track, but the homepage was presenting content so, shallow it might be, let’s start with click-through. Can we influence users with our images, with our headlines, etc? The clickthrough itself wasn’t that valuable of a metric in terms of conversions, but in terms of insights into what the user valued, it was extremely useful.

Then we started to zoom out a little. Since our ultimate goals weren’t very quantifiable, I leaned heavily on our analytics-testing integration (Adobe’s analytics for Target) to help quantify the trade-offs. We would run a round of tests and I’d place those results on a spectrum for the product team to prioritize.

These metrics were content-engagement oriented and included:

- Video view rates

- Percent of users who logged in

- Social shares

- Submitted feedback (included content/relevance/ratings)

- Percent who used the internal search

Some of these required extra analysis to be meaningful. For example, when trying different things with the navigation or removing on-page links we’d compare differences in internal search queries between test groups to see if we were ‘moving people’s cheese.'”
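The search-query comparison Valentine describes can be approximated with very little code. The sketch below is an assumption about how you might do it, not his team’s actual process: it takes the raw internal search queries from each test group, converts them to shares, and surfaces the queries whose share shifted the most. The query lists and function names are hypothetical.

```python
from collections import Counter


def query_shares(queries: list[str]) -> dict[str, float]:
    """Normalize queries and return each one's share of the group's searches."""
    counts = Counter(q.strip().lower() for q in queries if q.strip())
    total = sum(counts.values())
    return {q: n / total for q, n in counts.items()} if total else {}


def biggest_shifts(control: list[str], variant: list[str], top: int = 3) -> list[tuple[str, float]]:
    """Queries whose share changed most (variant share minus control share)."""
    a, b = query_shares(control), query_shares(variant)
    diffs = {q: b.get(q, 0.0) - a.get(q, 0.0) for q in set(a) | set(b)}
    return sorted(diffs.items(), key=lambda kv: abs(kv[1]), reverse=True)[:top]


# Hypothetical internal search logs; imagine the variant removed a
# "Calendar" link from the navigation.
control_queries = ["contact", "calendar", "donate", "contact", "events"]
variant_queries = ["calendar", "Calendar ", "calendar", "contact", "events"]

for query, shift in biggest_shifts(control_queries, variant_queries):
    print(f"{query}: {shift:+.0%}")
```

A query that suddenly takes up a much bigger share of searches in the variant is a decent hint that you moved something people were looking for.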

Measuring Information Effectiveness and Content Consumption

Non-commerce sites tend not to go past traffic acquisition and time-on-site. But there are certainly other measures.

Take this publishing company, Salem Eagle, for example. They have no real online conversions (other than Contact, perhaps). But they have tons of information about their conservative book publishing mission. I can only assume that a victory for them would be for interested parties to fully consume the “about” sections for each of their publishing branches.

I’m not sure if they’re trying to attract new members or if they’re working with existing authors (my guess is the former), but they can also use newer integrations with CRM data to determine if the right people are consuming the right content. They can also optimize based on qualitative feedback from on-site surveys.

They could even put a Usabilla feedback form at the bottom of their informational posts and track quality over time quantitatively as well as collect qualitative feedback and possibly even requests for more information. This is what I meant above, too, when I mentioned that attitudinal metrics can be important in this context.

These measures, in combination with traffic, exit-rates, etc., might tell a fuller story in terms of the value they’re providing visitors.

To get even more granular, and especially for content-heavy sites like government agencies, nonprofits, and content publishing sites, you can track content consumption with enhanced eCommerce. We’re doing it at CXL, and it’s incredibly illustrative as to what content people are actually consuming and at what rate.

Here’s an article on how to implement it.

What if You Have Multiple Goals?

Many sites have multiple goals. For simplicity’s sake, let’s use universities as an example. They usually have three big goals:

- To be a knowledge base for existing students

- To acquire new student applications

- To receive more donations from alumni

Steven Gaittens, Digital Marketing Manager at Georgetown University School of Continuing Studies, says they have a Chief Digital Officer who oversees all of the technology and the website as a whole. A few people manage their respective areas of the website and work with a good amount of autonomy.

Therefore, they can optimize different “sub-sites” with a high degree of relevance and accuracy to their visitors’ needs. For his role, here’s what he told me he works on:

Steven Gaittens:

“We have a series of goals in Google Analytics that we track conversions on, including the following: Request Information submissions, Event RSVP submissions, How to Apply button clicks, Application starts, Application submits, etc. We haven’t placed a monetary value on our goals so we don’t have any metrics like AOV, but we can track the effectiveness of our ad campaigns by the number of deeper funnel conversions they produce. We use campaign codes to track contacts that we’ve received through advertising to see if they end up becoming students.

For some of our advertising efforts that are less about hard conversions and more for creating awareness and promoting our brand, we look at engagement goals like average time on site, pages per session, and bounce rate. For our native advertising we look most closely at our scroll tracking goal, which fires when a visitor has reached the end of the article, signifying that they have engaged with our content (and hopefully enjoyed it).

So I guess the short version of my answer is that we do track our advertising efforts down the funnel. We pride ourselves on being a data-driven higher ed institution, so that’s really the only way to go about it.”
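To make the scroll-tracking idea concrete, here’s a rough sketch of how you might compare native ad campaigns by how often visitors reach the end of the article. It assumes you can export one row per session with a campaign code and a flag for whether the scroll goal fired. The `Session` structure, campaign codes, and numbers are all hypothetical, and this isn’t Georgetown’s actual reporting setup.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Session:
    campaign: str      # campaign code from the ad URL (hypothetical values)
    reached_end: bool  # True if the scroll-tracking goal fired


def read_through_rates(sessions: list[Session]) -> dict[str, float]:
    """Share of sessions per campaign that reached the end of the article."""
    totals: dict[str, int] = defaultdict(int)
    completed: dict[str, int] = defaultdict(int)
    for s in sessions:
        totals[s.campaign] += 1
        completed[s.campaign] += int(s.reached_end)
    return {c: completed[c] / totals[c] for c in totals}


# Hypothetical exported sessions for two native ad campaigns.
sessions = [
    Session("native_a", True), Session("native_a", False),
    Session("native_a", True), Session("native_a", True),
    Session("native_b", False), Session("native_b", True),
]

rates = read_through_rates(sessions)
for campaign, rate in sorted(rates.items()):
    print(f"{campaign}: {rate:.0%} of sessions reached the end of the article")
```

With real data you’d want far more sessions per campaign before reading much into the difference.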

Sometimes, this level of autonomy with different arms of the same site could result in a confused approach with people measuring the same thing using different methods. As an HBR article put it, “It’s not uncommon for business units within the same company to use different methodologies to measure the same thing.”

But it can also work out perfectly well, especially if you have a manager ensuring homogeneity in analysis methods and reporting.

Conclusion

In a world where your metrics aren’t glaringly obvious, you can still unearth informative user data. You can still optimize a site and improve its performance.

Now, I started this article with the premise that a nonprofit would have the same goals as a university, which would have the same goals as a church. That’s not necessarily true. What all of these types of organizations have in common, though, is that they need to deal with strategic ambiguity.

James Valentine summed it up well, stressing the importance of demonstrating a “level of understanding of how our users used the site, which was insight we didn’t have before.” Then you can reverse engineer a strategic approach to your testing program that lets you track, analyze, and improve metrics that matter for your organization.
