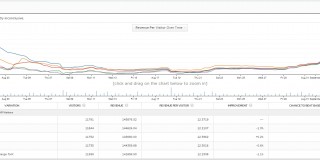

Statistical Significance Does Not Equal Validity (or Why You Get Imaginary Lifts)

A very common scenario: a business runs dozens of A/B tests over the course of a year, and many of them “win.” Some tests show a 25% uplift in revenue, or even higher.

Yet when you roll out the change, revenue doesn’t increase by 25%. And 12 months after running all those tests, the conversion rate is still pretty much the same. How come?